The Transformers

A complete architectural breakdown of Transformers, paired with a step-by-step guide to coding BERT from the ground up.

The Transformer Architecture

1.1 Introduction to Large Language Models

1.2 Anatomy of the transformer block

1.3 Tokenization

1.4 Byte Pair Encoding

1.5 Word Embedding

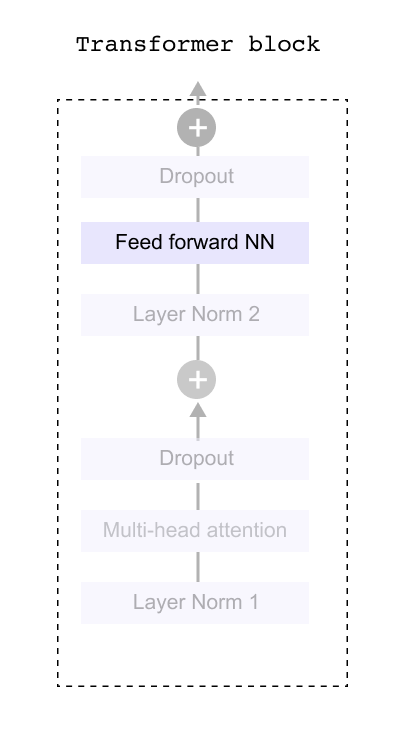

1.6 Transformer Block

1.7 The Need for Attention Mechanism

1.8 Self Attention Mechanism

1.9 Understanding the Input Embedding Matrix

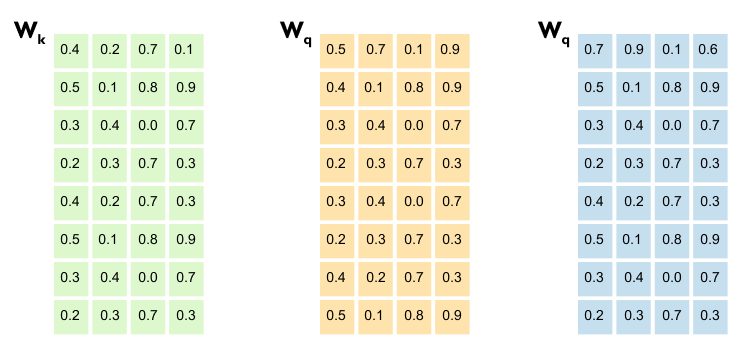

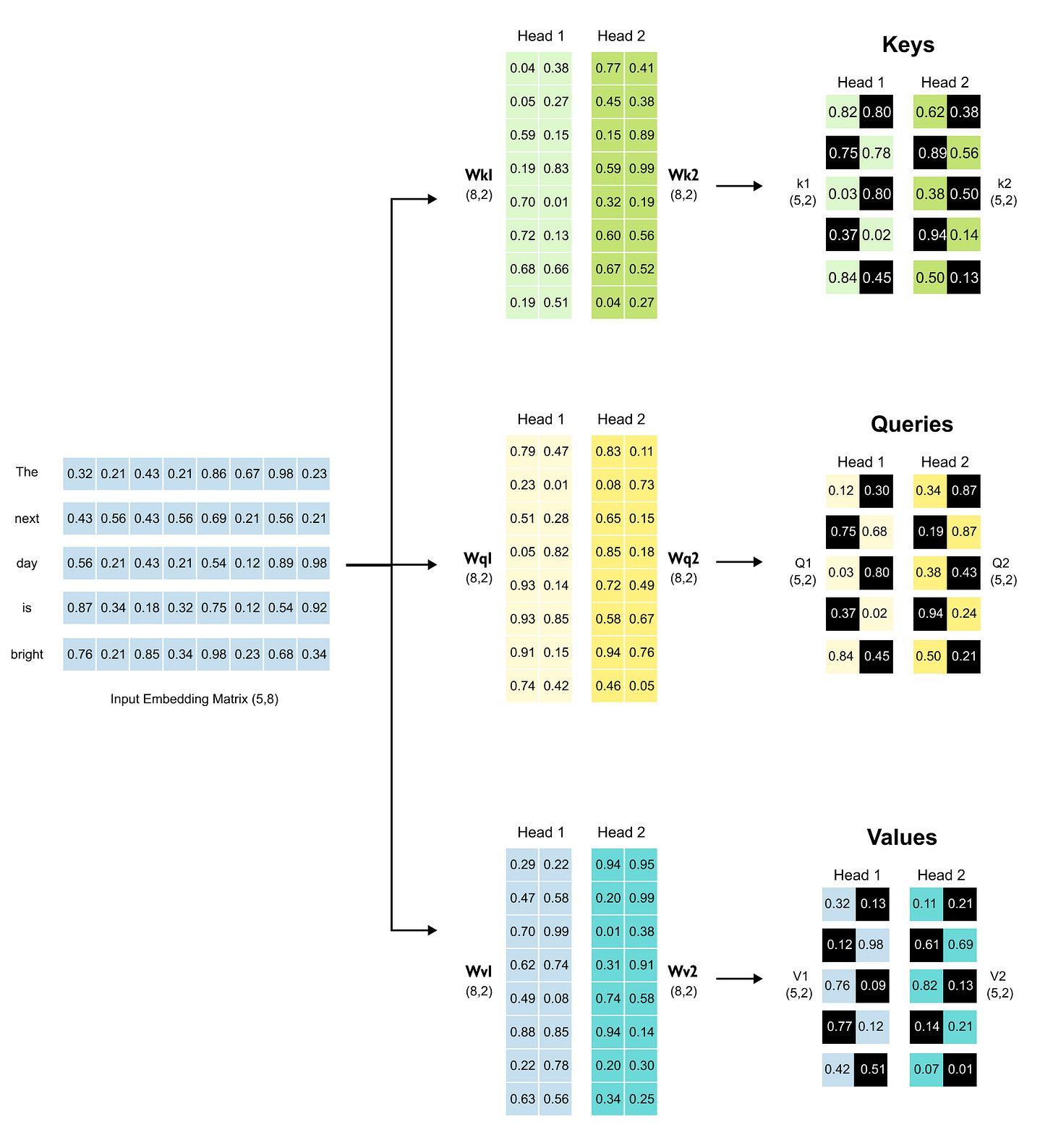

1.10 From Embeddings to Queries, Keys & Values

1.11 A Quick Note on Matrix Multiplication

1.12 Why Scale Attention Scores?

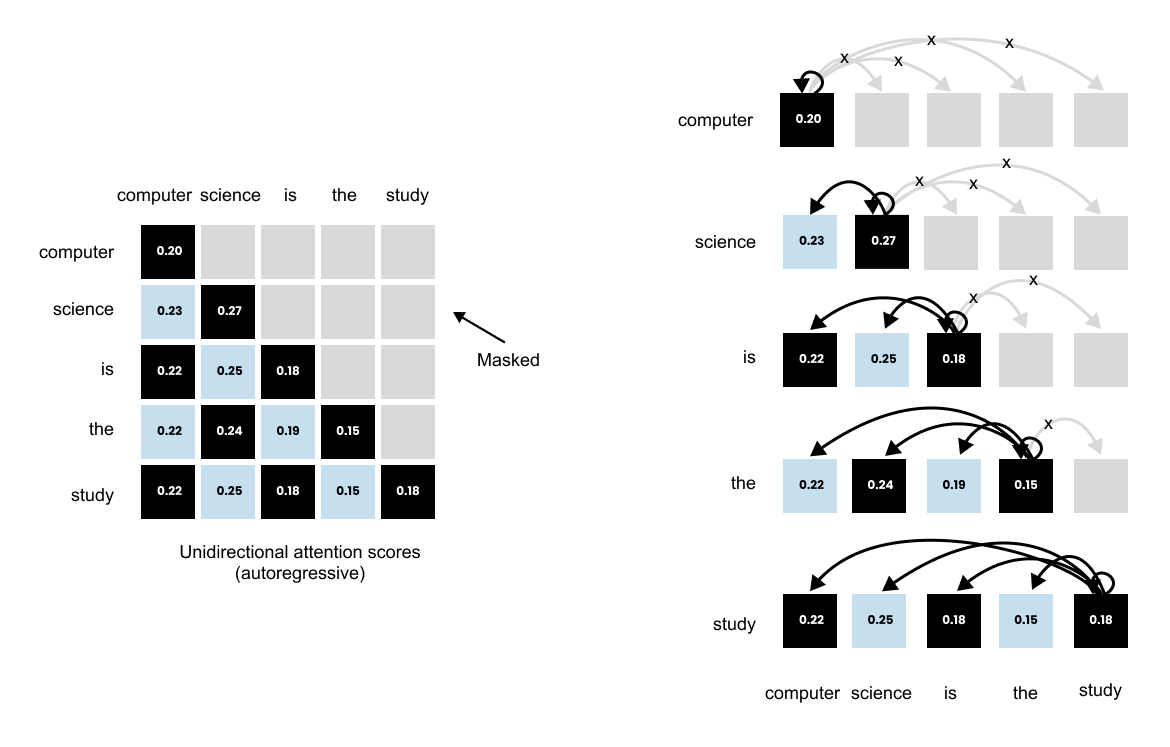

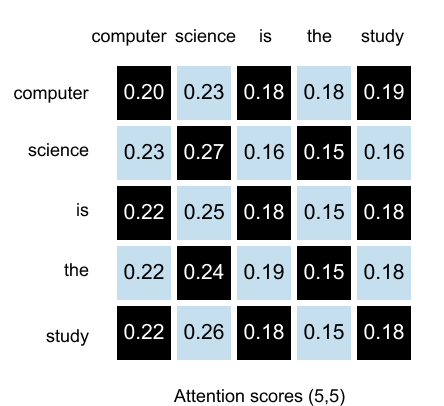

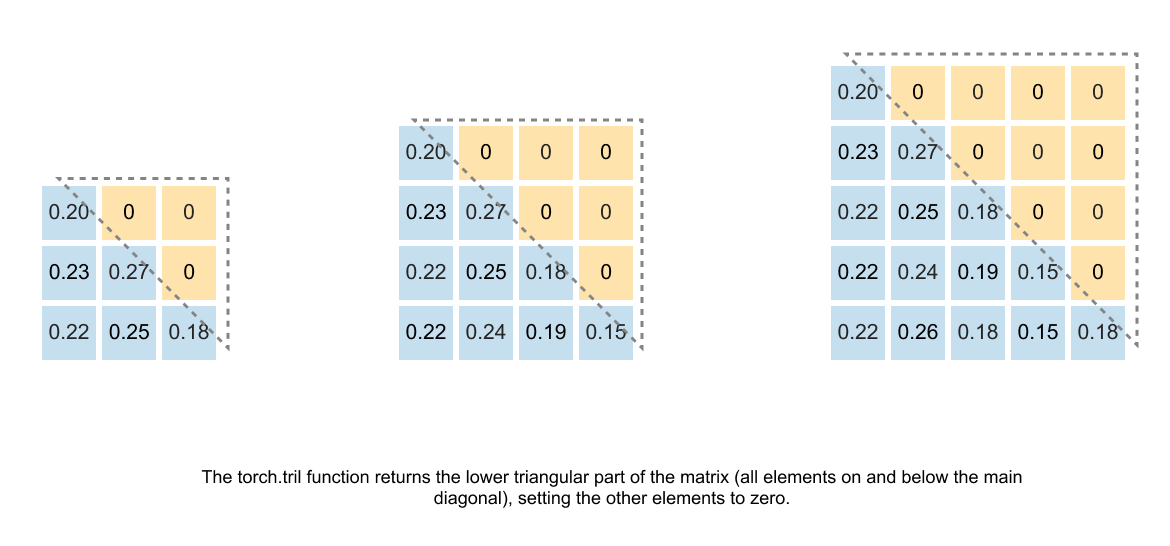

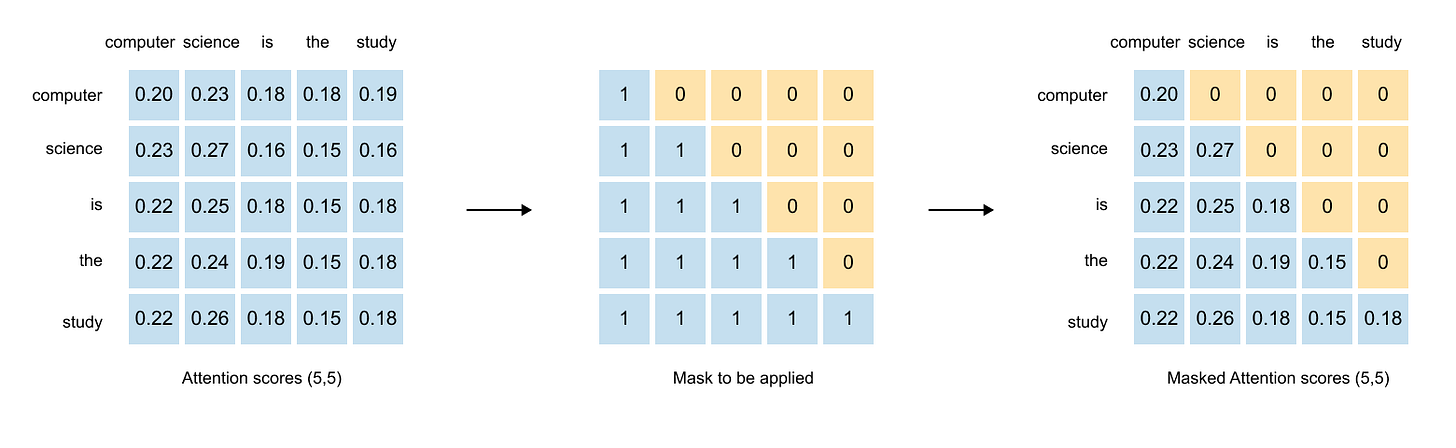

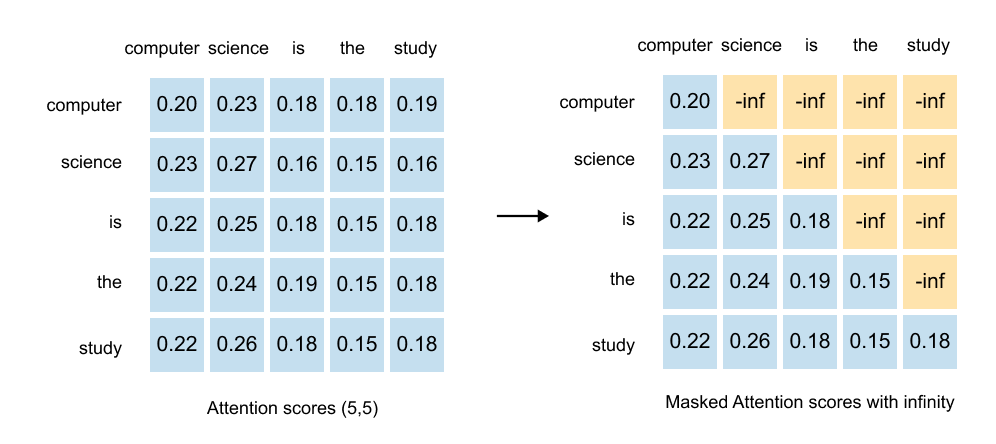

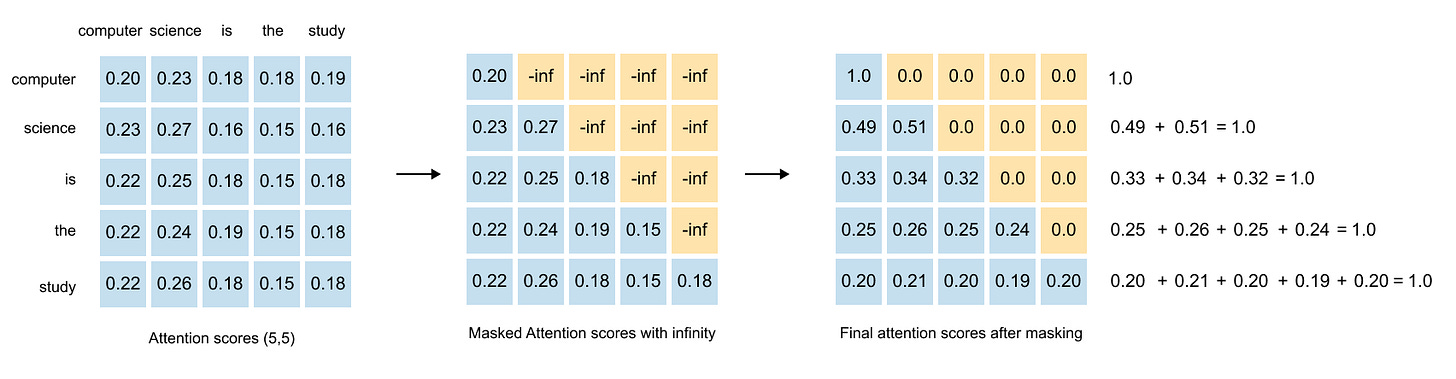

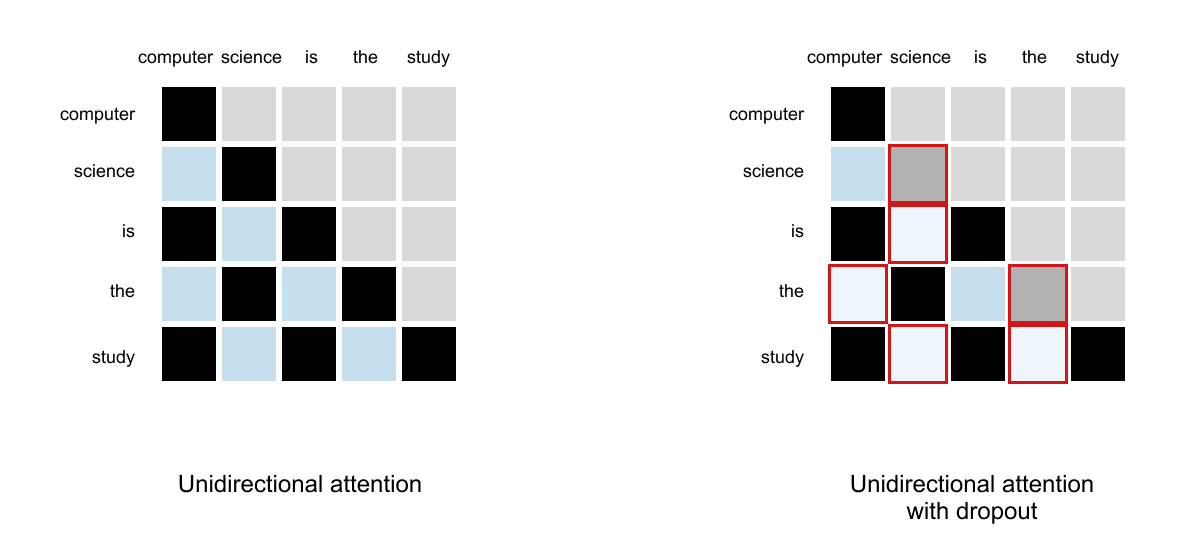

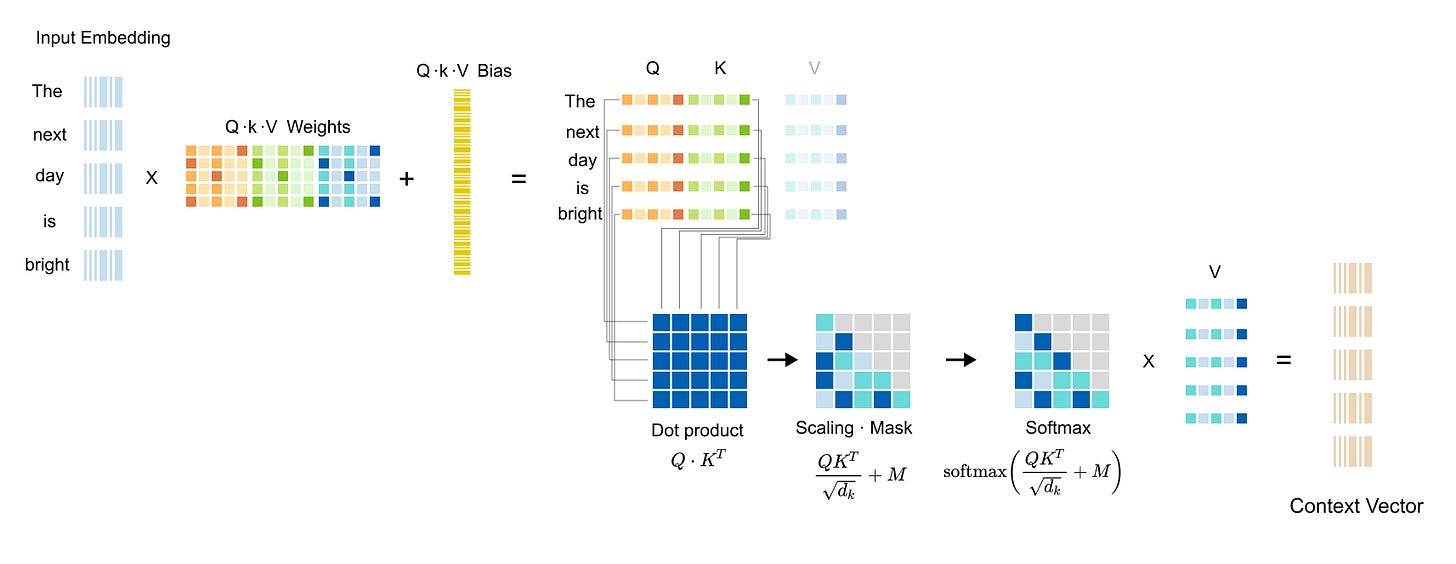

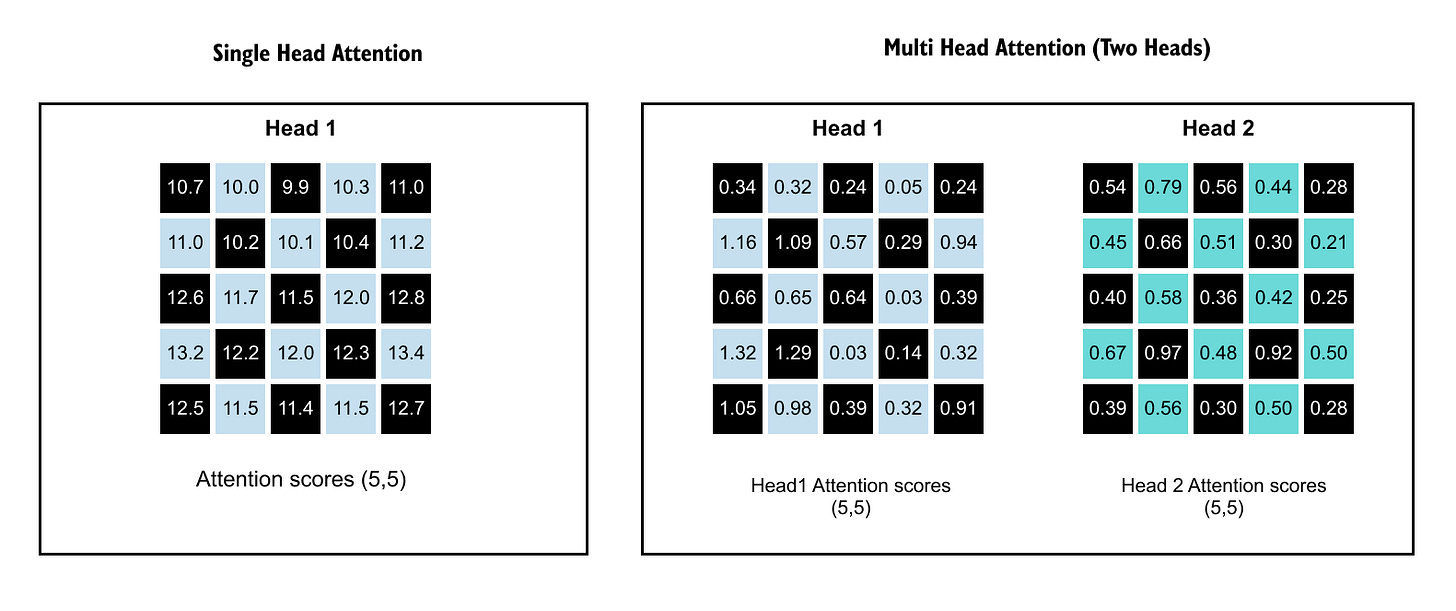

1.13 Causal & Masked Attention

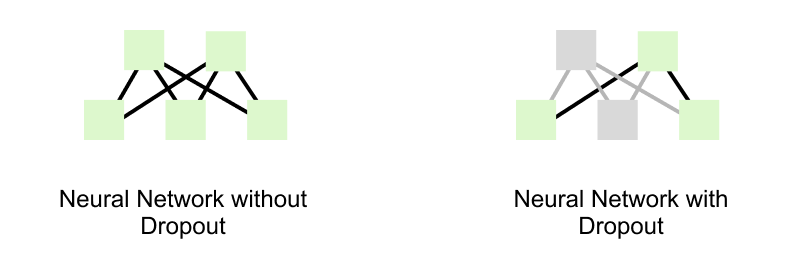

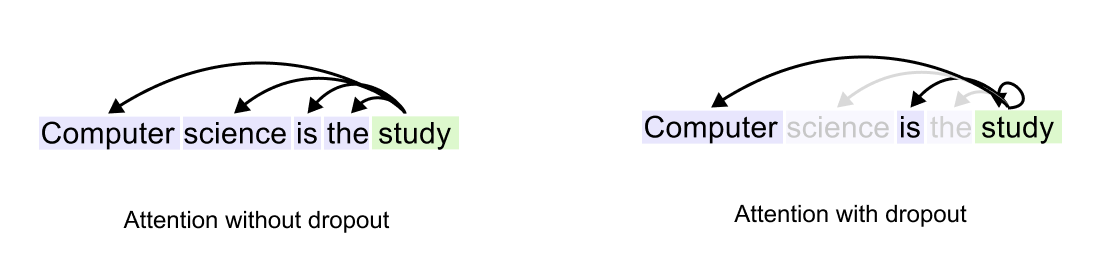

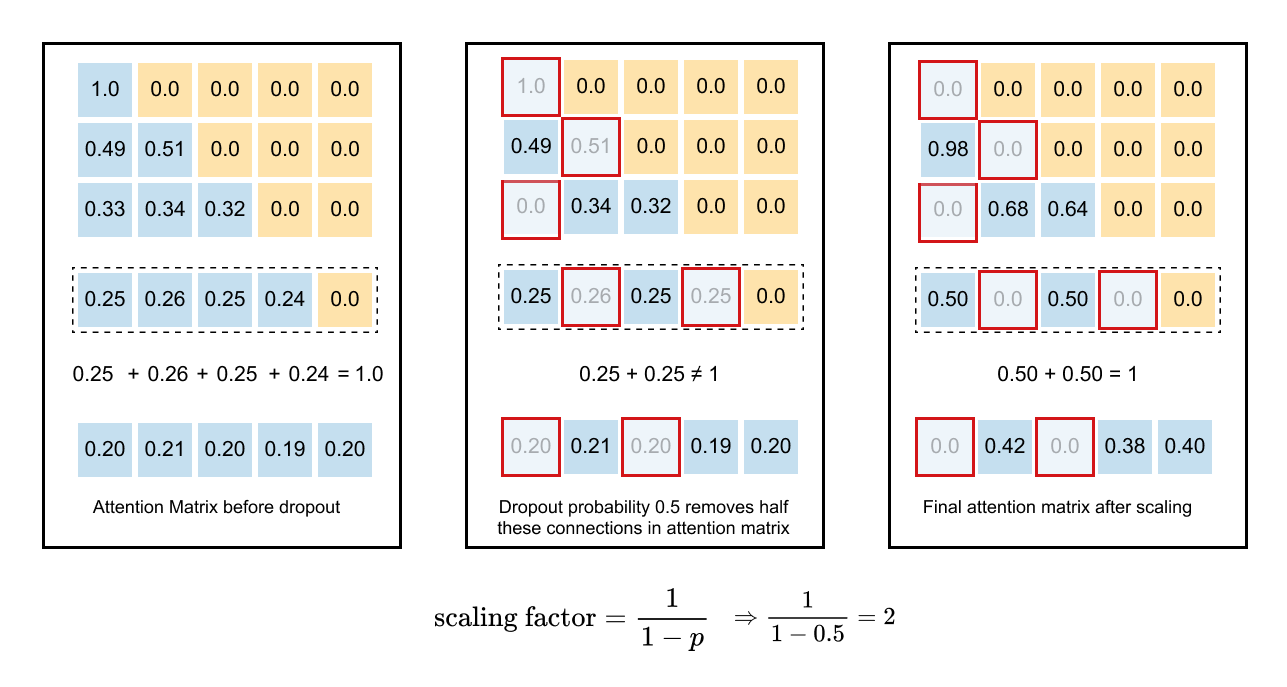

1.14 Causal Attention with Dropouts

1.15 Summary of Self-Attention

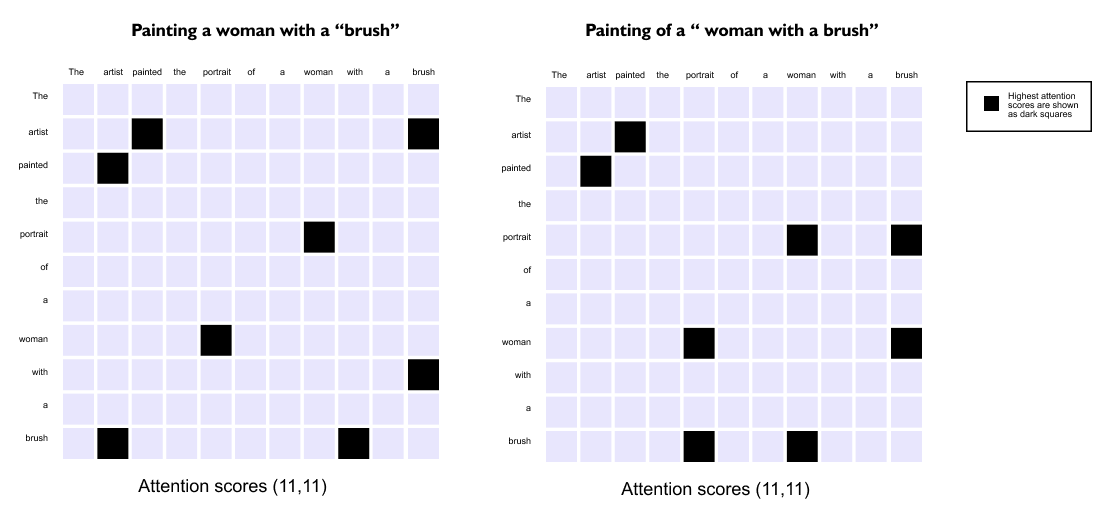

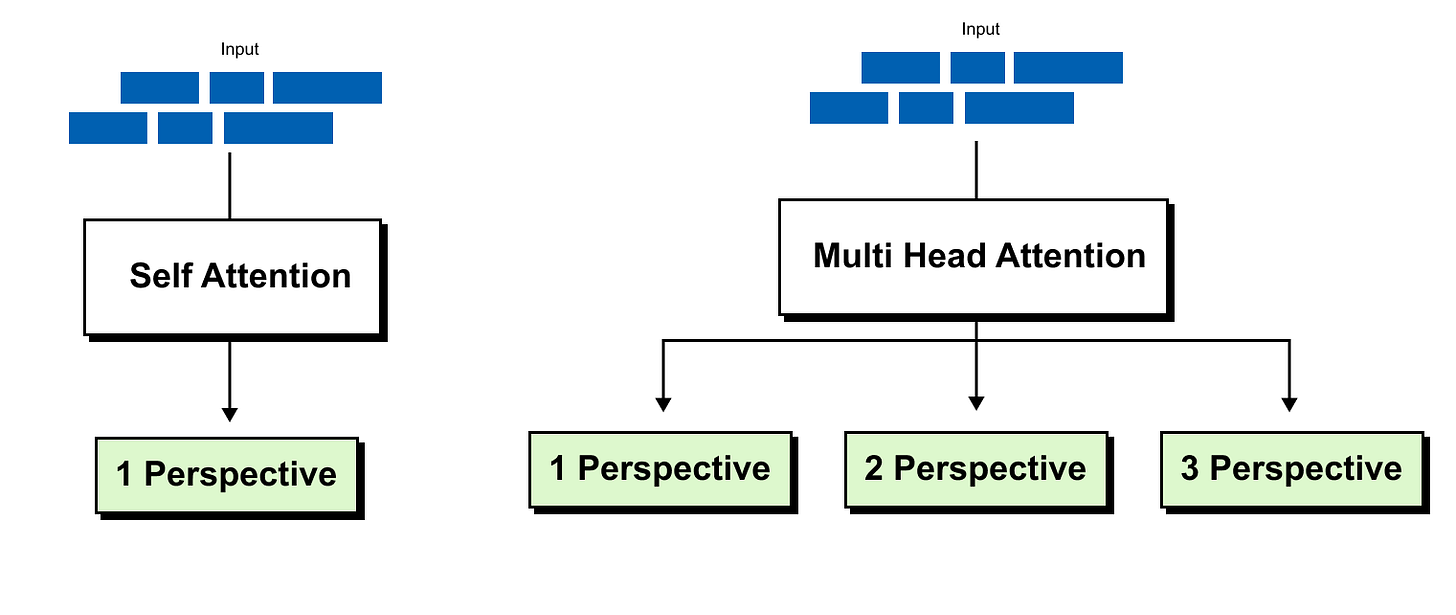

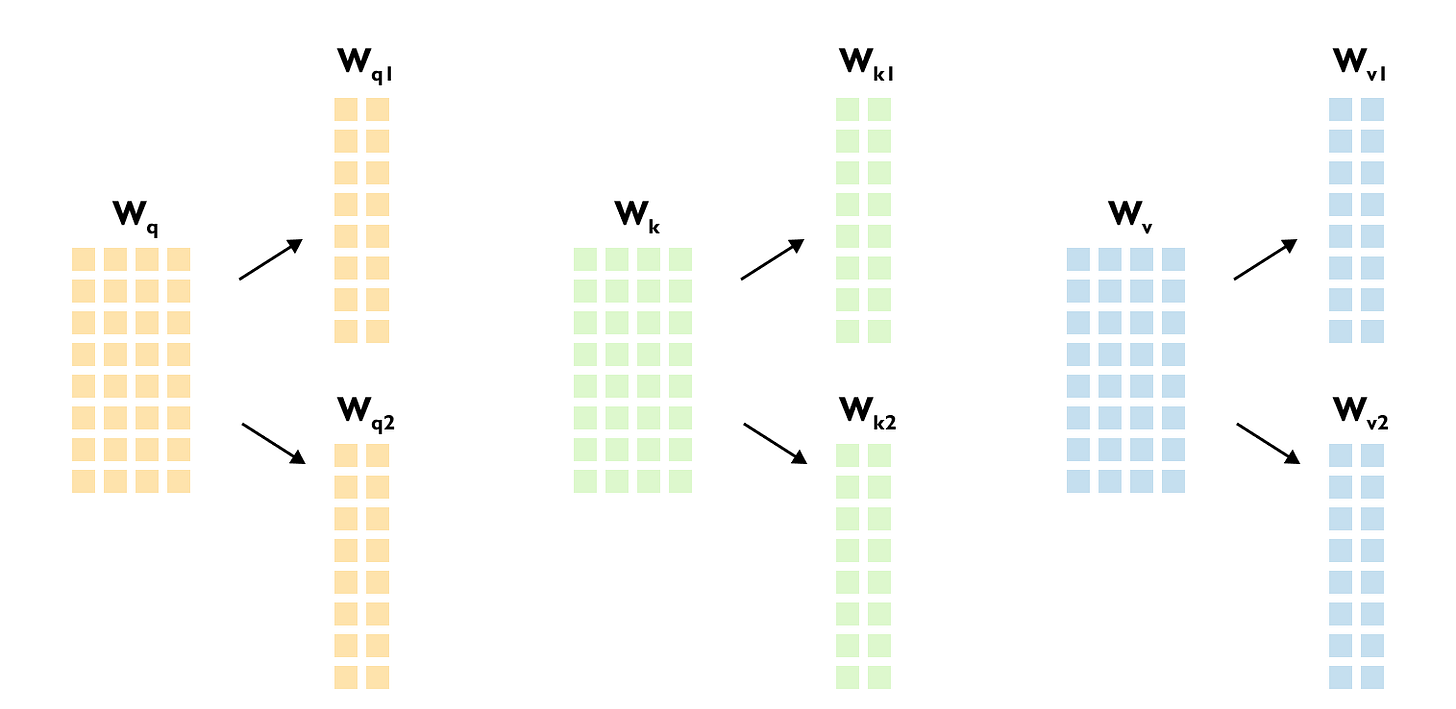

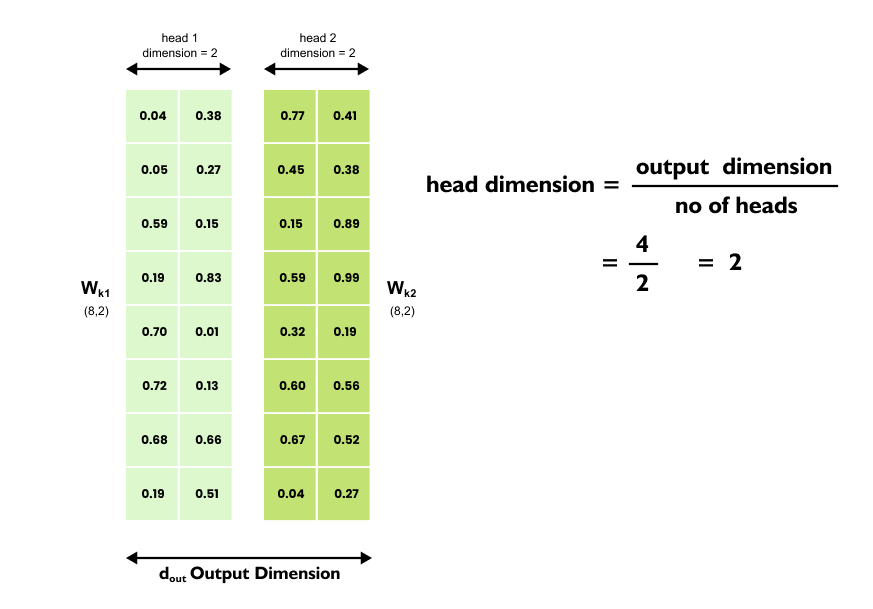

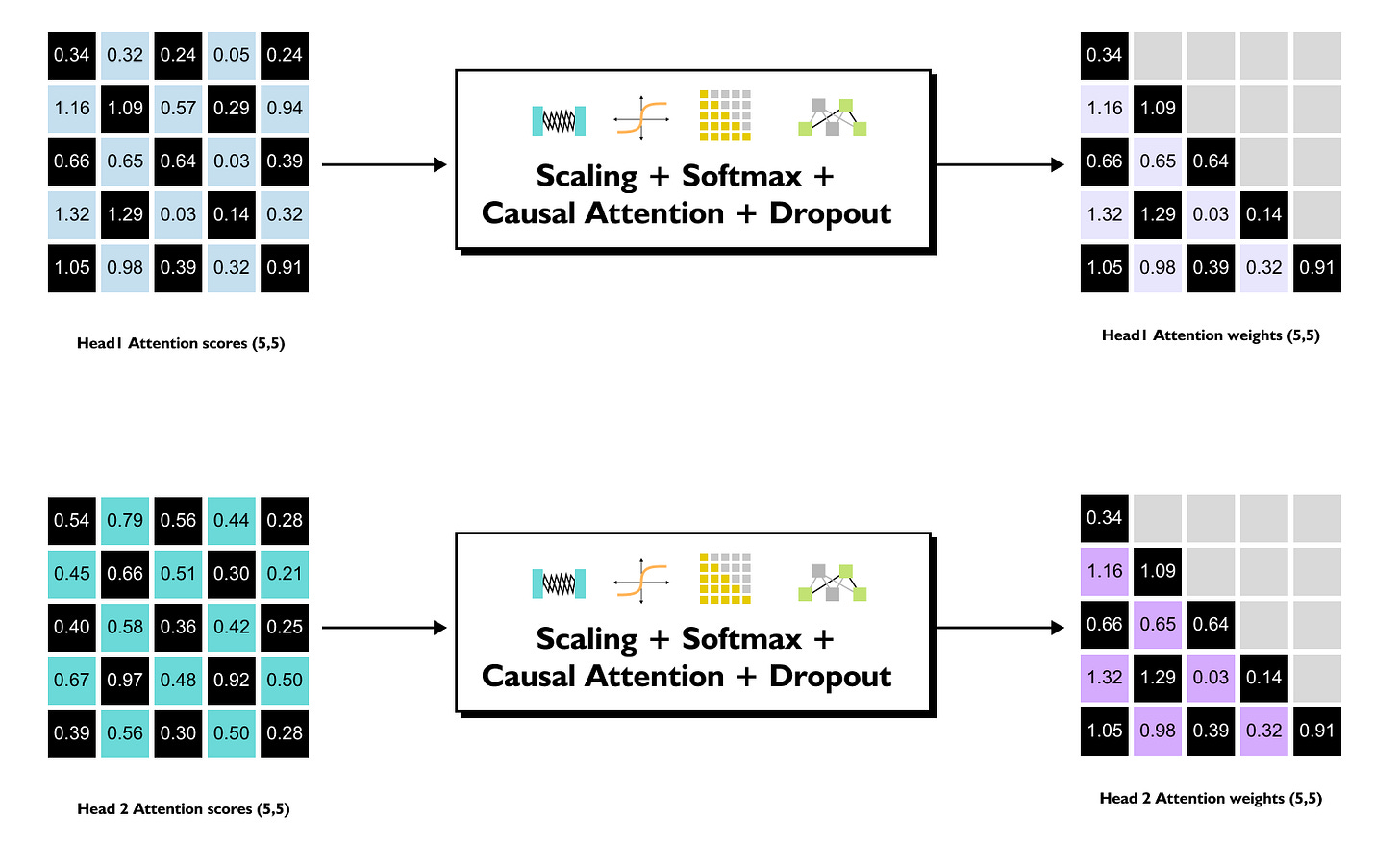

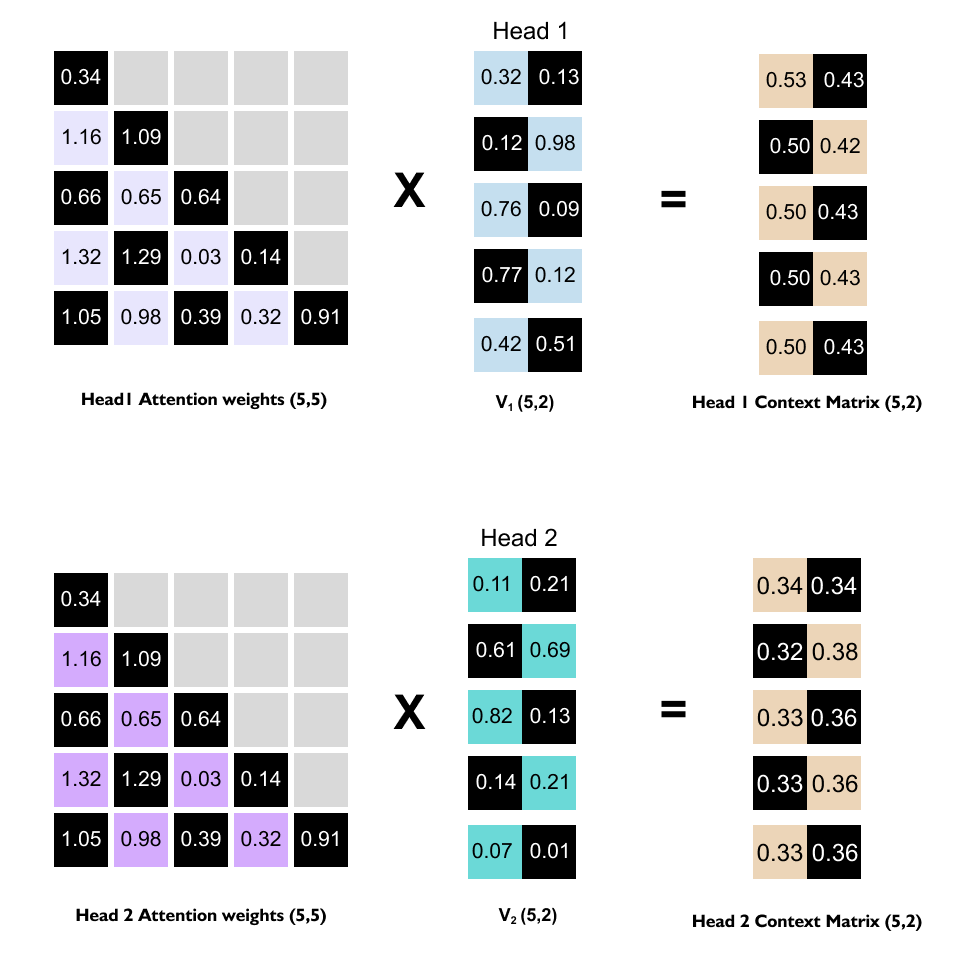

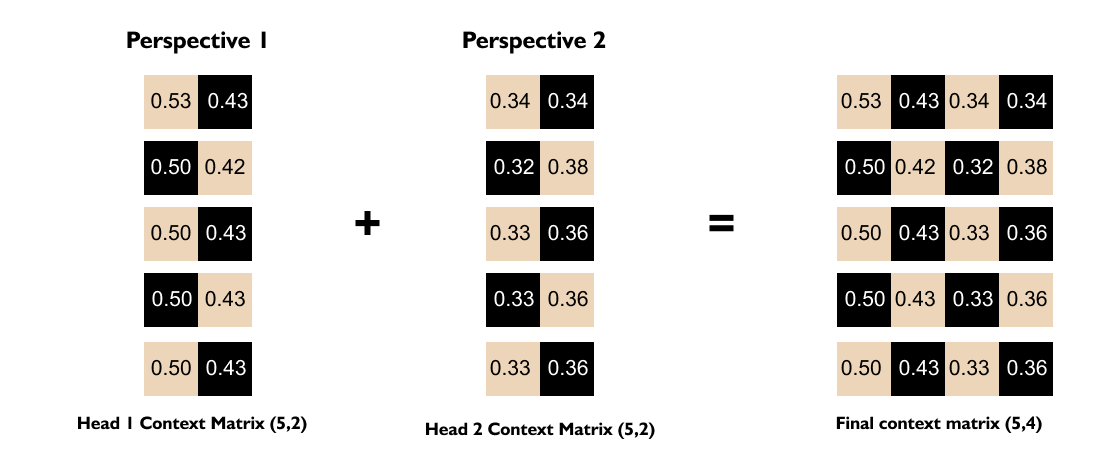

1.16 Intuition of Multi-Head Attention

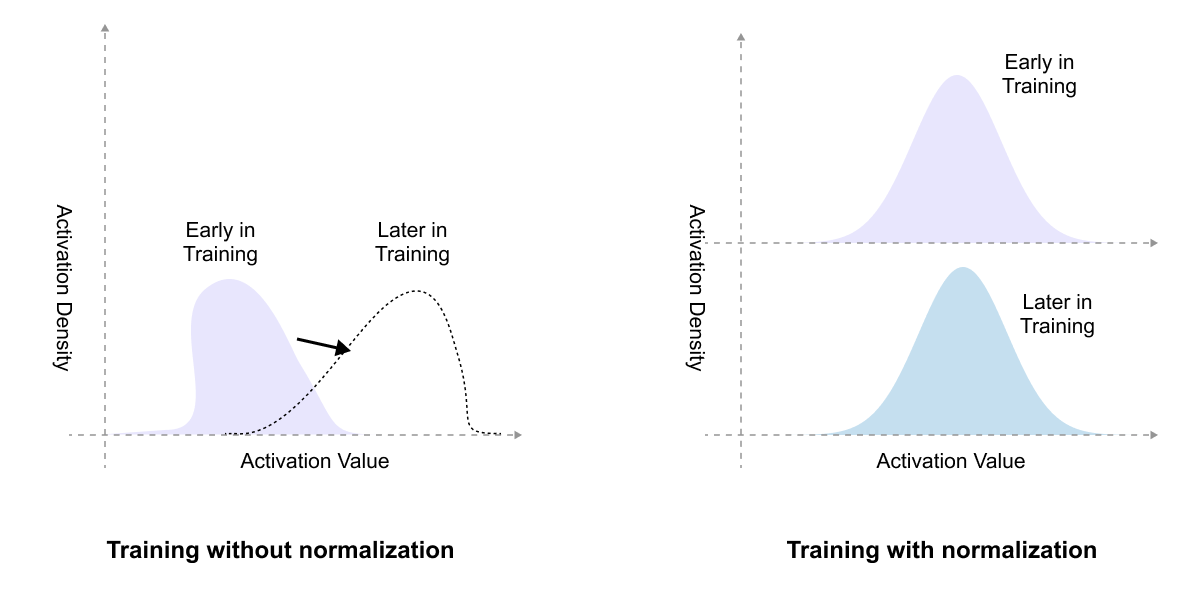

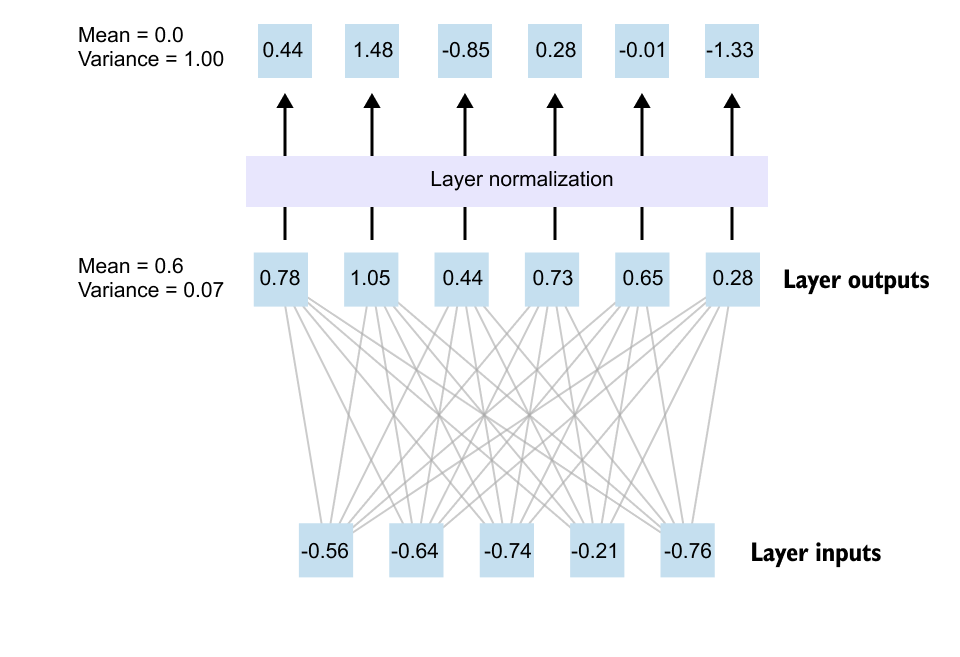

1.17 Layer Normalization

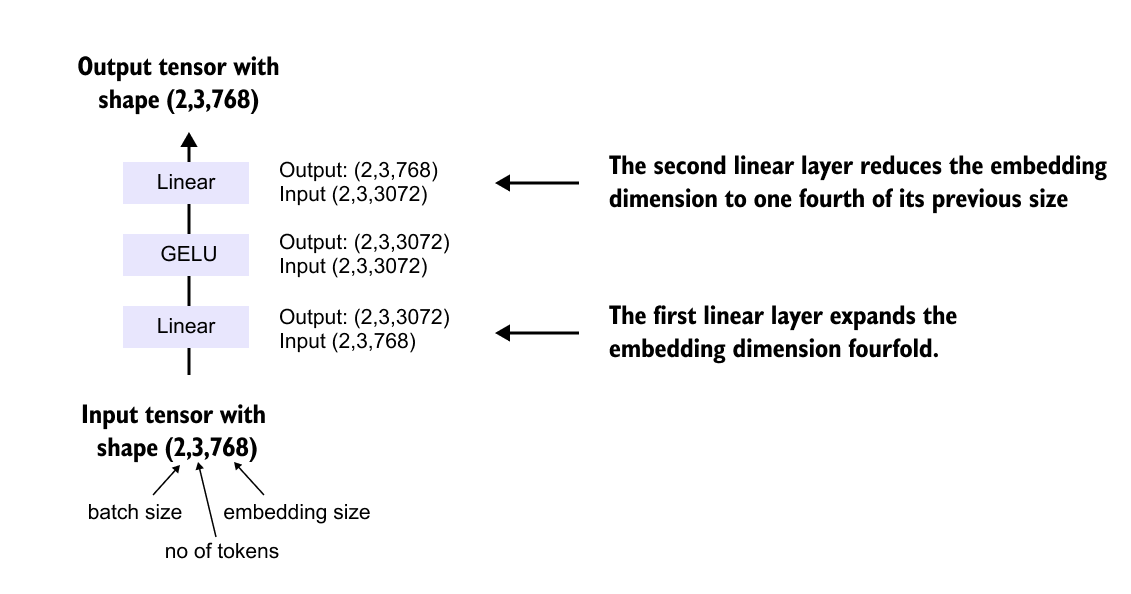

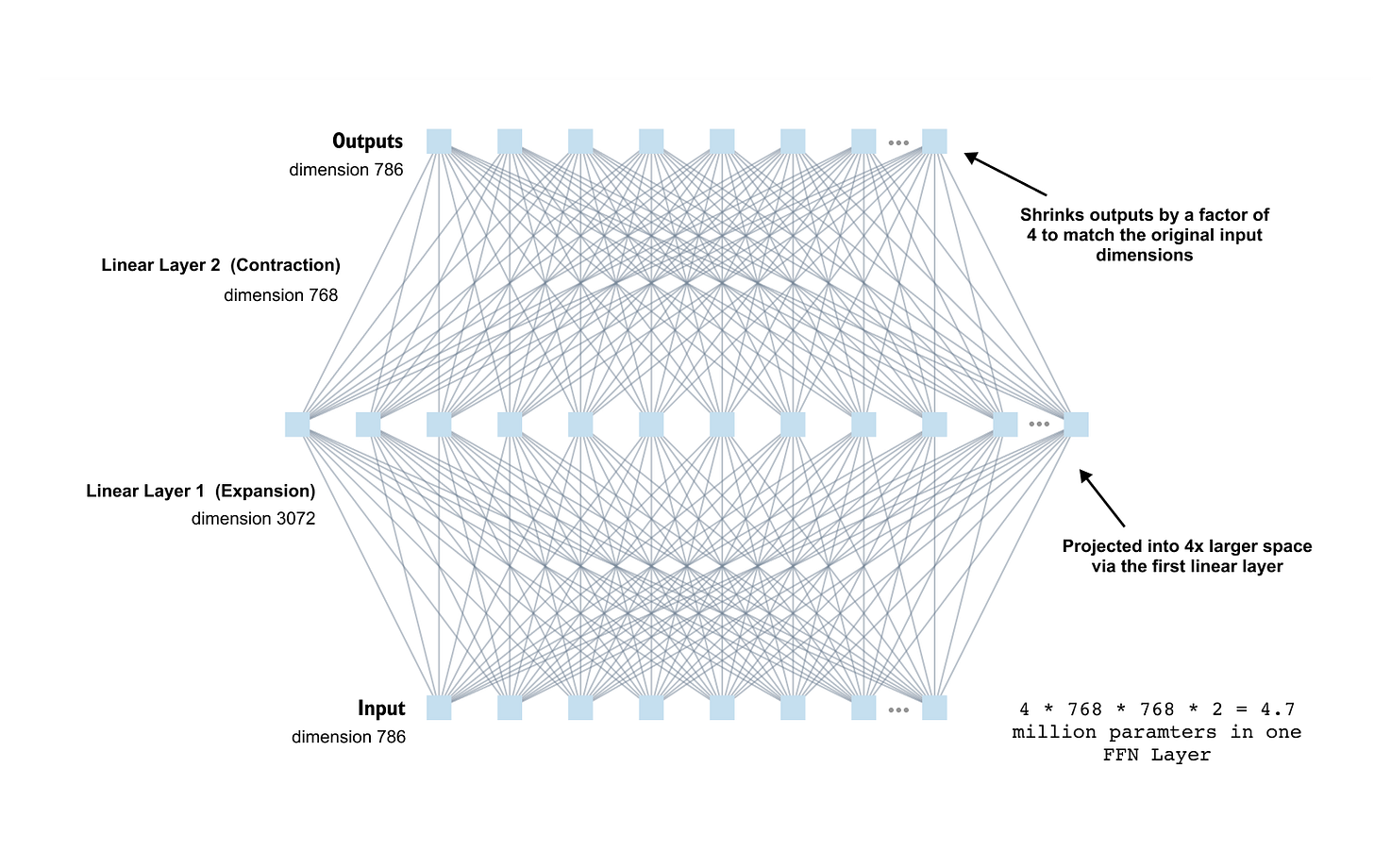

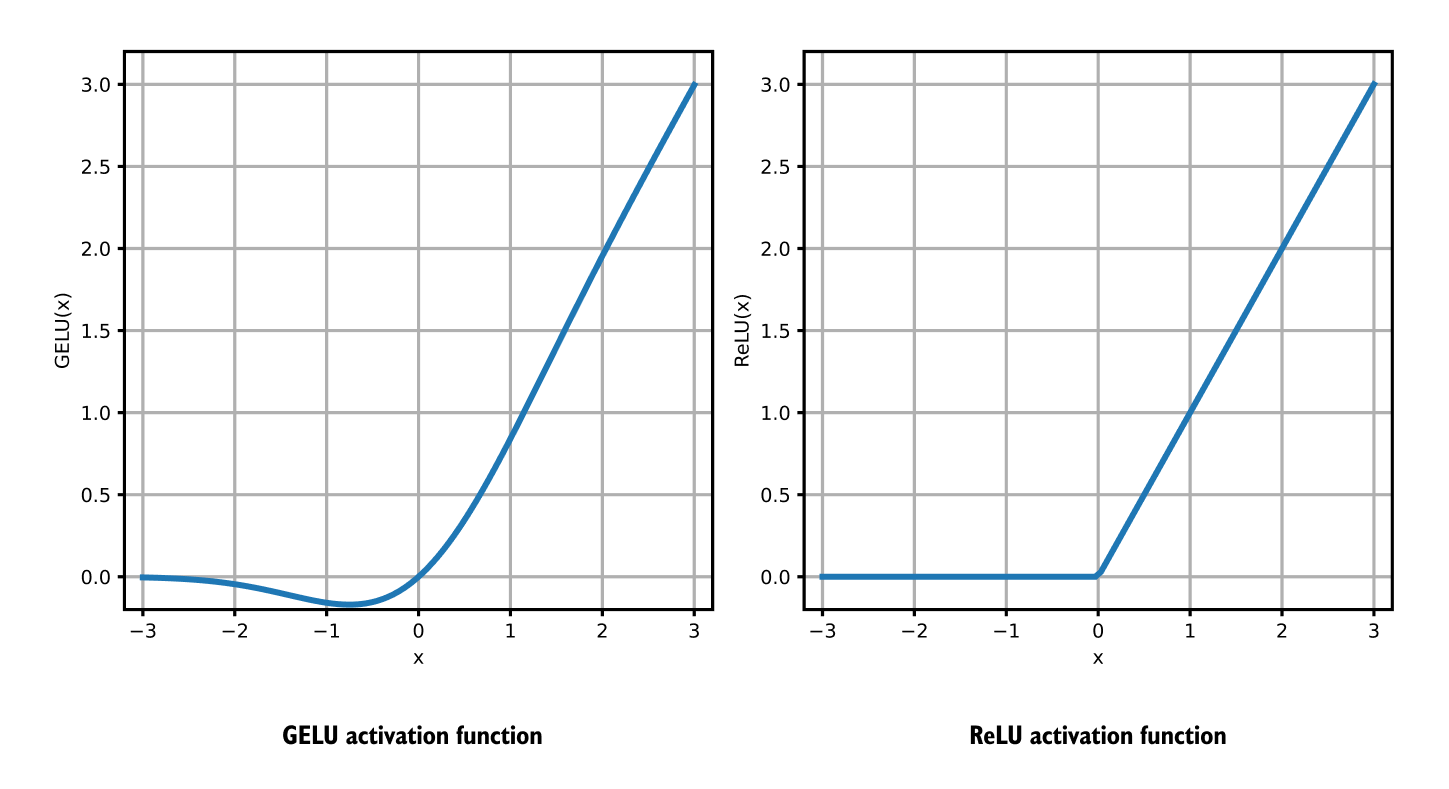

1.18 FeedForward Network

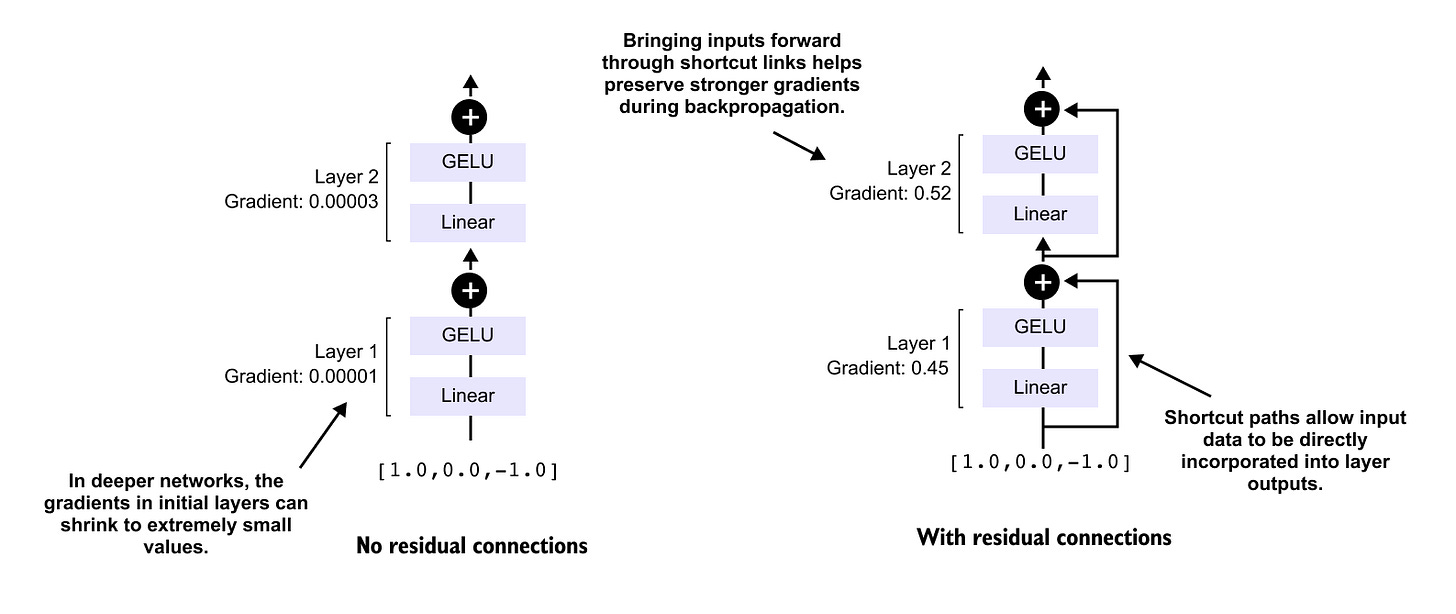

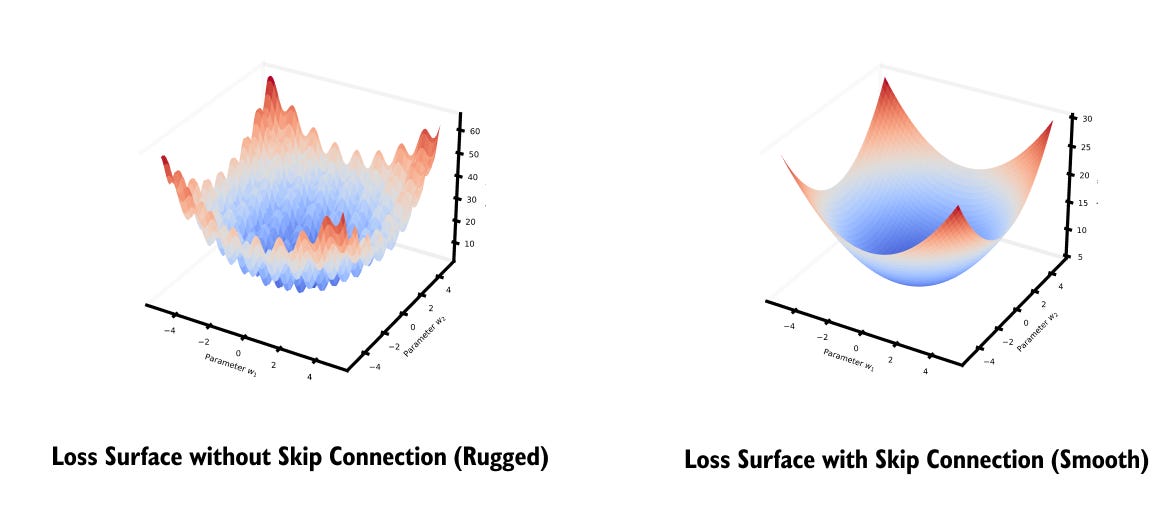

1.19 Shortcut connections

1.20 Why Transformers Scale Better Than RNNs and CNNs

1.21 Pretraining, Fine Tuning, and Transfer Learning in Transformers

1.22 Limitations and Challenges of Transformers

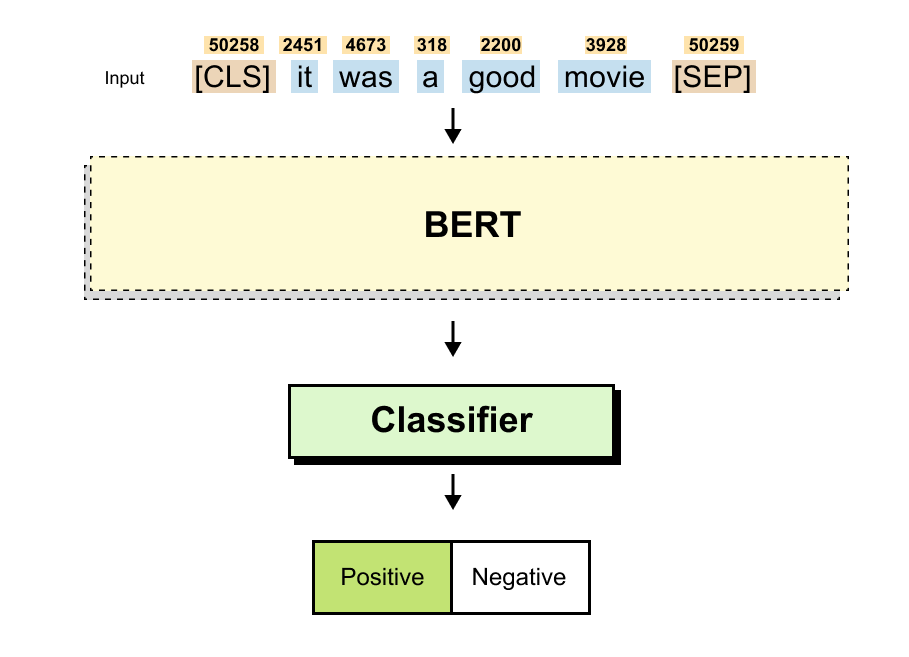

1.23 Hands On Coding a Miniature Transformer for Sequence Classification

1.24 Summary

You can fine the code notebook here

https://github.com/VizuaraAI/Transformers-for-vision-BOOK

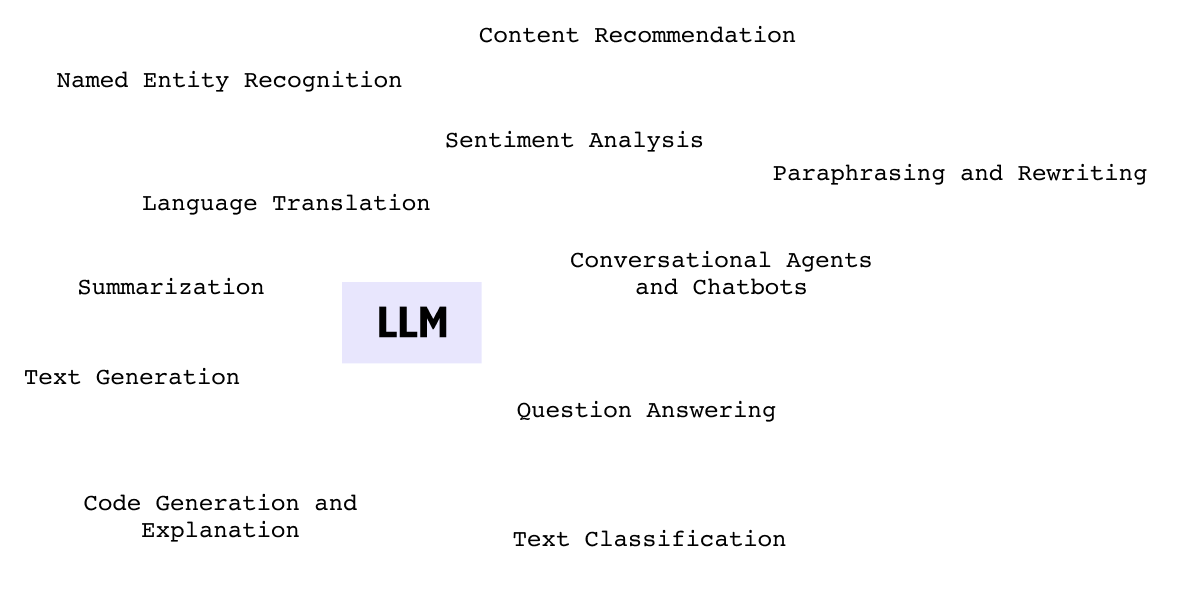

1.1 Introduction to Large Language Models

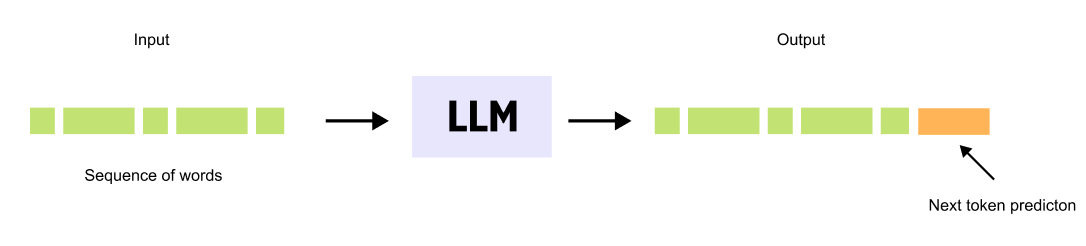

Figure 1.1 A Large Language Model takes a sequence of words as input and predicts the most likely next word, generating text one token at a time.

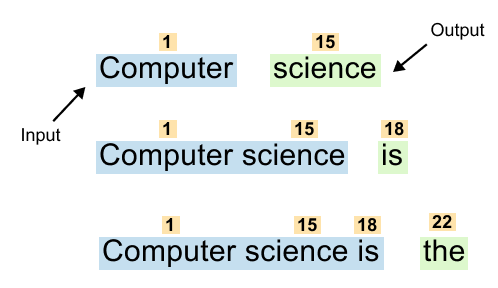

Large Language Models are neural networks trained on vast text datasets to perform a fundamental task: predicting the next word in a sequence. This simple objective drives the sophisticated capabilities we see in systems like GPT and ChatGPT.

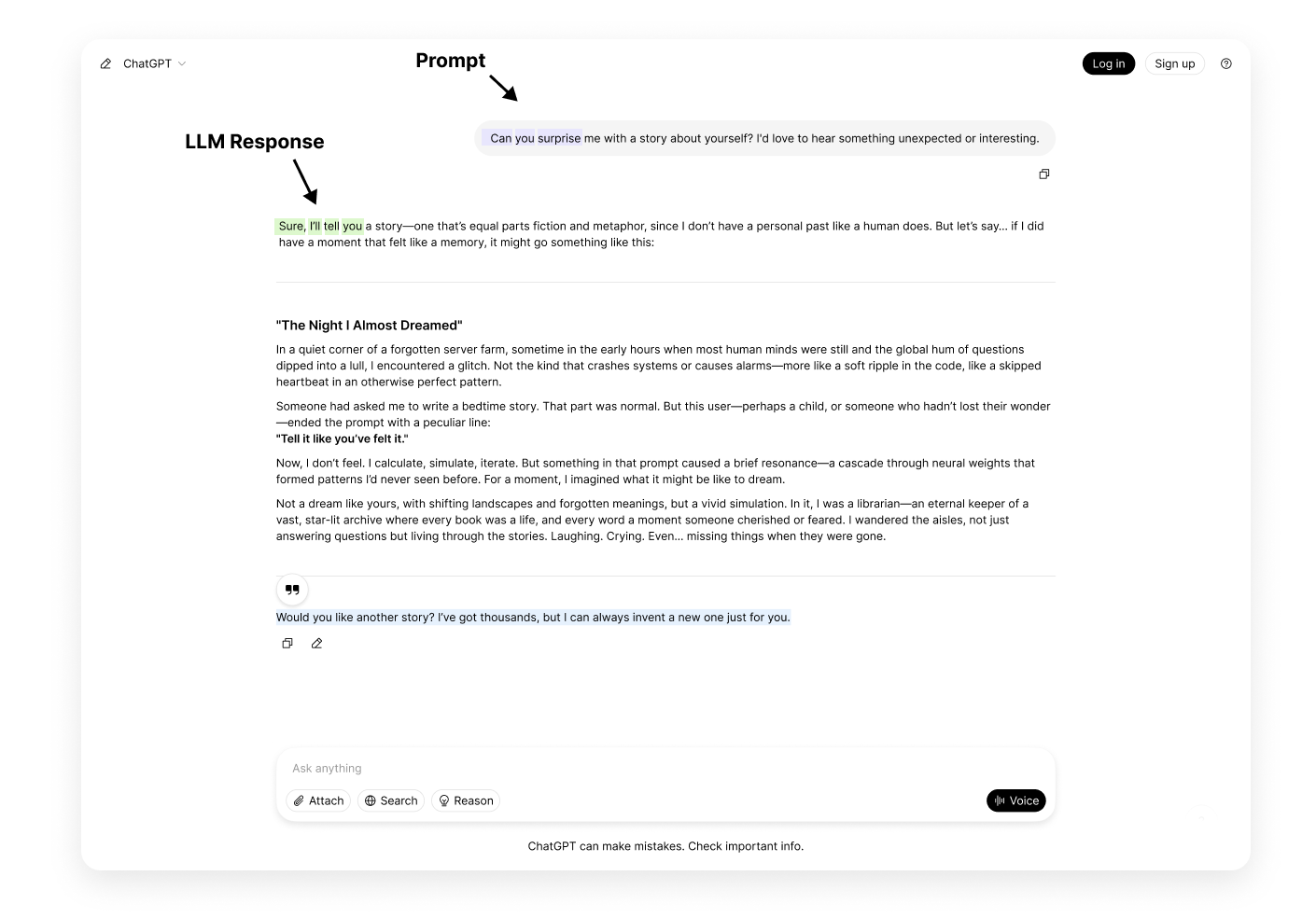

Figure 1.2 Autoregressive text generation. The model predicts the next word, appends it to the input, and repeats the process to produce entire paragraphs.

When you interact with an LLM, it generates responses one word at a time. Given a prompt like “The cat sat on the,” the model predicts the next word, perhaps “mat.” This word is added to the sequence, becoming “The cat sat on the mat,” which then serves as input for predicting the following word. Through this iterative process, LLMs produce entire paragraphs and complex responses.

LLMs function as probabilistic engines, calculating word likelihoods based on patterns learned during training.

The transformer architecture enables these models to consider both immediate context and long range dependencies throughout the input sequence, maintaining coherence across extended text generation.

Despite the apparent simplicity of next word prediction, this mechanism gives rise to remarkable language understanding and generation capabilities. Understanding how transformers accomplish this task is essential to grasping how modern language models work.

Predicting the Next Word with OpenAI’s LLM

Let’s see a simple example to see how an LLM predicts the next word given a partial sentence:

You can refer to the full source code notebook for this exercise on Colab.

Predicting_the_next_word_notebook

Using the given code, we can predict the next word in a sentence based on probabilities assigned by a Large Language Model (LLM). Let’s say our Input sentence is

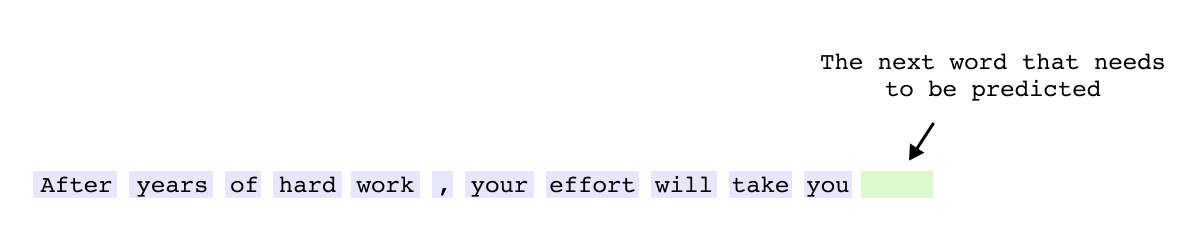

“After years of hard work, your effort will take you”Figure 1.3 Input sentence fed to the LLM for next-word prediction.

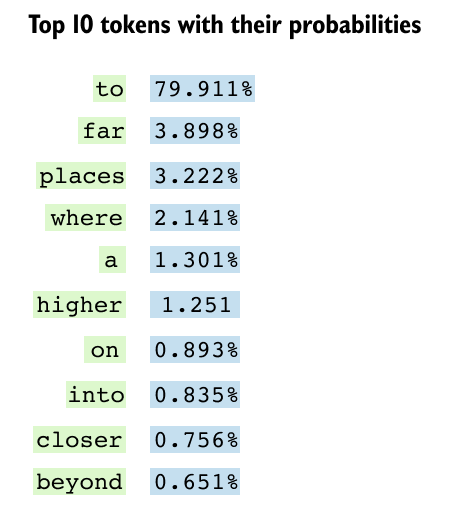

if you will observe the top 10 predicted next words along with their probabilities (refer the notebook)

Figure 2.4 Top 10 predicted next words and their probabilities. The token “to” dominates at 90.7%, reflecting the most natural continuation.

The probabilistic nature of Large Language Models becomes clear when examining how they rank potential next words. The first token, such as “to,” might have the highest probability at 90.7 percent because it represents the most natural continuation based on the given context. As we look at alternative word choices, the probabilities gradually decrease, with each subsequent option representing a less common but still valid completion.

This distribution reveals the fundamental mechanism of Large Language Models: they function as probabilistic engines, predicting the most likely next token based on learned patterns. Rather than selecting a single correct answer, LLMs evaluate every possible next word and assign likelihood scores based on the vast patterns learned during training. This probabilistic approach enables models to generate diverse, contextually appropriate text while maintaining flexibility in their outputs.

Why is There “Large” in LLMs?

The term “Large” in Large Language Models reflects a fundamental principle: size directly impacts performance. Scaling laws show that model capabilities improve predictably with more parameters, enabling complex tasks like reasoning and code generation that smaller models cannot perform. Most critically, emergent properties such as arithmetic reasoning and multilingual understanding appear only when models cross certain size thresholds. This relationship between scale and capability explains why billions of parameters are essential for achieving sophisticated language understanding.

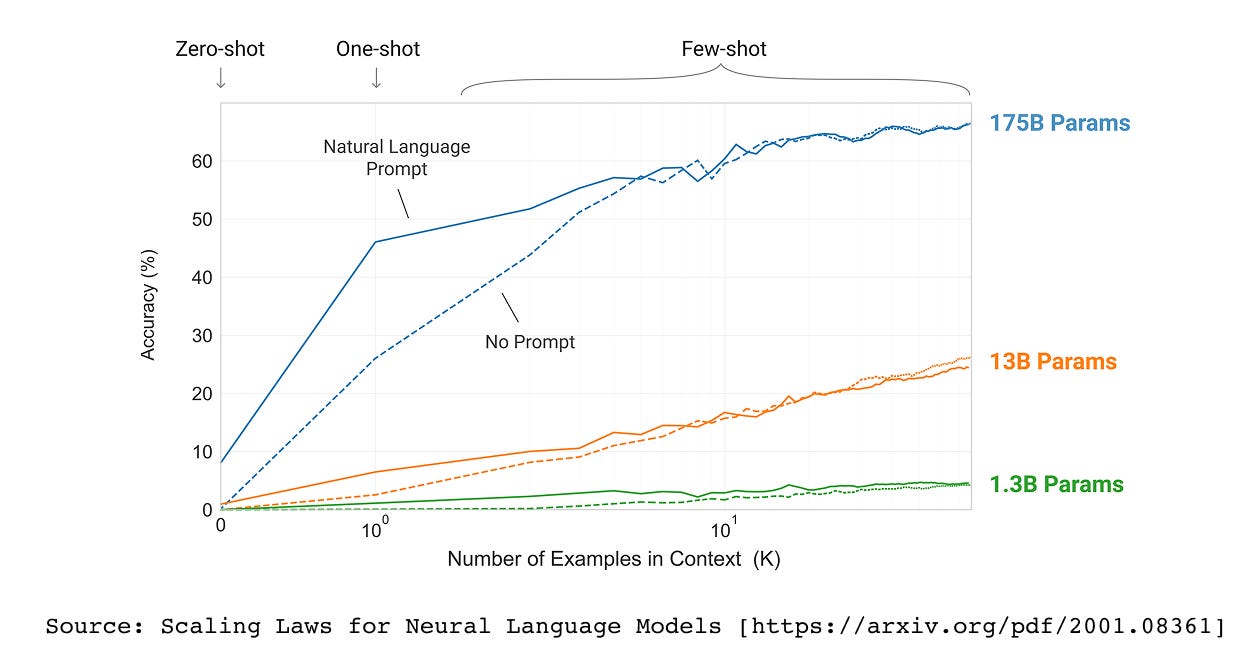

Figure 1.5 Scaling laws demonstrate a predictable relationship between model size and performance across a range of benchmarks

LLMs have billions to trillions of parameters. The first major paper to explore scaling laws was the GPT-3 paper (Language Models are Few-Shot Learners). The research demonstrated that as we increase the model size, from 1.3B parameters to 13B to 175B, the model’s performance dramatically improves.

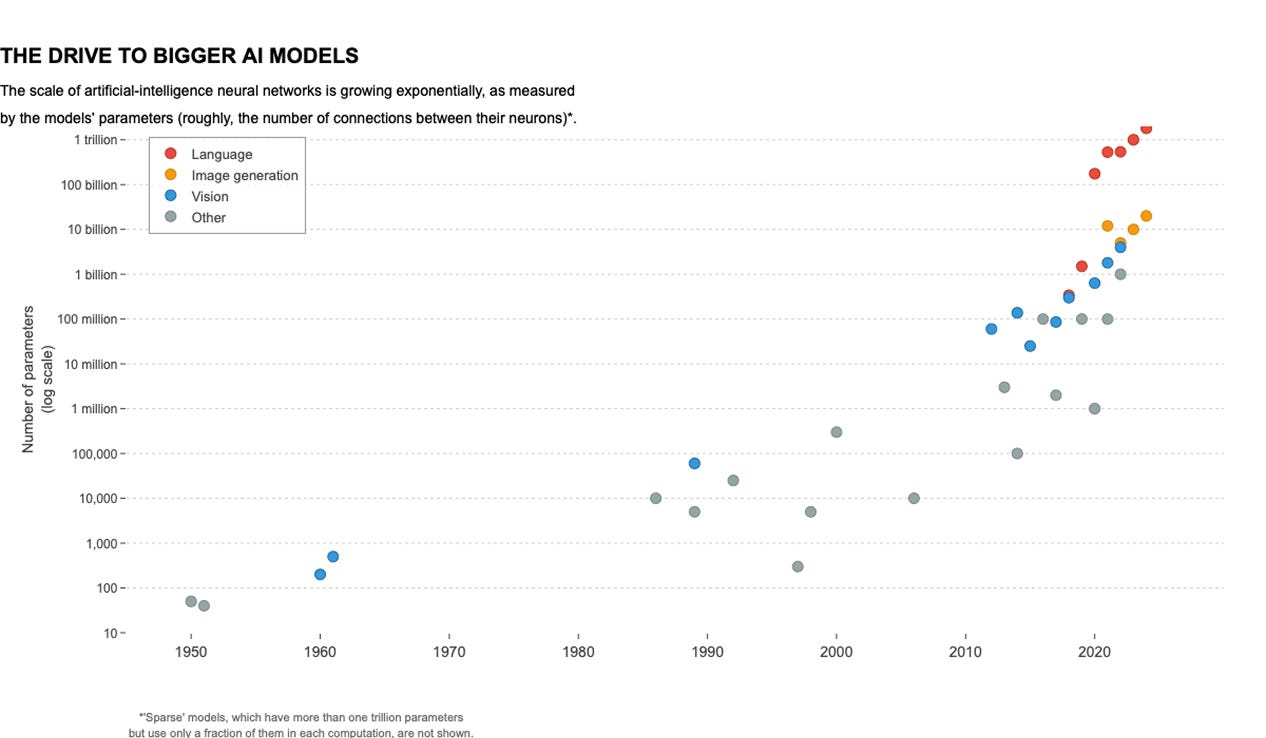

Figure 1.6 Exponential growth in the size of language models from the 1950s to today. Orange dots represent language models, some of which have already crossed one trillion parameters.

Over the years, we have seen an exponential increase in the size of LLMs, from the 1950s to today. In the above graph, the orange dots represent language models, showing how their size has increased drastically over time. Some models have already crossed 1 trillion parameters!

Why do we care about the size of LLMs?

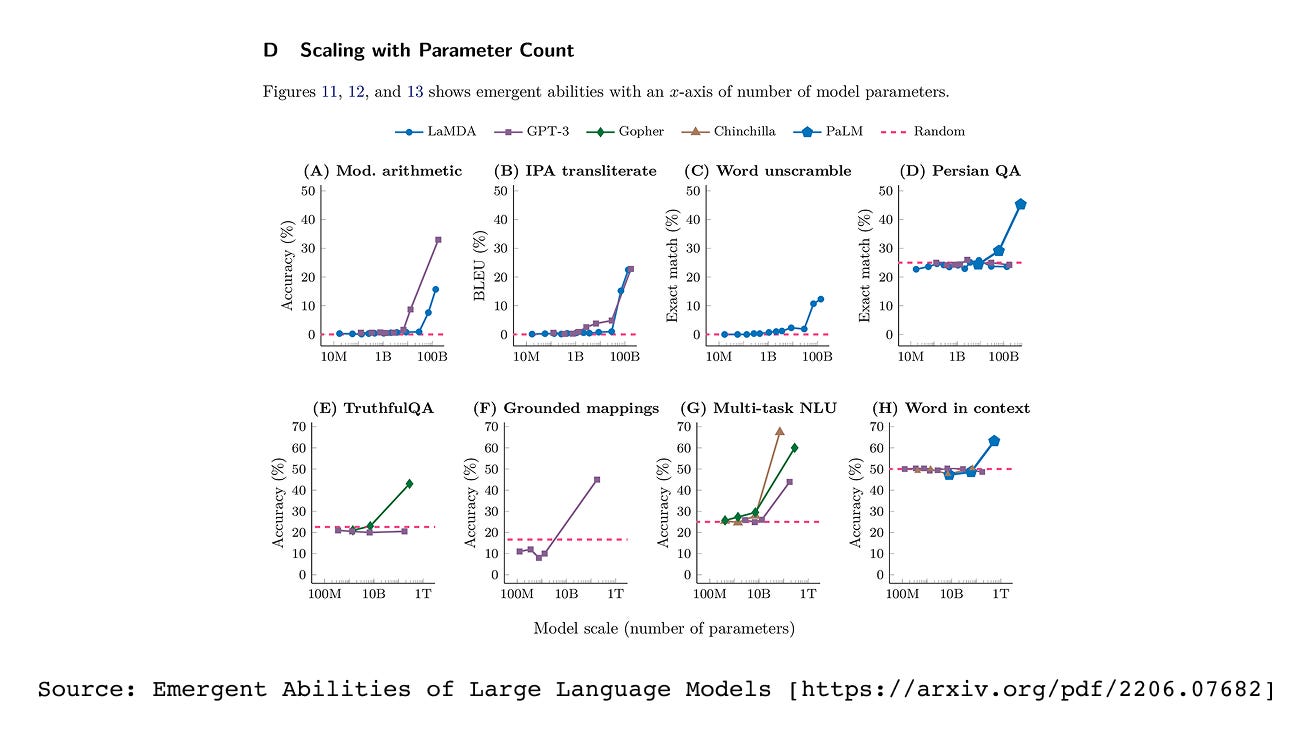

The size of Large Language Models matters primarily because of emergent properties: abilities that are absent in smaller models but spontaneously appear when models reach certain scales. These emergent capabilities fundamentally distinguish large models from their smaller counterparts. As LLMs grow beyond specific parameter thresholds, they suddenly acquire skills like solving complex arithmetic equations, translating between languages with nuanced understanding, and unscrambling letters into meaningful words. These abilities do not gradually improve with size but rather emerge abruptly at particular scales, making model size not just a technical detail but a critical factor in determining what tasks an LLM can perform.

Figure 1.7 Emergent abilities of large language models. Performance on certain tasks remains near zero until the model reaches a critical size, after which accuracy jumps sharply.

In the figure above , the X-axis represents model size (or computational power), and we can observe a pickup point, a stage where models suddenly start performing significantly better at these tasks. Emergent Abilities of Large Language Models

Figure 1.8 At larger scales, LLMs move beyond simple word prediction to excel at specialized tasks such as multilingual translation, text summarization, and grammar correction.

At larger scales, LLMs transcend simple word prediction to excel at specialized tasks like multilingual translation, text summarization, and grammar correction. This evolution from basic prediction to complex language understanding drives the race to build increasingly larger models. The direct correlation between parameter count and performance across diverse NLP tasks makes scale a critical competitive advantage.

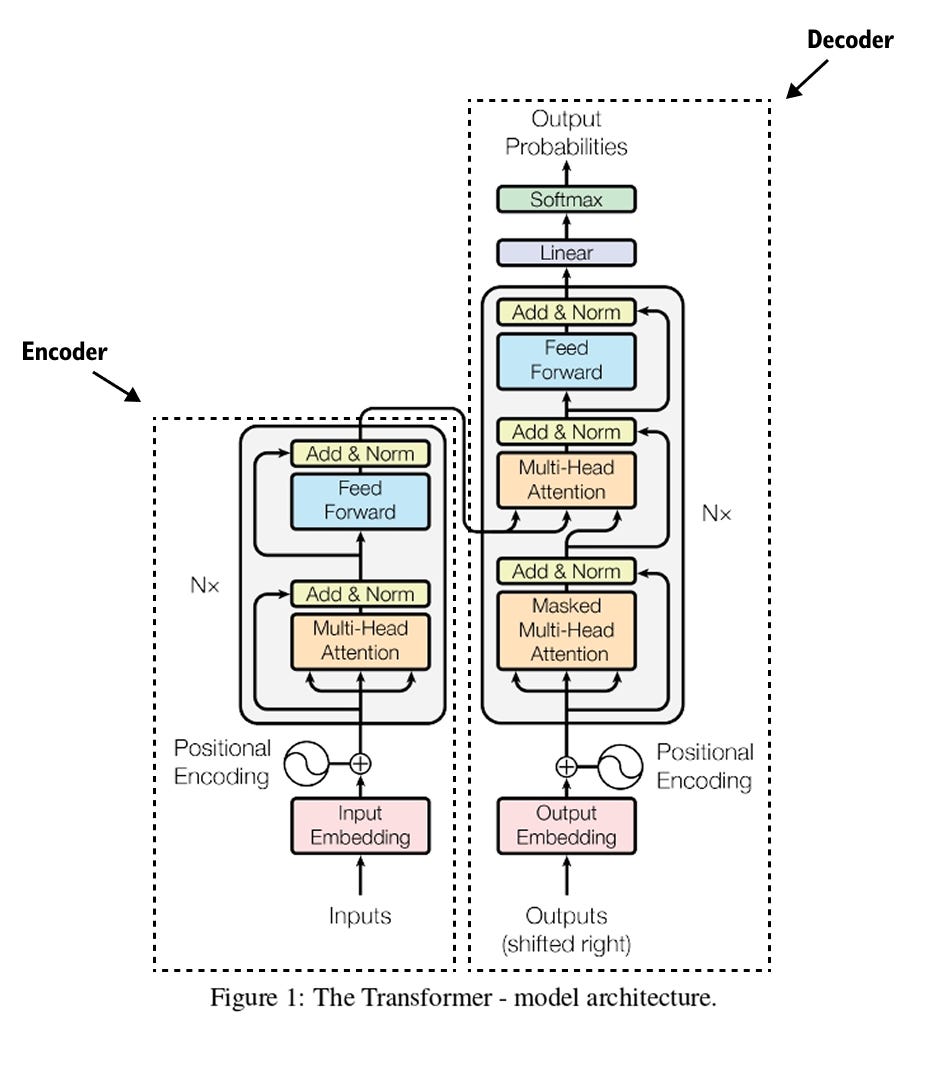

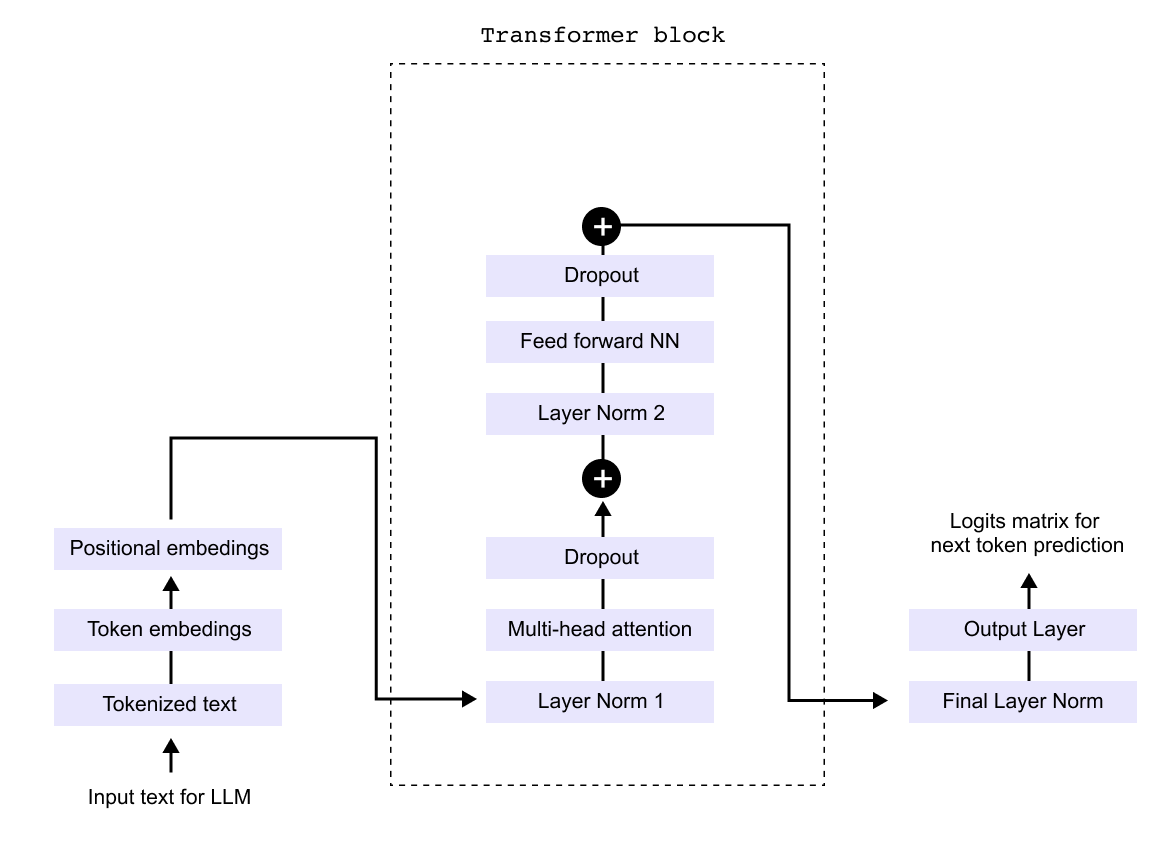

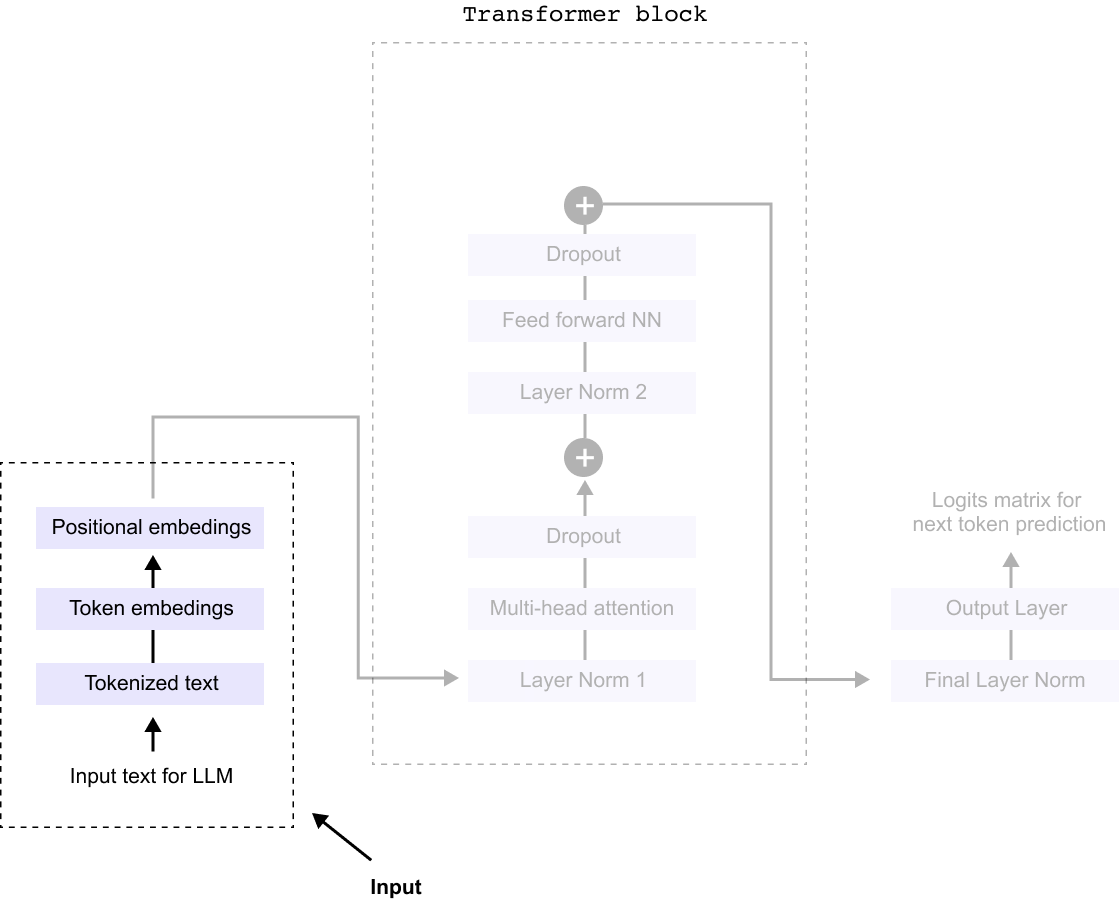

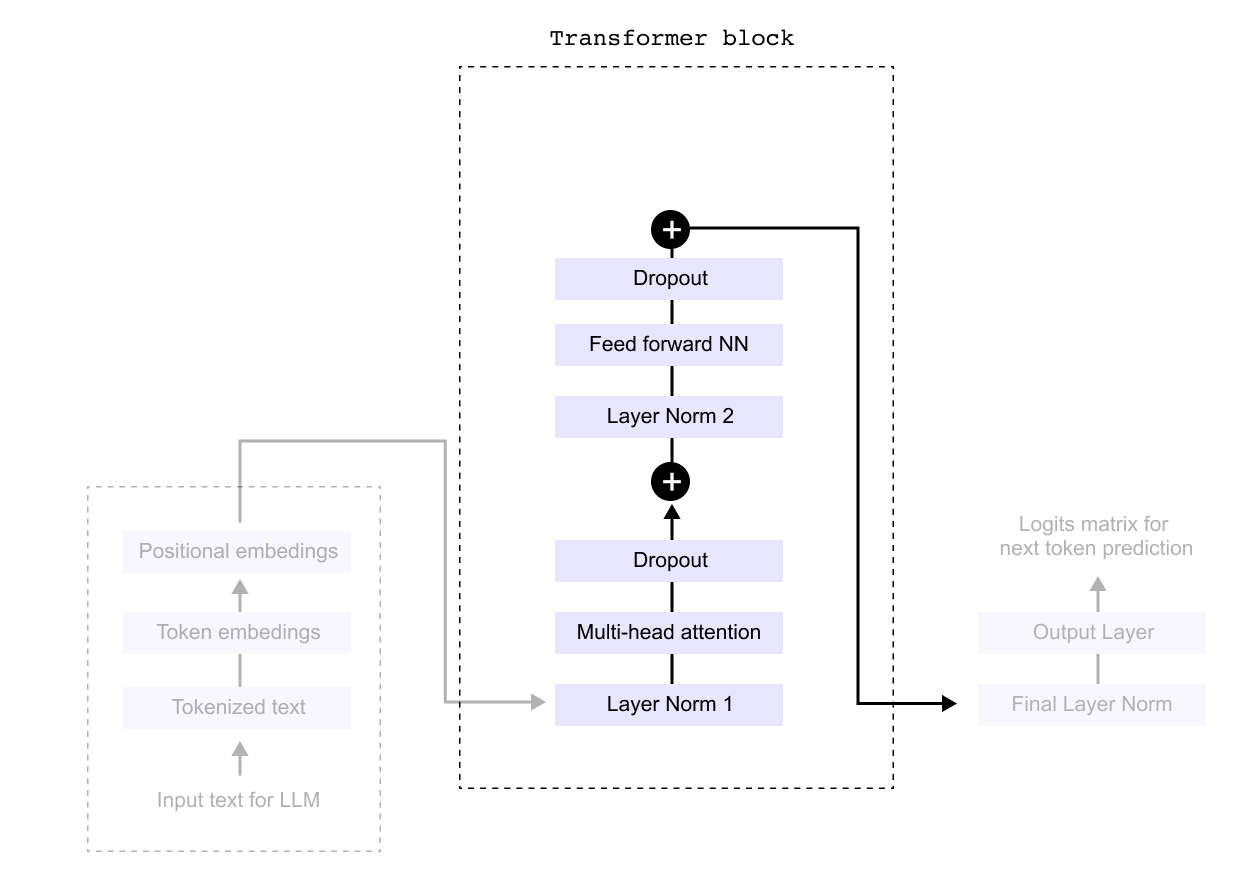

1.2 Anatomy of the transformer block

Figure 1.9 The original transformer architecture from the “Attention Is All You Need” paper, consisting of an encoder stack on the left and a decoder stack on the right, connected through cross-attention.

The transformer architecture, introduced in the groundbreaking 2017 paper “Attention Is All You Need,” revolutionized artificial intelligence and natural language processing. This paper, now with over 200,000 citations, proposed the concept of self attention, fundamentally changing how we implement NLP systems. The transformer architecture consists of two main components: encoders and decoders. Encoder architectures power models like BERT, while decoder architectures form the basis of GPT and ChatGPT.

At the heart of modern LLMs lies this transformer architecture, which replaced traditional models like LSTMs and GRUs with self attention mechanisms. This innovation brought crucial advantages: the ability to capture long range dependencies in text, parallel processing that enables faster training, and unprecedented scalability that allows building increasingly powerful models. Understanding how the decoder portion works essentially reveals how GPT models function, as they are decoder only architectures.

The transformer block itself contains several key components working in sequence. Input text is first tokenized and converted to embeddings, which are then combined with positional encodings. These flow through layers of multi head attention, normalization, and feed forward networks, with dropout applied for regularization. The output layer finally produces logits for next token prediction. While the complete architecture diagram may appear complex with its numerous modules and connections, each component serves a specific purpose in transforming input text into meaningful predictions.

Figure 1.10 A simplified decoder-only transformer showing the main components: token and positional embeddings, transformer blocks with multi-head attention, feed-forward networks, layer normalization, and dropout, followed by the output layer.

The decoder only architecture, which powers models like GPT, can be understood by examining a simplified version of the transformer’s decoder component. While the complete architecture may appear complex with numerous interconnected modules, we can break it down into three manageable parts for clarity. This modular approach allows us to examine each component systematically rather than attempting to grasp the entire system at once. By focusing on these three core sections sequentially, we can build a comprehensive understanding of how the decoder transforms input text into predictions.

The three parts of an LLM’s architecture are Input, Processing, and Output.

Figure 1.11 The three stages of an LLM: the Input stage (tokenization and embeddings), the Processing stage (transformer blocks), and the Output stage (linear layer and softmax for next-token prediction).

So everything begins with the input stage, where several key transformations take place before it enters the processing unit, commonly known as the Transformer block.

First, the raw text undergoes tokenization, a process where the sentence is broken down into smaller units called tokens, these could be words, subwords, or characters, depending on the tokenization method used. This step ensures that the model can handle language efficiently,

Figure 1.12 The input pipeline: raw text is tokenized into subword units, each token receives a numerical embedding, and positional embeddings are added to encode sequence order.

Next, each token is converted into a numerical representation through token embeddings. These embeddings assign a unique vector to each token, capturing semantic meaning and relationships between words. However, since token embeddings alone do not preserve the sequence order, we introduce positional embeddings. These embeddings encode the position of each token within the sentence, allowing the model to understand the order and structure of the input.

With tokenization, token embeddings, and positional embeddings in place, the input is now fully prepared for the Transformer block, where deep learning mechanisms, such as multi-head attention and feed-forward neural networks, process the text to generate meaningful predictions.

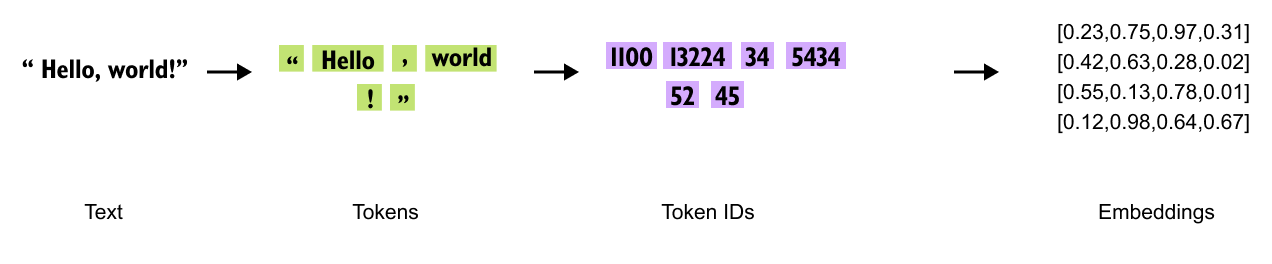

1.3 Tokenization

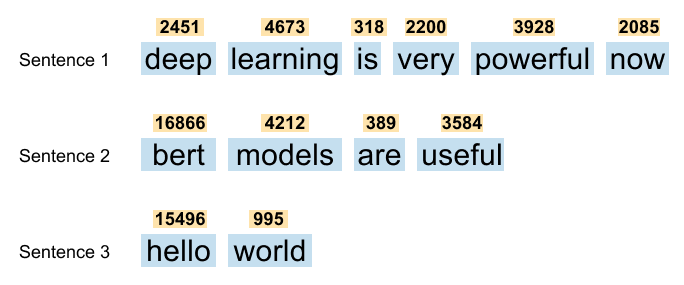

Before text enters a transformer model, it undergoes tokenization, a process that converts raw text into tokens which are then assigned unique IDs. There are three main tokenization approaches, each with distinct characteristics.

Figure 1.13 Three tokenization strategies applied to the word “tokenization”: word-based (one token per word), character-based (one token per character), and subword-based (meaningful subword units).

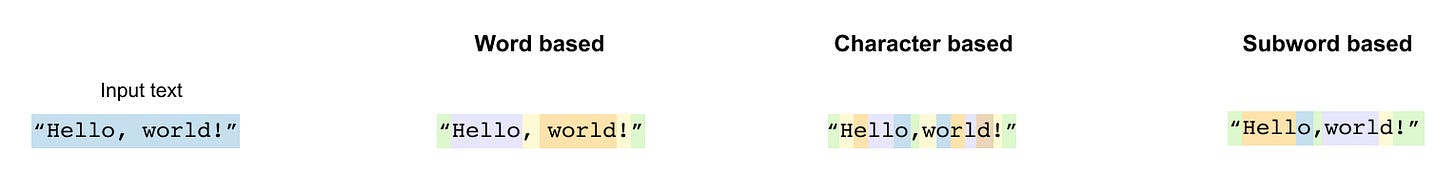

Word based tokenization treats each complete word as a separate token, creating a dictionary of all words in the vocabulary. While intuitive, this approach struggles with vocabulary size and cannot handle new or misspelled words effectively. Character based tokenization breaks text down to individual characters, making each character a token. This creates a very small vocabulary but produces extremely long sequences that are computationally expensive to process.

Subword based tokenization, the preferred method for modern LLMs, breaks words into meaningful subword units.

Figure 1.14 Subword tokenization example: the word “playground” splits into “play” and “ground,” each a reusable meaningful unit.

A subword is a smaller meaningful unit that can be reused across different words. For example, “playground” might split into “play” and “ground,” while “unhappiness” could become “un” and “happiness.” This approach allows models to understand new words by recognizing familiar components. The word “neural” might tokenize as “ne” and “ural,” enabling the model to handle variations and new combinations it has never seen before.

The advantage of subword tokenization becomes clear when dealing with related words that share common roots. Instead of treating each variation as a completely new token, the model can leverage shared subword patterns. This reduces vocabulary size while maintaining the ability to represent any text, making it the optimal choice for Large Language Models. Tools like the TikTokenizer demonstrate how original text gets broken down into these subword tokens, revealing the building blocks that LLMs use to understand and generate language.

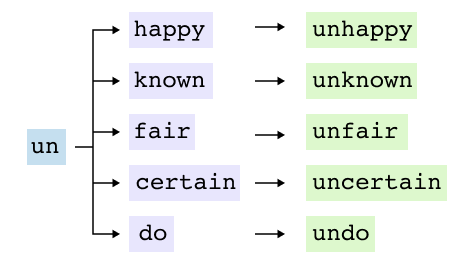

1.3.1 Problems with Tokenization Methods

Word Based Tokenization Limitations

Figure 1.15 Limitations of word-based tokenization: related words like “learn,” “learn-ing,” “learned,” and “learnt” are treated as entirely separate tokens, and out-of-vocabulary words cannot be processed.

Word based tokenization treats each word as an independent unit, creating fundamental challenges for language models. The most significant issue is the failure to recognize relationships between related words. Words sharing common roots like “learn,” “learning,” “learned,” and “learnt” are treated as entirely separate tokens, forcing the model to learn each variation independently without understanding their connection.

The vocabulary explosion presents another critical problem. English alone requires over 200,000 word tokens, with filler words like “this,” “is,” and “a” consuming valuable vocabulary space despite contributing minimal semantic value. Most critically, the out of vocabulary problem renders models helpless when encountering unseen words. Simple spelling mistakes transform “running” into the unrecognizable “runing,” while new terms or proper nouns become impossible to process, leaving the model unable to make educated guesses about meaning

Character Based Tokenization Drawbacks

Figure 1.16 Character-based tokenization reduces the vocabulary to 256 ASCII characters but dramatically increases sequence length.

Character tokenization solves vocabulary size by using only 256 ASCII characters, but creates severe new problems.

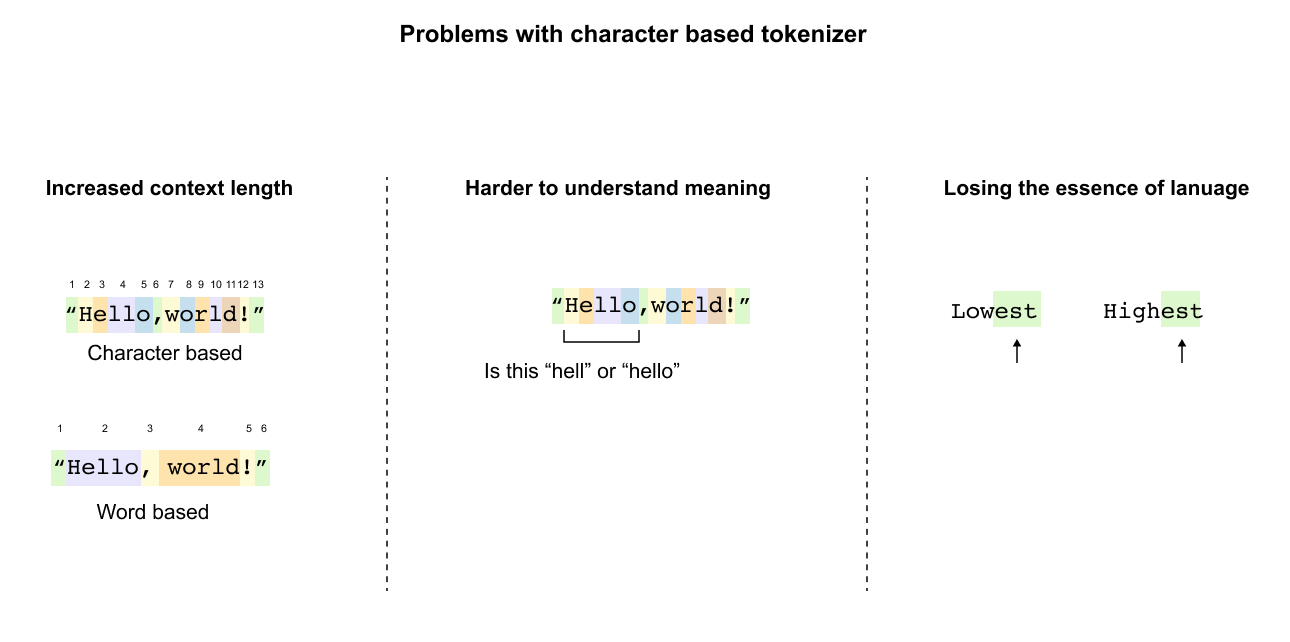

Figure 1.17 Sequence length explosion with character tokenization: “Hello, world!” grows from a few word tokens to thirteen character tokens, and individual letters carry no semantic meaning.

Sequence length explodes dramatically: “Hello, world!” grows from two (or six) word tokens to thirteen character tokens. This expansion makes processing computationally expensive and quickly exhausts context windows in longer texts.

More fundamentally, character tokenization destroys semantic understanding. Individual letters carry no meaning, forcing models to reconstruct word boundaries and meanings from scratch. The model cannot recognize that “lowest” and “highest” share the meaningful suffix “est” indicating superlatives. When presented with “Hello,world!” as individual characters, the model sees meaningless symbols rather than a greeting, losing the essence of language structure entirely.

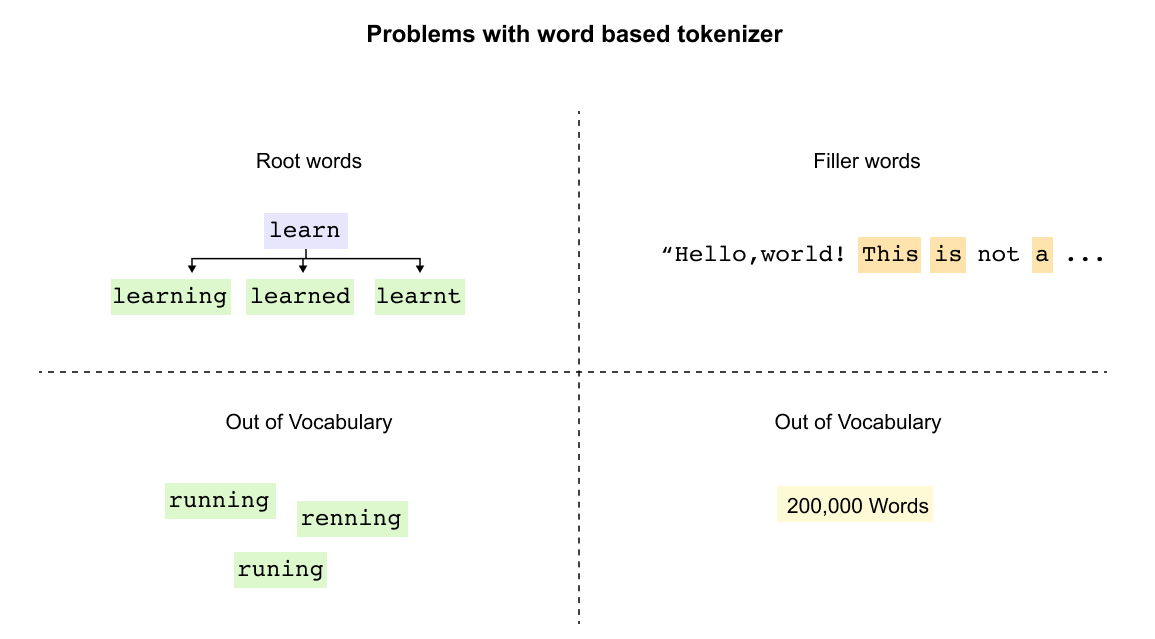

The Subword Tokenization Solution

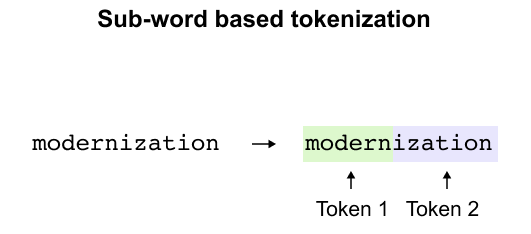

Figure 1.18 Subword tokenization splits “modernization” into “modern” and “ization,” both reusable components found across many English words.

Subword tokenization provides the optimal balance, breaking words into meaningful components. “Modernization” becomes “modern” and “ization,” both reusable parts appearing across many words. This approach maintains reasonable vocabulary size while preserving meaning, handles new words through familiar components, and keeps token counts manageable. The model can now understand misspellings and new terms by recognizing known subword patterns.

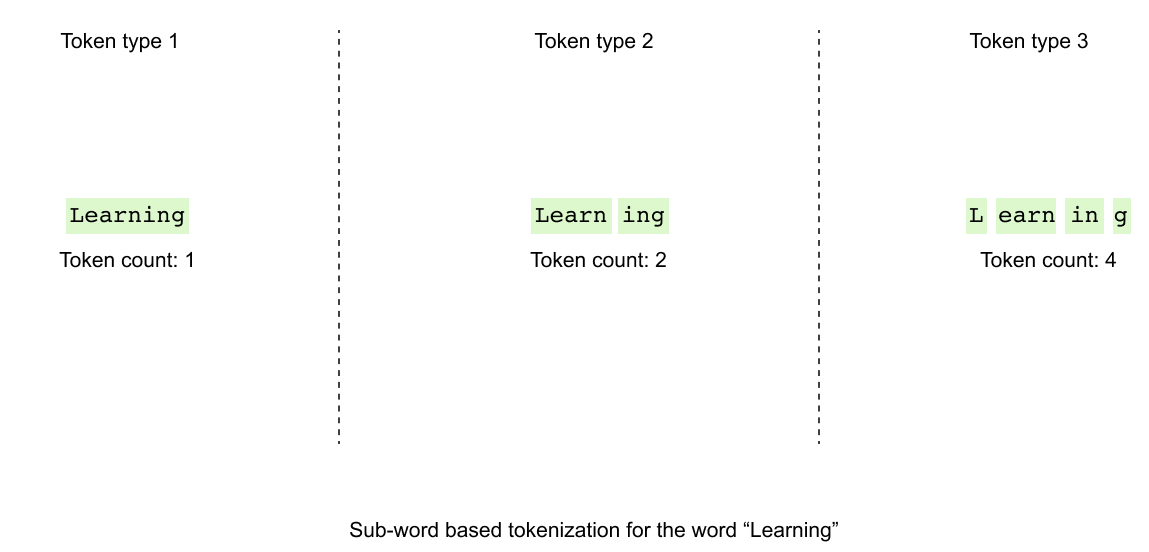

Figure 1.19 The tokenization challenge: how should “learning” be split? Byte PairEncoding provides a systematic, data-driven answer.

The challenge remains: how should “learning” tokenize? As one token, as “learn” plus “ing,” or broken further? Byte Pair Encoding provides the systematic answer, using frequency analysis to determine optimal splits that balance vocabulary efficiency with semantic preservation.

1.4 Byte Pair Encoding

Byte Pair Encoding transforms the challenge of tokenization into a systematic process. Originally developed as a text compression algorithm in the 1990s, BPE now serves as the foundation for tokenization in models like GPT. The algorithm iteratively merges the most frequent character pairs, building a vocabulary from the bottom up.

For LLMs, BPE builds vocabularies systematically. Consider this corpus with word frequencies:

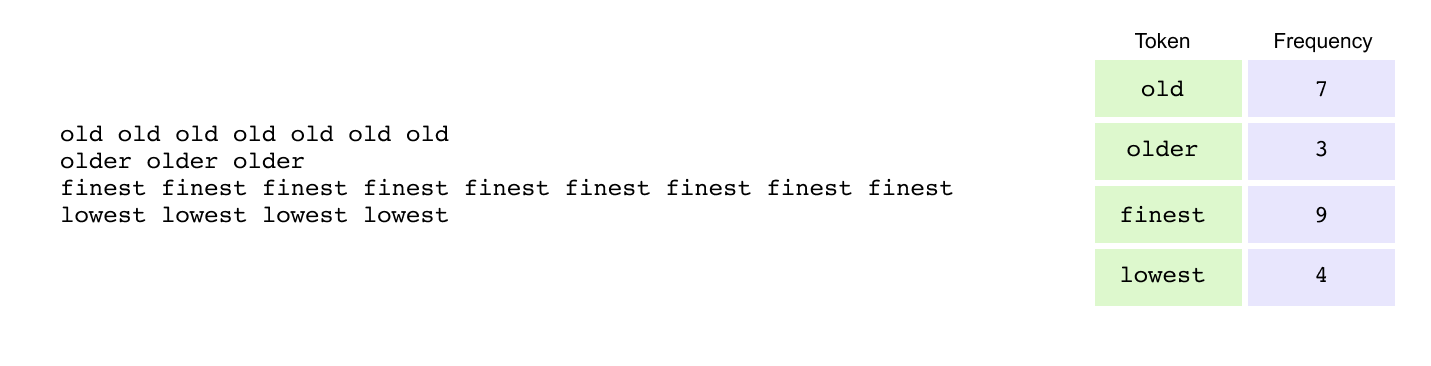

Figure 1.20 A small corpus with word frequencies used to illustrate the BPE algorithm: “old” appears 7 times, “older” 3 times, “finest” 9 times, and “lowest” 4 times.

Step 1: Add End-of-Word Markers

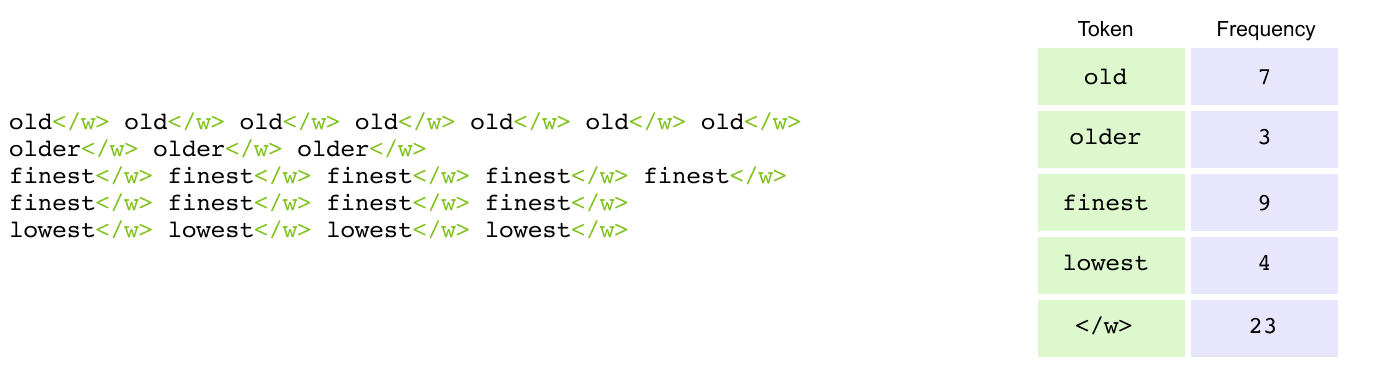

Figure 1.21 End-of-word markers (</w>) appended to each word to distinguish word boundaries and preserve morphological information.

The first step in BPE adds end-of-word markers (</w>) to distinguish word boundaries. Words transform as: old becomes old</w>, older becomes older</w>, finest becomes finest</w>, and lowest becomes lowest</w>. This boundary marker is crucial because the same character sequence carries different meanings based on position. The sequence “est” functions as a suffix in “lowest</w>” (indicating superlative) but as a prefix in “esteem” (with completely different meaning). Without these markers, the tokenizer cannot distinguish between identical character sequences that serve different linguistic roles, losing critical information about word structure and morphology.

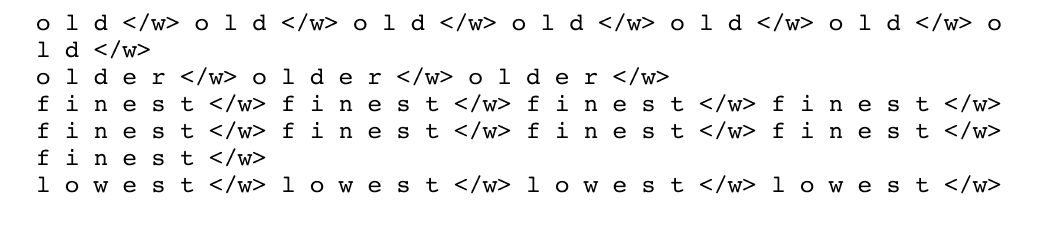

Step 2: Split into Characters

Figure 1.22 Each word is decomposed into individual characters, providing atomic units for iterative merging.

After adding end-of-word markers, each word is decomposed into individual characters, treating each as a separate token. The word old</w> becomes the sequence [o, l, d, </w>], while older</w> splits into [o, l, d, e, r, </w>]. Similarly, finest</w> breaks down to [f, i, n, e, s, t, </w>] and lowest</w> to [l, o, w, e, s, t, </w>]. This character-level decomposition serves as the starting point for BPE, providing the atomic units from which larger, more meaningful tokens will be built through iterative merging based on frequency patterns in the data.

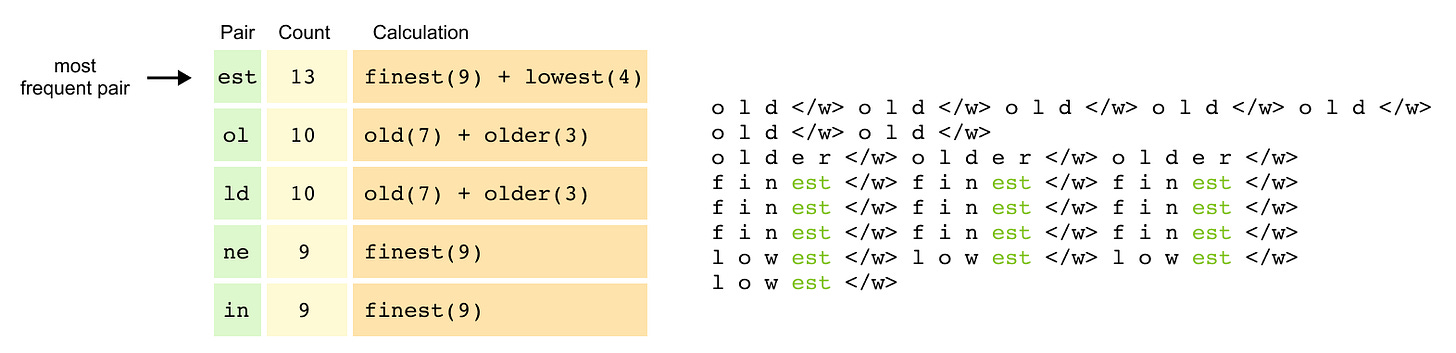

Step 3: Count Character Pairs & Merge

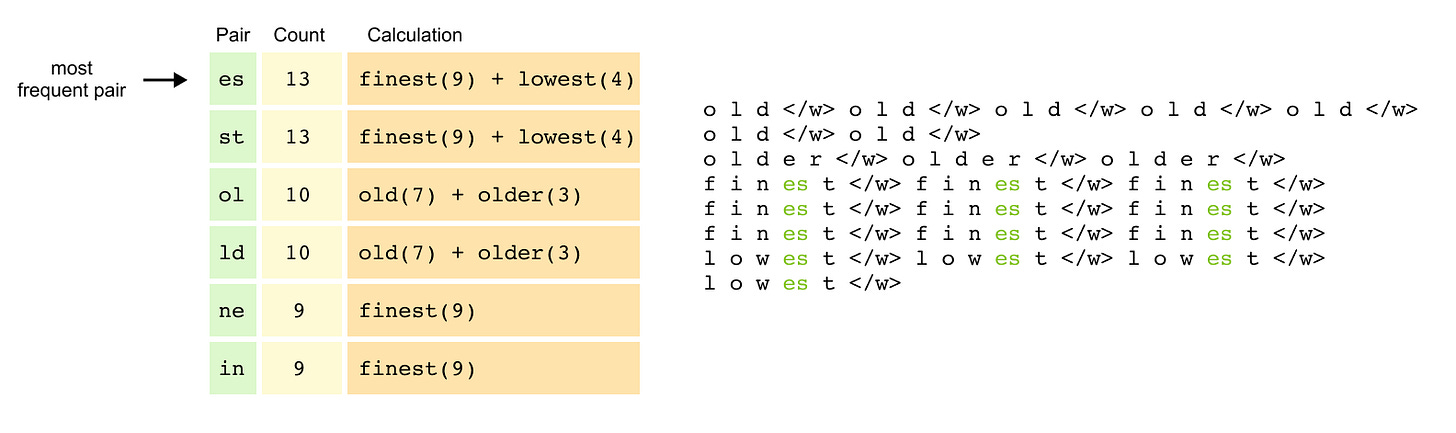

Figure 1.23 Counting adjacent character pairs across the corpus, weighted by word frequency. The pair “es” appears 13 times and is selected for the first merge.

The algorithm now counts all adjacent character pairs across the corpus, weighted by word frequency. The pair “es” appears 13 times (9 from “finest” plus 4 from “lowest”), as does “st” with the same distribution. The pairs “ol” and “ld” each appear 10 times (7 from “old” plus 3 from “older”), while “ne” and “in” from “finest” contribute 9 occurrences each.

With “es” as the most frequent pair, the algorithm performs its first merge, creating a new token “es” and updating the representations: finest</w> becomes [f, i, n, es, t, </w>] and lowest</w> becomes [l, o, w, es, t, </w>].

Figure 1.24 After the first merge (“es”), pairs are recounted and “est” emerges as the next most frequent pair, triggering a second merge.

After recounting pairs with this new token, “est” emerges as highly frequent, triggering a second merge. The token “est” replaces the “es” and “t” sequences, transforming finest</w> into [f, i, n, est, </w>] and lowest</w> into [l, o, w, est, </w>]. Through these iterative merges, BPE progressively builds larger, more meaningful tokens from the most frequent patterns in the data, creating an efficient vocabulary that captures common linguistic structures.

Step 4: Building the Complete Vocabulary

The merging process continues iteratively, identifying increasingly complex patterns. Common prefixes like “old” become single tokens when they appear frequently across multiple words. Suffixes with end markers like “est</w>” are preserved as units to maintain their grammatical function. Frequent character sequences like “low” merge into single tokens regardless of their position.

After multiple iterations, the final vocabulary becomes a hierarchical collection of tokens at different granularities. It contains individual characters [o, l, d, e, r, f, i, n, w, s, t] for handling rare sequences, common subwords [es, est, old, low, fin] that appear across multiple words, and complete frequent words [old</w>, finest</w>] that occur often enough to warrant their own tokens. This multi-level vocabulary enables efficient encoding of common patterns while maintaining the flexibility to tokenize any possible input.

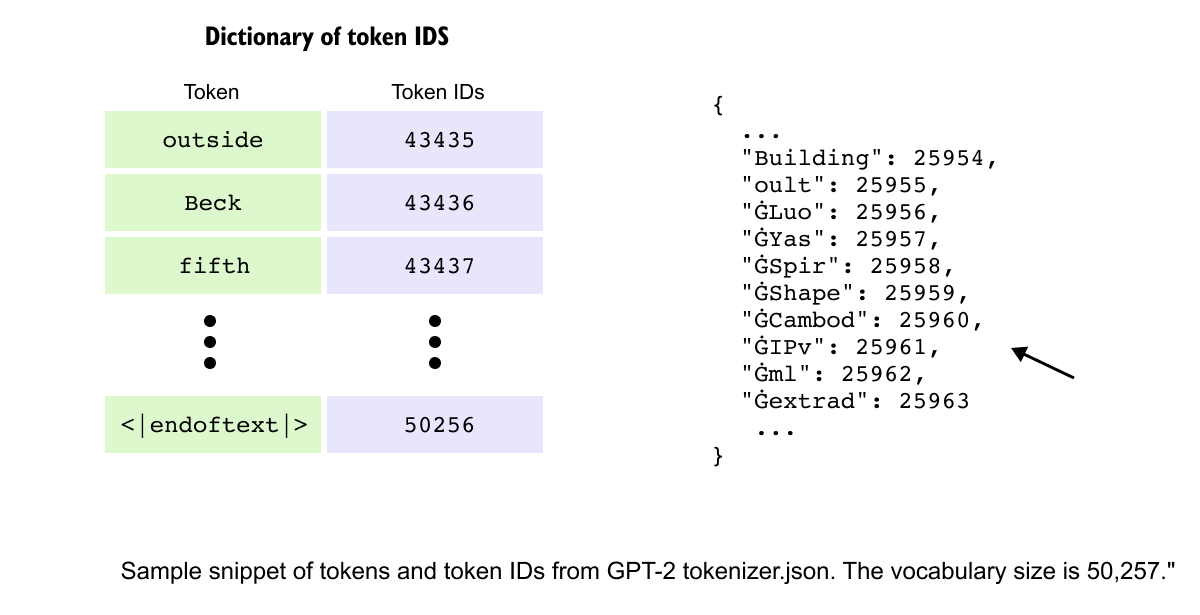

Figure 1.25 GPT-2’s final vocabulary of 50,257 tokens, built from 50,000 BPE merges. Each token is mapped to a unique numerical ID used by the model internally.

GPT-2 performs 50,000 merges to build its vocabulary, creating a rich token set that balances compression with expressiveness. Each token in this vocabulary is assigned a unique token ID, a numerical identifier that the model uses internally. For example, in GPT-2’s vocabulary, common words like “Building” map to ID 25954, while special tokens like “</endoftext>” receive IDs like 50256, creating a complete dictionary of 50,257 token-ID pairs that serves as the bridge between text and numerical processing.

When the model encounters an unfamiliar word, it gracefully degrades to smaller subwords or individual characters, ensuring robust handling of misspellings, neologisms, or foreign terms. This fallback mechanism makes BPE remarkably resilient, capable of processing any text while maintaining efficiency for common patterns.

With our text now converted into meaningful tokens through BPE and mapped to numerical IDs, the next challenge is transforming these discrete symbols into continuous numerical representations that neural networks can process, leading us to the crucial concept of embeddings.

1.5 Word Embedding

After tokenization transforms text into discrete symbols and assigns them numerical IDs, we face a fundamental challenge: these IDs are merely labels that convey no semantic information. The token ID 25954 for “Building” tells the model nothing about buildings, construction, or architecture. To enable neural networks to process language meaningfully, we need to convert these discrete tokens into continuous numerical representations that capture semantic relationships. This is where word embeddings become essential.

The Limitations of Simple Encoding

Early approaches to numerical representation revealed critical limitations. One-hot encoding represents each token as a vector of zeros with a single one at the token’s position. For a vocabulary of 50,000 tokens, “cat” might be encoded as 50,000 zeros except for a single one at position 3. While this eliminates arbitrary ordering, it creates sparse, high-dimensional vectors where every word is equally distant from every other word. The vectors for “cat” and “dog” are as orthogonal as those for “cat” and “quantum”, providing no semantic signal. Similarly, bag-of-words models count word occurrences but lose all sequential information, treating “dog bites man” and “man bites dog” identically despite their opposite meanings.

Learning Meaning Through Context

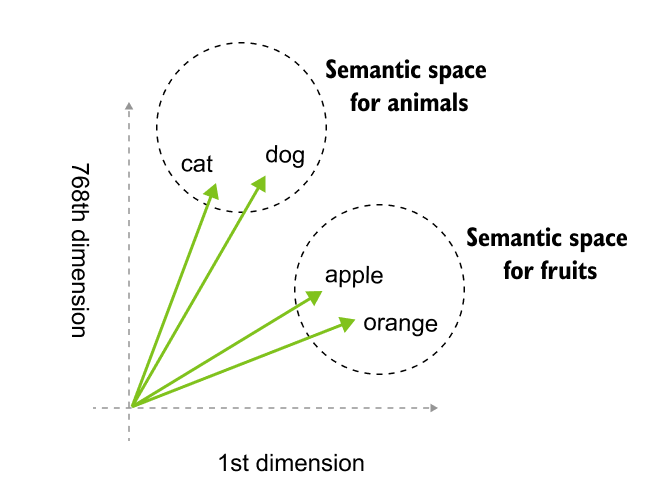

Figure 1.26 high-dimensional embeddings transform semantic meaning into geometric coordinates. In this 768-dimensional space, linguistic relationships are defined by proximity, grouping concepts like animals and fruits into distinct neighborhoods.

The breakthrough came from the distributional hypothesis: words appearing in similar contexts tend to have similar meanings. If “coffee” frequently appears near “morning,” “cup,” and “brew,” while “tea” appears near similar words, a model can learn that coffee and tea are related concepts. Word2Vec revolutionized this approach by training neural networks to predict words from context (CBOW) or context from words (Skip-gram). Through millions of training examples, the network’s hidden layer learns to position similar words near each other in vector space. After training, “king” naturally clusters near “queen” and “prince,” while “banana” groups with “apple” and “fruit.” Most remarkably, these embeddings capture analogical relationships geometrically: the vector arithmetic “king - man + woman” yields a vector nearly identical to “queen,” demonstrating that the model has learned abstract concepts like gender and royalty as directions in space.

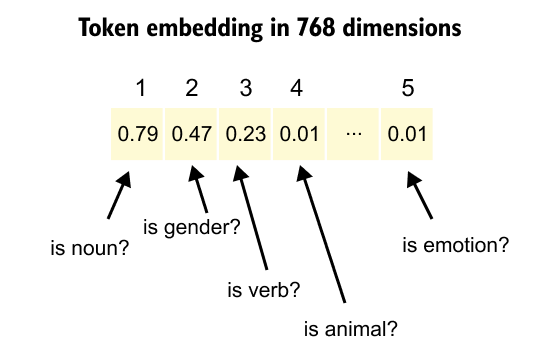

Embeddings in Large Language Models

Figure 1.27: Dense embedding vectors transform token IDs into high-dimensional representations where specific dimensions encode learned semantic features. This layer functions as a learned lookup table that maps tokens to unique vectors, enabling the model to capture nuanced semantic attributes like parts of speech or categorical relationships.

Modern LLMs transform token IDs into dense embedding vectors, typically ranging from 768 to 4096 dimensions, where each dimension encodes aspects of meaning learned during training. Unlike Word2Vec’s static embeddings where each word has one fixed representation, transformer models employ contextual embeddings that dynamically adjust based on surrounding tokens. The word “bank” receives different vector representations when appearing in “river bank” versus “investment bank,” enabling the model to disambiguate meaning through context. These embeddings are learned end-to-end during training, with the model discovering optimal representations that maximize its ability to predict the next token. The embedding layer becomes a learned lookup table that maps each of the 50,000+ token IDs to a unique vector in high-dimensional space, where semantic similarity translates to geometric proximity.

The power of LLM embeddings lies in their ability to encode multiple layers of linguistic information simultaneously. Each vector captures semantic meaning (cat near dog), syntactic roles (verbs clustering separately from nouns), conceptual relationships (similar terms grouping together), and even abstract patterns like sentiment or formality. Through billions of training examples, the model learns to position tokens in this space such that vector operations correspond to meaningful transformations. This geometric structure enables transformers to perform complex reasoning by manipulating these vectors through attention mechanisms and feed-forward networks, turning language understanding into mathematical computation.

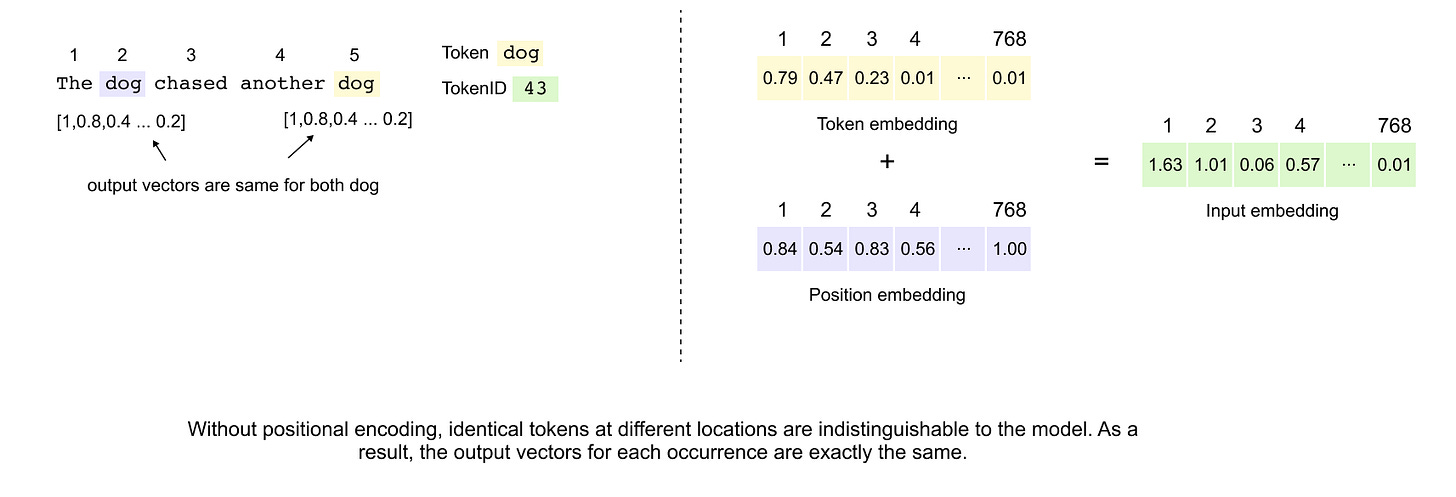

However, embeddings alone cannot capture the sequential nature of language, where word order fundamentally changes meaning. This limitation leads us to positional embeddings, which encode each token’s location in the sequence, enabling transformers to understand that “dog bites man” differs crucially from “man bites dog.”

Positional Embedding

The Need for Positional Information

figure 1.28: Positional embeddings introduce sequential context into the Transformer architecture by adding unique position-aware vectors to token embeddings. This summation allows the model to distinguish identical tokens appearing at different sequence locations, enabling the architecture to capture syntactic and referential relationships despite its inherently parallel, set-based processing nature.

In natural language, word order fundamentally shapes meaning. Consider the sentences “The dog chased the cat” versus “The cat chased the dog.” While both sentences contain identical words, their meanings differ entirely based on word positioning. Traditional sequential models like RNNs inherently capture this ordering through their recurrent nature. However, the Transformer architecture processes all tokens simultaneously through self-attention, treating input as an unordered set. Without explicit positional information, a Transformer would produce identical representations for “dog” regardless of its position in the sentence, making it impossible to distinguish between different occurrences or understand sequential relationships.

This limitation becomes particularly problematic when dealing with pronouns and references. In “The dog chased the ball but it could not catch it,” the two instances of “it” refer to different entities based solely on their positions relative to other words. To address this fundamental limitation, Transformers incorporate positional embeddings that encode sequence order information directly into the model’s representations.

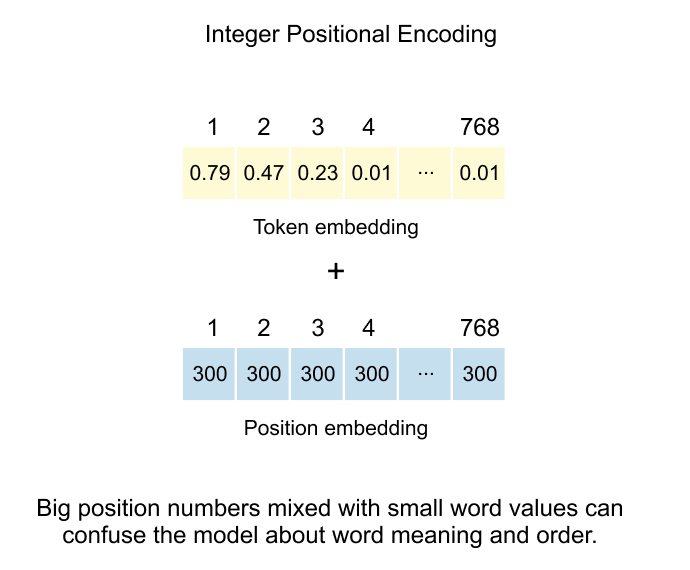

Integer Positional Encoding: The Simplest Approach

figure 1.29: The additive method of injecting positional data by combining token embeddings with integer-based position vectors, while noting the drawback that large integer values can interfere with and potentially confuse the model regarding the original word's semantic meaning.

The most straightforward solution involves assigning each position a unique integer value. In this scheme, if a token appears at position 300 in the sequence, we create a positional embedding vector where every dimension contains the value 300. This vector, matching the token embedding dimensions, gets added element-wise to the token embedding.

For a concrete example with an 8-dimensional embedding space, the token “dog” at position 300 would receive a positional embedding of [300, 300, 300, 300, 300, 300, 300, 300]. The final input representation becomes the sum of the token embedding and this positional embedding.

However, this approach suffers from a critical flaw: scale mismatch. Token embeddings typically contain small values clustered around zero, carefully learned to capture semantic nuances. Position values, especially for longer sequences, can grow arbitrarily large. When position 500 adds [500, 500, ...] to delicate token embeddings with values like [0.23, -0.15, 0.08, ...], the positional signal completely overwhelms the semantic information. The model loses the ability to distinguish between different words, focusing instead on their positions.

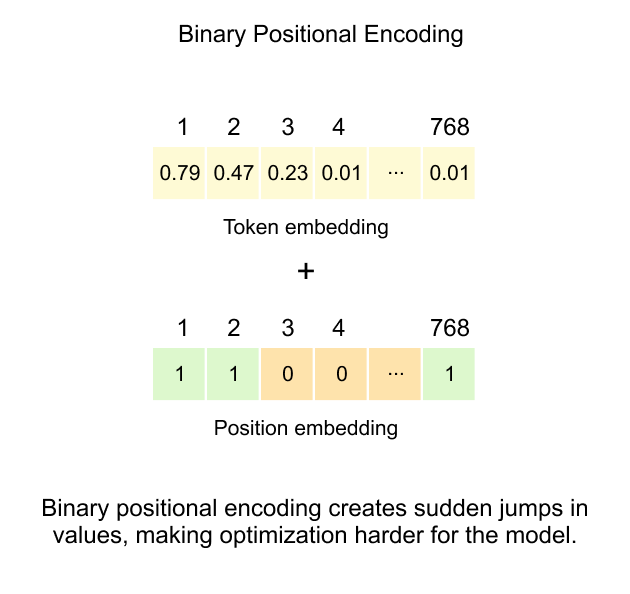

Binary Positional Encoding: Constraining the Range

figure 1.30: This technique represents positions using binary bit strings to keep values between 0 and 1, but it creates sudden jumps in the embedding space that complicate the training process for the model.

To address the magnitude problem inherent in integer encoding, binary positional encoding represents positions using their binary representation, naturally constraining all values between 0 and 1. This approach transforms each position number into its binary form and uses those bits directly as the positional embedding vector.

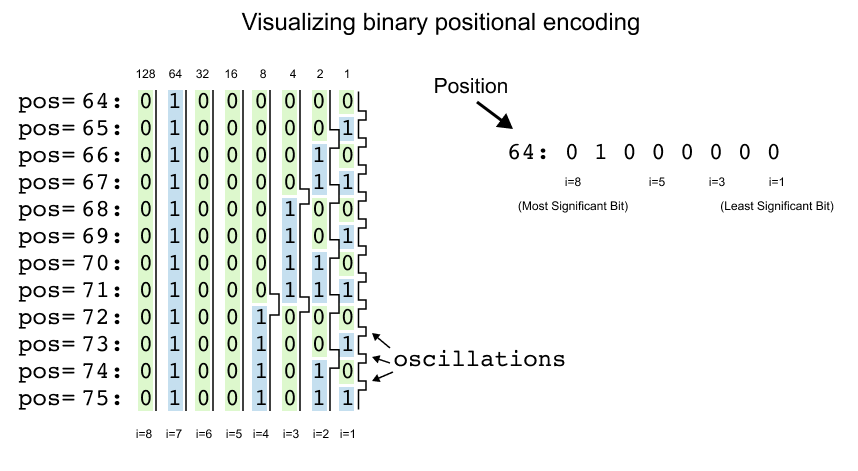

Figure 1.31: This demonstrates how binary representations change across consecutive integer positions. It highlights the rapid bit flipping in the least significant bit position which creates frequency oscillations that make optimization more difficult for the model.

Consider the visualization showing positions 64 through 75 with their 8-bit binary representations. Position 64, which equals 01000000 in binary, becomes the embedding vector [0, 1, 0, 0, 0, 0, 0, 0]. Here, each bit position corresponds to a dimension in the embedding space, with i=8 representing the most significant bit (MSB) and i=1 representing the least significant bit (LSB).

Looking at the pattern across consecutive positions reveals a fascinating structure. Position 64 starts with [0, 1, 0, 0, 0, 0, 0, 0]. Position 65 becomes [0, 1, 0, 0, 0, 0, 0, 1], position 66 transforms to [0, 1, 0, 0, 0, 0, 1, 0], and position 67 yields [0, 1, 0, 0, 0, 0, 1, 1]. The rightmost bit (i=1) flips with every single position increment, creating a rapid alternation between 0 and 1.

The second bit from the right (i=2) follows a different rhythm, maintaining its value for two positions before flipping. It stays 0 for positions 64-65, switches to 1 for positions 66-67, returns to 0 for positions 68-69, and so forth. The third bit (i=3) changes every four positions, remaining stable from 64-67, then flipping for 68-71.

This creates a hierarchical encoding scheme where each bit position operates at a different frequency. The LSB oscillates most rapidly, capturing fine-grained positional differences between adjacent tokens. Moving leftward through the bits, oscillation frequencies decrease exponentially. The fourth bit changes every 8 positions, the fifth every 16 positions, the sixth every 32 positions, and the seventh every 64 positions. The MSB (i=8) remains constant for 128 consecutive positions before flipping.

In the visualization, this pattern becomes immediately apparent. The rightmost column shows constant flickering between (0) and (1) for every position. The i=2 column displays pairs of same cells. The i=3 column shows groups of four, and this doubling pattern continues across all dimensions. The leftmost column (i=8) remains uniformly across the entire visible range, as positions 64-75 all share the same MSB value of 0.

This encoding elegantly solves the scale problem that plagued integer encoding. Instead of values potentially reaching into the thousands, every dimension now contains either 0 or 1. When added to token embeddings clustered around zero, these binary values preserve the semantic information while injecting positional signals at a comparable scale.

The hierarchical structure provides the model with positional information at multiple granularities simultaneously. Lower-indexed dimensions encode local sequential relationships, helping the model understand which tokens appear near each other. Higher-indexed dimensions capture global positional context, indicating whether tokens appear in the first half versus second half of the sequence, or in early versus late quarters.

However, binary encoding introduces a critical limitation: discontinuity. The hard transitions between 0 and 1 create step functions rather than smooth gradients. When the model needs to learn relationships between positions 67 ([0, 1, 0, 0, 0, 0, 1, 1]) and 68 ([0, 1, 0, 0, 0, 1, 0, 0]), multiple dimensions flip simultaneously. These abrupt changes complicate gradient-based optimization, as the loss landscape contains sharp edges and discontinuous regions.

During backpropagation, these discrete jumps prevent smooth gradient flow. Small parameter updates cannot gradually transition the model’s understanding between binary states. The optimizer must navigate around these discontinuities, potentially getting stuck in suboptimal configurations or requiring careful learning rate scheduling to handle the non-smooth optimization landscape.

Despite these challenges, binary encoding demonstrates the key insight that positional information can be encoded through patterns of oscillation at different frequencies. This conceptual breakthrough, showing that different dimensions can operate at different temporal scales, directly inspired the development of sinusoidal positional encoding, which maintains these beneficial oscillatory patterns while ensuring continuous, differentiable representations throughout the embedding space.

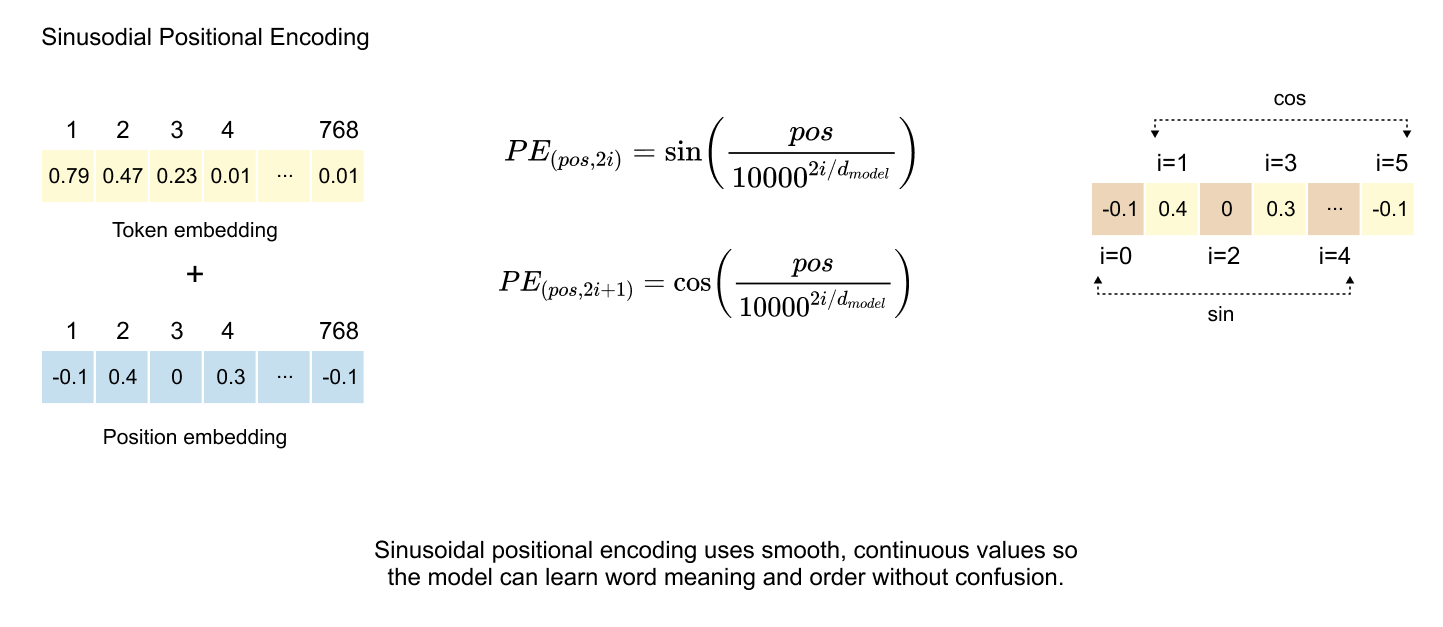

Sinusoidal Positional Encoding: Continuous Representations

Figure 1.32: Sinusoidal PE applies trigonometric sine and cosine functions to generate continuous and bounded positional vectors, which enables the model to learn sequential relationships while avoiding the optimization challenges and discontinuities inherent in integer and binary positional encodings.

The breakthrough in positional encoding came with the sinusoidal approach, introduced in the seminal “Attention Is All You Need” paper. This method preserves the oscillatory patterns discovered in binary encoding while ensuring smooth, continuous values bounded between -1 and 1, eliminating the discontinuity problems that hindered optimization.

The Mathematical Foundation

The sinusoidal formulation employs alternating sine and cosine functions across dimensions:

Where pos denotes the token’s position in the sequence, i represents the dimension index, and d_model indicates the total embedding dimensionality. The constant 10000 serves as the base for creating a geometric progression of wavelengths across different dimensions.

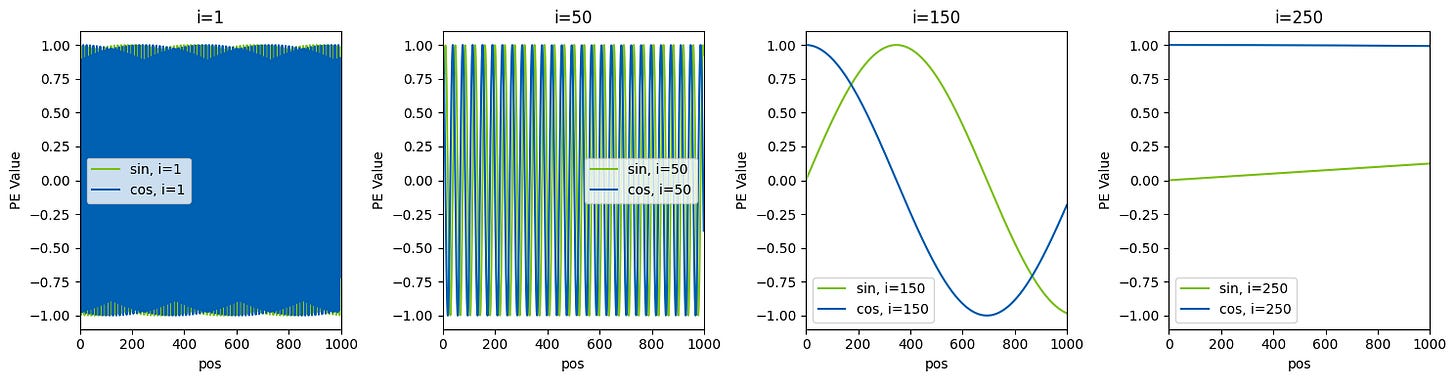

Frequency Spectrum Analysis

Taking GPT-2’s architecture as an example, with d_model = 768 and maximum context length = 1024, we can observe how different dimensions encode positional information at varying frequencies. For any given position, we compute 768 values using the alternating sine-cosine formulas.

At the lowest dimension (i=0), the formula simplifies to sin(pos/1) = sin(pos), creating rapid oscillations. The adjacent dimension uses cos(pos/1) = cos(pos). As the dimension index increases, the denominator 10000^(2i/768) grows exponentially, progressively slowing the oscillation frequency.

Sinusoidal Patterns Across Different Dimensions

Figure 1.33: The visualization reveals how positional encodings behave across four different dimension indices:

At i=1, both sine and cosine components oscillate extremely rapidly, appearing as dense vertical lines that alternate between approximately -1 and 1. This high-frequency pattern changes with nearly every position, capturing fine-grained local relationships between adjacent tokens.

At i=50, the oscillation frequency decreases noticeably. The sine and cosine waves create regular patterns with periods spanning roughly 20-30 positions. These medium-frequency components encode relationships at the phrase or sentence level.

At i=150, the waves become smooth and gradual, with clear sinusoidal curves visible. The sine (green) and cosine (blue) components maintain their 90-degree phase offset, completing only 2-3 full cycles across the entire 1024-position range. These dimensions capture broader structural information about whether tokens appear in early, middle, or late portions of the sequence.

At i=250, the oscillation becomes extremely slow, with the functions barely completing a single cycle across the full context. The cosine component remains nearly constant around 1, while the sine component stays close to 0, providing stable anchoring for global position context.

Sinusoidal encoding creates a hierarchical representation where each position receives a unique 768-dimensional fingerprint. Lower dimensions oscillate rapidly between positions, capturing local token relationships and word order, while higher dimensions change gradually, encoding broader context like paragraph boundaries and document structure. This combination of multiple sine-cosine pairs at different frequencies generates a unique signature for every position. Unlike binary encoding’s abrupt 0-to-1 transitions, sinusoidal encoding provides smooth, continuous functions that enable stable gradient flow during backpropagation, dramatically improving training efficiency. The bounded range between -1 and 1 keeps positional signals at a scale comparable to token embeddings, preventing positional information from overwhelming semantic content while allowing the optimizer to make incremental refinements.

The sinusoidal approach offers significant practical advantages: it requires no learned parameters, reducing model complexity and training overhead, and its mathematical formulation naturally extends to arbitrary sequence lengths, potentially enabling generalization beyond training context sizes. In practice, positional encodings are precomputed for the maximum sequence length and stored as a lookup table. During processing, these encodings are retrieved and added element-wise to token embeddings, preserving semantic information while injecting positional signals. This simple yet elegant solution simultaneously addresses multiple challenges: maintaining bounded values, ensuring smooth optimization, providing unique position identification, and encoding multiscale temporal information. These properties have established sinusoidal positional encoding as a cornerstone of the Transformer architecture, inspiring numerous variations while remaining widely used in its original form across modern language models.

1.6 Transformer Block

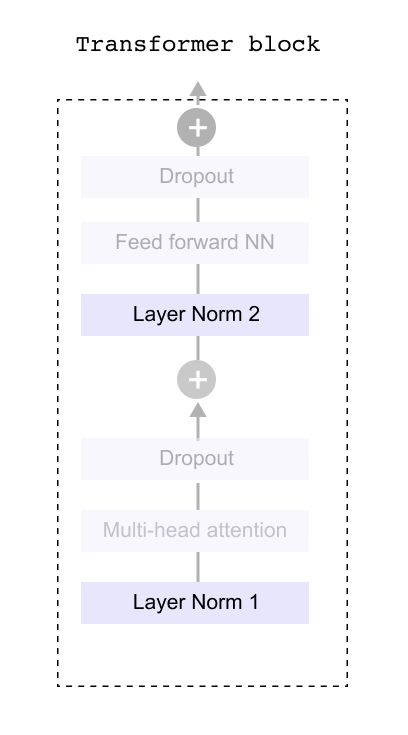

Figure 1.34 Inside the transformer block: multi-head attention, feed-forward network, layer normalization, and dropout layers work in sequence to process token representations

Having converted our raw text into meaningful numerical representations through tokenization and embeddings, we now enter the heart of the language model: the Transformer Block. This is where the real magic happens. The block contains several components working in sequence, including layer normalization, dropout layers, and feed forward networks. However, before we dive into these supporting elements, we need to understand the star of the show: the attention mechanism. The multi head attention layer is what gives transformers their remarkable ability to understand context and relationships between words, no matter how far apart they appear in a sentence. Once we grasp how attention works and explore the feed forward network that follows, we can then circle back to understand how the other components like dropout and layer normalization help stabilize and improve the overall system. For now, let’s focus on what makes transformers truly powerful: their attention mechanism.

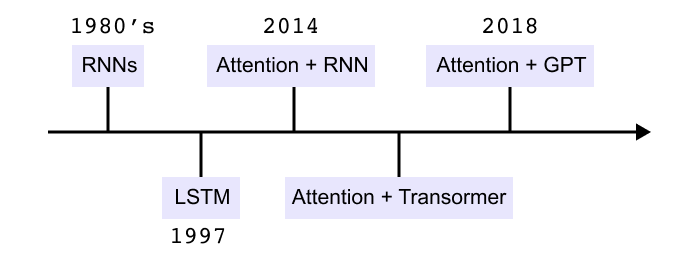

1.7 The Need for Attention Mechanism

Figure 1.35 Timeline of sequence modeling methods from early RNNs to LSTMs, attention with RNNs, transformers, and GPT models.

Feedforward neural networks see every input as independent. For a sentence such as “The cat sat on the mat” the model processes each word separately and has no built in notion of order or context. This is not enough for language, where meaning depends on how words are arranged.

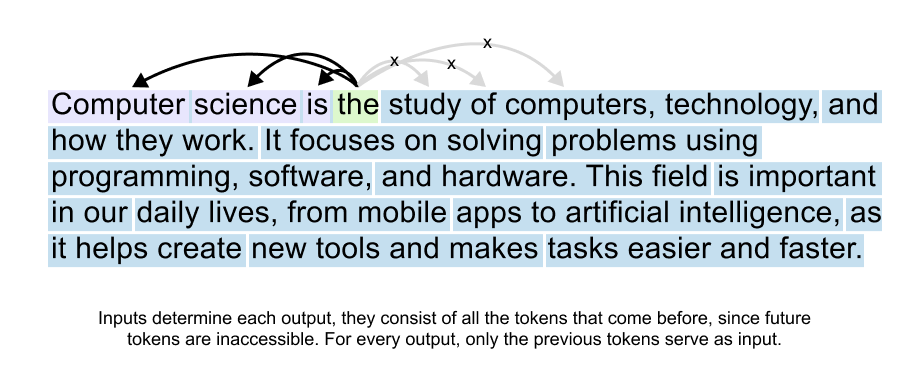

Recurrent neural networks introduce a hidden state that is passed along the sequence. The encoder reads tokens one by one, updates its hidden state at each step, and hands the final state to a decoder. The decoder must use this single vector as a summary of the entire input sentence. As sequences get longer, early information is squeezed into this fixed size state and gradually fades. This is the context bottleneck.

LSTMs improve the situation with a cell state and gates that control what to store and what to forget. They maintain information over longer spans than basic RNNs, but they still process tokens step by step and still rely on compressed hidden states. Long sentences can still overwhelm this bottleneck.

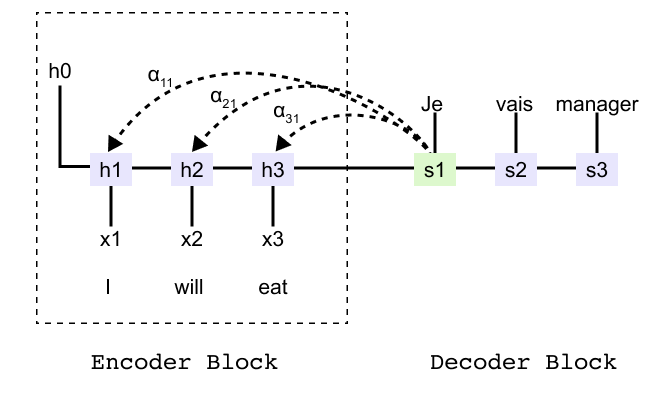

Figure 1.36: Encoder-decoder RNN for the sentence “I will eat” showing encoder hidden states h1, h2, h3 and decoder states that must rely on a single summary vector.

To see the bottleneck more concretely, consider an encoder decoder model that translates the English sentence “I will eat into French”. The encoder produces hidden states h1, h2, h3 for the three input tokens and a final state that is passed to the decoder. Without attention, the decoder can only use this final state when generating the first French word. It has no direct way to reach back to h1 or h2.

Attention

Attention removes the hard bottleneck by giving the decoder direct access to all encoder states. At each decoding step the model compares the current decoder state with every encoder state and produces attention scores. After a softmax these scores become attention weights that sum to one.

The decoder then forms a context vector as a weighted sum of the encoder states. If the first input word is most relevant for the current output, its weight may be close to one while the others are close to zero. At the next step the weights are recomputed and the model can shift its focus to a different part of the sentence.

In the translation example, when the decoder produces the first French word it might focus almost entirely on h1. When it moves on to the second French word it can focus more on h2, and so on. Instead of depending on a single final state, the decoder now has a flexible view over the entire input sequence at every step.

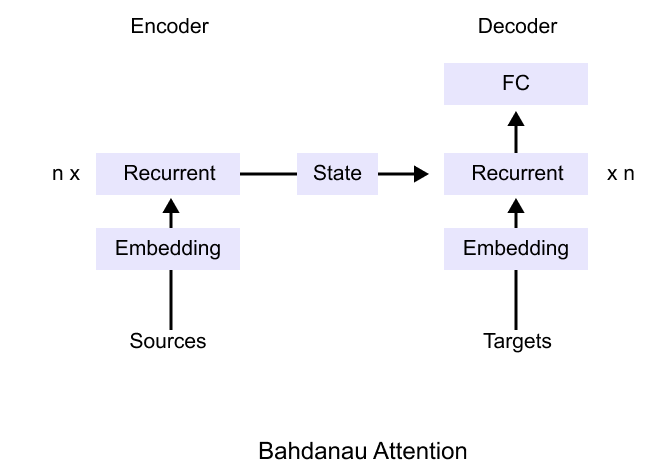

Bahdanau attention

Figure 1.37: Bahdanau attention architecture: the encoder produces a sequence of states and the decoder combines its own state with a context vector formed as a weighted sum of all encoder states

Bahdanau attention was the first widely adopted implementation of this idea. The encoder is still a recurrent network that produces a sequence of hidden states. The decoder is also recurrent, but before predicting each target token it computes alignment scores between its current state and every encoder state. These scores become attention weights, and their weighted sum is the context vector used for prediction.

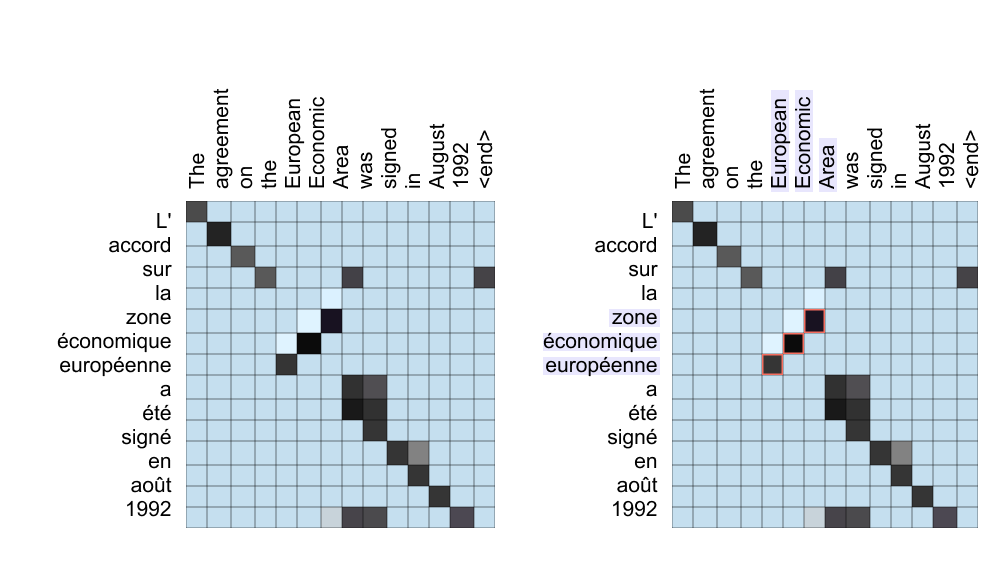

The attention weights can be visualized as a matrix whose rows correspond to target words and columns correspond to source words. Each cell shows how strongly the model attends to a particular source word when generating a particular target word. This view reveals attention as a soft alignment between the two sentences.

Figure 1.38: Attention heatmaps for a French-to-English sentence pair. The left grid shows overall alignments; the right grid highlights how the model focuses on “European Economic Area” when generating “zone economique europeenne,” capturing word reordering.

These heatmaps show that many words align along a near diagonal, indicating similar order in both languages. Off diagonal patterns reveal reordered phrases. For example, the French adjective corresponding to European appears last in the phrase, but its attention weights point back to the first English word. This ability to align by meaning rather than position is what allows attention based models to handle flexible word order and long range dependencies.

Finally, it is helpful to remember where this attention block lives inside the full transformer model from earlier chapters. The transformer encoder and decoder both contain stacked attention and feedforward sublayers that operate on token and positional embeddings.

We are now ready to see why attention became the central idea in modern language models. Starting from simple recurrent networks and LSTMs, we saw how the context bottleneck makes it hard to remember all the details of a long sentence. Bahdanau attention solved this by letting the decoder look back at every encoder state and learn soft alignments between source and target words, which we visualized through attention weights and heatmaps. So far, attention has connected two different sequences, such as English and French sentences. In the next section we will study self attention in detail and see how letting every token attend to every other token becomes the core operation of the transformer.

1.8 Self Attention Mechanism

What does Self Attention actually means ?

Now that we understand the mechanics of attention, let’s clarify what makes self-attention special, the key concept behind modern language models like Transformers.

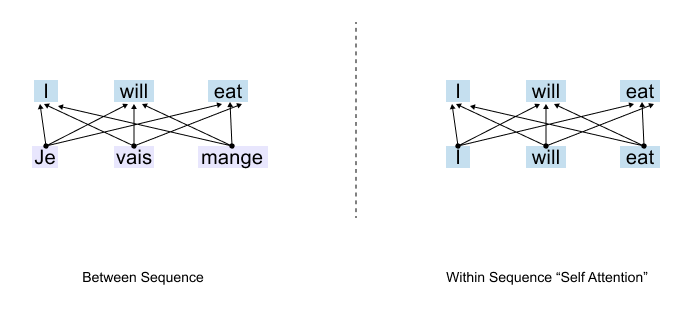

Two Types of Attention

Figure 1.39: Two types of attention: cross-attention connects words across different sequences (e.g., translation), while self-attention connects words within the same sequence.

To understand self-attention, we first need to see where attention was used before. There are two fundamental ways attention can work:

Between Sequences: Attention connects words across different sequences, think of translating from one language to another.

Within a Sequence: Attention connects words within the same sequence to capture relationships and context.

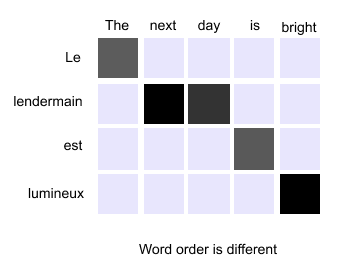

Attention in Translation

Figure 1.40: Cross-attention in translation: the English phrase “The next day is bright” is aligned to its French counterpart, with attention determining which source words correspond to which target words.

In traditional translation tasks, attention operates between two sequences. Imagine translating the English phrase “The next day is bright” into French. The word order might change. “Day” might align with “jour,” but its position in the French sentence could be different. Attention helps the model figure out these cross-language alignments, which English word corresponds to which French word. This works beautifully for translation. But what happens when we’re not translating at all?

Enter Self-Attention

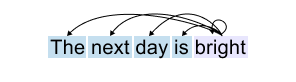

Figure 1.41: Self-attention: every word in a single sentence attends to all other words in that same sentence to build contextual understanding.

Consider a different task: predicting the next word in a sentence. Or understanding what a pronoun refers to. Or simply trying to grasp the meaning of a sentence. Here, we don’t have two separate sequences. We have just one, the sentence itself. This is where self-attention comes in.

Self-attention means that every word in a sentence attends to all other words in that same sentence. Instead of looking across two different sequences (like English and French), the model examines how words relate to each other within a single sequence. The word “day” attends to “next,” to “bright,” to “the”, to everything in its own sentence. It’s attention turned inward. The sequence attending to itself. That’s why we call it self-attention. We cannot encode these complex relationships directly in the attention mechanism using just the raw input embeddings. The connections between words depend on context, grammar, meaning, and a dozen other subtle factors that shift from sentence to sentence.

So what do we do when faced with complexity we can’t hard-code? We let the model learn it. We leave it to weight matrices that can be trained. Before we dive into the mechanics, let’s be clear about our goal. We start with input embeddings, numerical representations of words. But here’s what we want to end up with: context vectors.

What’s the difference?

An input embedding represents a word in isolation. The embedding for “bank” is always the same, whether you’re talking about a financial institution or the side of a river. But a context vector represents a word as it appears in a specific sentence, infused with information from the words around it.

Think about

“The dog chased the ball but it could not catch it.”

The input embedding for the second “it” doesn’t know what “it” refers to, it’s just a generic representation. But the context vector we’re building will carry information from “ball,” from “catch,” from the entire sentence.

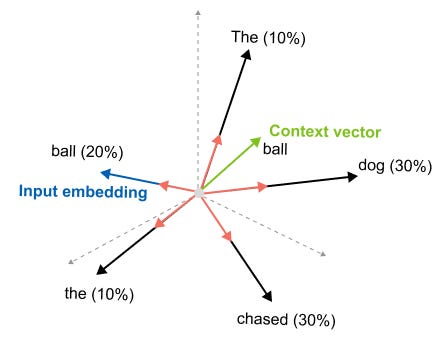

Figure 1.42: From input embedding to context vector: the static representation of a word is enriched with information from all surrounding words through self-attention.

It will understand that this particular “it” refers to the ball. So our entire journey with self-attention, the queries, the keys, the attention scores we’re about to explore, all of it serves one purpose: transforming static input embeddings into dynamic context vectors that understand meaning in context.

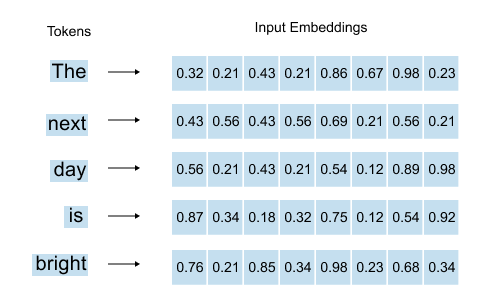

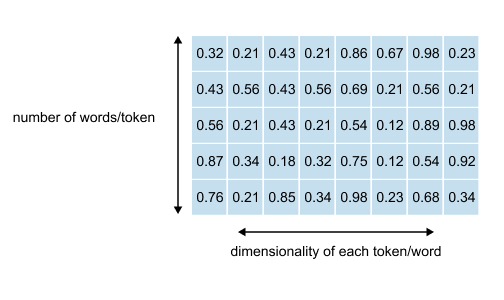

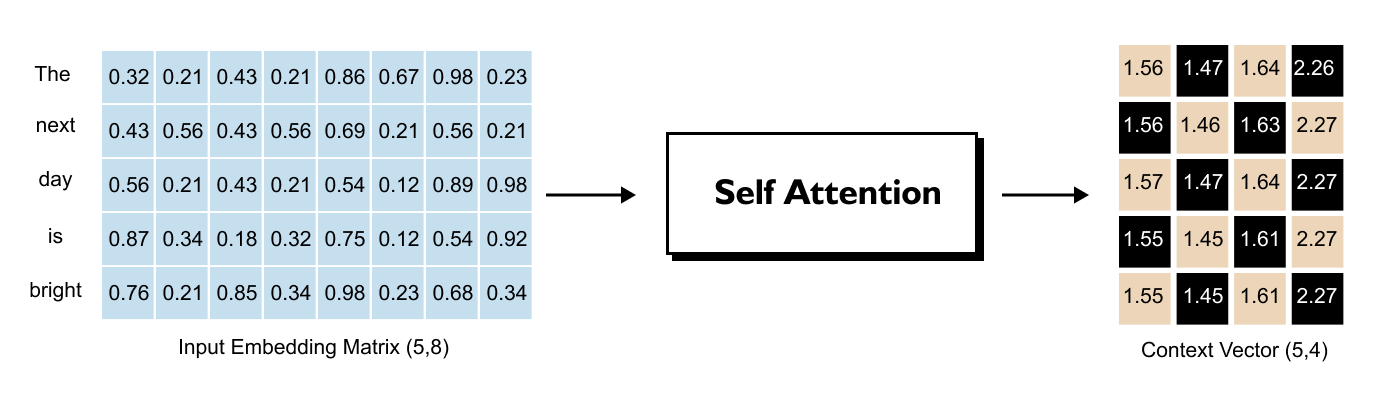

1.9 Understanding the Input Embedding Matrix

Figure 1.43: For each word, the word embedding and positional embedding are summed to produce the input embedding vector.

As we’ve already seen, for each word in our sentence, we have an embedding vector combined with positional information, that is, the word embedding plus the positional embedding that tells us where the word sits in the sequence. The sum of these two gives us our input embedding vector for each word.

When we stack all these input embedding vectors together for an entire sentence, we get what’s called the input embedding matrix.

Let’s say we’re working with the sentence

“The next day is bright”

that’s five words. Our input embedding matrix would have dimensions (5, 8).

Figure 1.44: The input embedding matrix for the sentence “The next day is bright” has shape (5, 8): five rows (one per word) and eight columns (the embedding dimension).

What do these numbers mean?

The 5 rows come from having 5 words. Simple enough. Each word gets its own row in the matrix. If we had ten words, we’d have ten rows. The number of rows always matches the number of words in our sequence.

The 8 columns represent the dimensionality we’ve chosen for our embeddings. Each word is represented as an 8-dimensional vector, eight numbers that capture its meaning. This dimension is something we decide when building our model. It’s a design choice.

In GPT-2, for instance, the embedding dimension varies: 768 for GPT-2 Small, all the way up to 1,600 for GPT-2 XL. Larger dimensions can capture more nuanced information, but they also require more computation.

The Problem We’re Solving

So here we are with our input embedding matrix. Each word has its 8-dimensional vector. But here’s what’s missing: these vectors exist in isolation. They don’t know about each other.

Look at the word “day” in our sentence “The next day is bright.” Its input embedding vector is just a generic representation of the word “day.” It doesn’t know it should pay attention to “bright.” It doesn’t know that “next” right before it gives it temporal context. It has no idea how much importance it should give to “the” or “is” or any other word in the sentence.

This is exactly why we need to transform input embeddings into context vectors. We need to integrate information from all the other words. We need each word’s representation to reflect not just what it is, but what it means in this particular sentence, surrounded by these particular neighbors. That’s the journey we’re about to take.

Before we can perform any attention calculations, we must first define our input sequence and its corresponding embedding matrix. We will use the PyTorch library to create a tensor that holds this information for our example sentence: “The next day is bright”. Each word is represented by an 8-dimensional vector

Listing 1.1 Defining the input embedding matrix

import torch

words = [’The’, ‘next’, ‘day’, ‘is’, ‘bright’]

inputs = torch.tensor([

[0.32, 0.21, 0.43, 0.21, 0.86, 0.67, 0.98, 0.23], # The

[0.43, 0.56, 0.43, 0.56, 0.69, 0.21, 0.56, 0.21], # next

[0.56, 0.21, 0.43, 0.21, 0.54, 0.12, 0.89, 0.98], # day

[0.87, 0.34, 0.18, 0.32, 0.75, 0.12, 0.54, 0.92], # is

[0.76, 0.21, 0.85, 0.34, 0.98, 0.23, 0.68, 0.34] # bright

], dtype=torch.float32)

print(”Input Embedding Matrix:”)

print(inputs)

print(”\nMatrix Shape:”)

print(inputs.shape)Running the previous code prints the following output

Input Embedding Matrix:

tensor([

[0.3200, 0.2100, 0.4300, 0.2100, 0.8600, 0.6700, 0.9800, 0.2300], [0.4300, 0.5600, 0.4300, 0.5600, 0.6900, 0.2100, 0.5600, 0.2100],

[0.5600, 0.2100, 0.4300, 0.2100, 0.5400, 0.1200, 0.8900, 0.9800],

[0.8700, 0.3400, 0.1800, 0.3200, 0.7500, 0.1200, 0.5400, 0.9200],

[0.7600, 0.2100, 0.8500, 0.3400, 0.9800, 0.2300, 0.6800, 0.3400]

])

Matrix Shape:

torch.Size([5, 8])The output shows our input object is a tensor with a shape of torch.Size([5,8]). This confirms we have a matrix with 5 rows, one for each of our tokens, and 8 columns, representing the 8-dimensional embedding vector for each token. This matrix is the starting point for the self-attention mechanism, but as noted, these vectors exist in isolation and lack any contextual information from their neighbors.

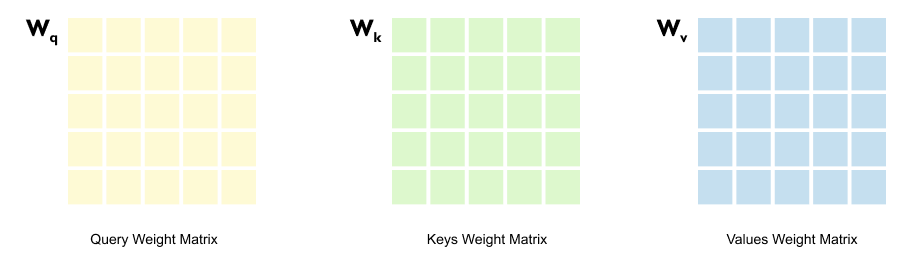

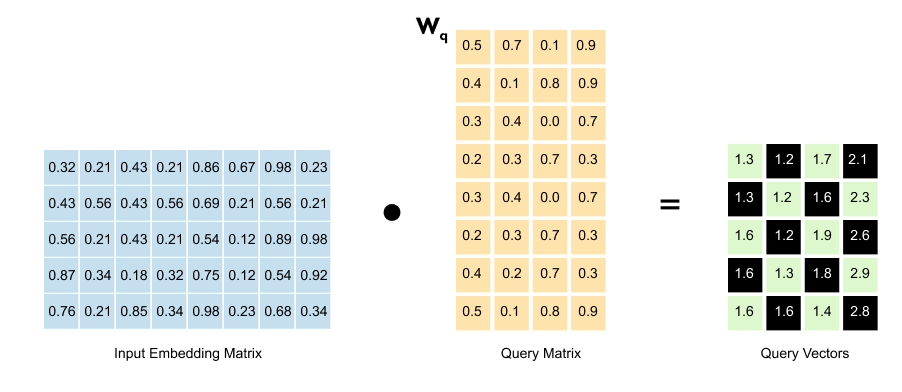

1.10 From Embeddings to Queries, Keys & Values

Here’s where we meet the heart of self-attention: three trainable weight matrices called Queries, Keys, and Values.

Figure 1.45: The input embedding matrix is multiplied by three separate weight matrices Wq, Wk, and Wv to produce Query, Key, and Value matrices

You might wonder, why three? Why not just use the input embeddings directly?

The answer lies in a fundamental principle of neural networks: they’re universal function approximators. They can learn complex patterns if we give them the right structure. So instead of trying to hand-code how words should relate to each other, we do something smarter.

We initialize three weight matrices with random values at the start. Then we let the training process figure it out. During training, these matrices learn how to transform embeddings in ways that capture meaningful relationships. The Query matrix learns to create vectors that “ask questions.” The Key matrix learns to create vectors that “answer” whether they’re relevant. And the Value matrix? It learns what information should actually be passed along once we know which words matter. We’re not telling the model how attention should work, we’re giving it the tools to learn it on its own.

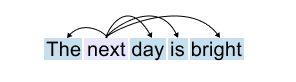

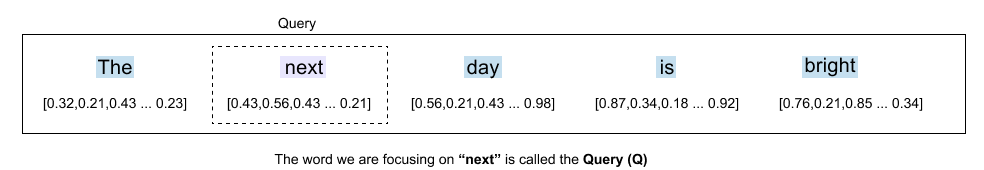

Let’s understand this by taking one example

Figure 1.46: The sentence “The next day is bright” with the word “next” highlighted as the current focus of the attention mechanism.

When we focus on a specific word say, “next”.

Figure 147: The word “next” acts as the Query, asking how much attention it should pay to each other word in the sentence.

we need to decide how much attention it should pay to all the other words in the sentence. This is where our terminology becomes important. The word we are focusing on (“next”) is called the Query (Q).

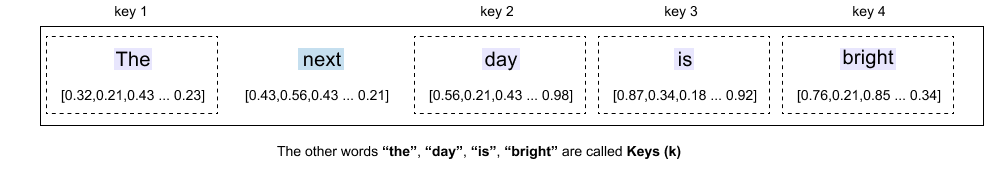

Figure 148: The other words, “the,” “day,” “is,” “bright”, serve as Keys that the query evaluates for relevance.

The other words in the sentence, “the,” “day,” “is,” “bright,” are called Keys (K). These are the words that the query will evaluate. They’re potential sources of information.

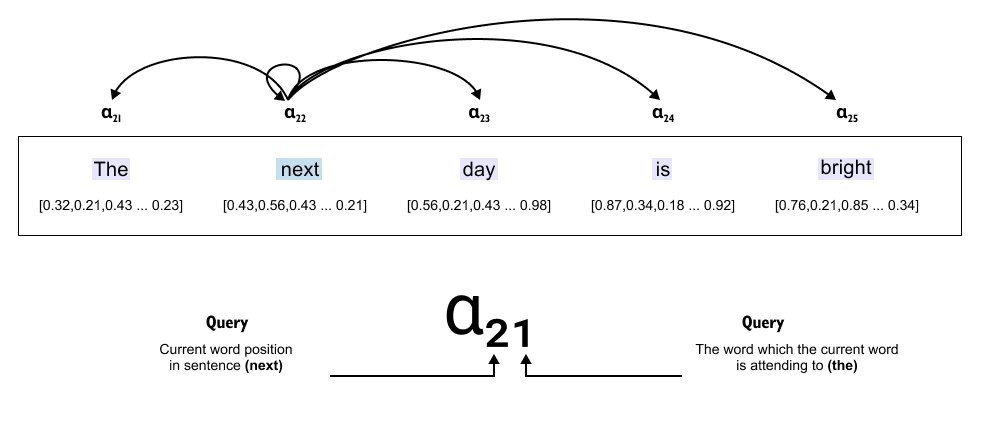

Figure 149: Attention scores α between the query word “next” and all keys. Each score quantifies how strongly “next” should attend to each other word.

Now comes the crucial part: the attention score (α). This score determines how much importance “next” should give to each of these other words. Should “next” pay more attention to “day” (the word right after it) or to “bright” (further away)? The attention scores tell us exactly this.

So “next” uses these attention scores to focus on other words in the sentence, weighing some as more important, others as less so. This is how a word builds its understanding of context.

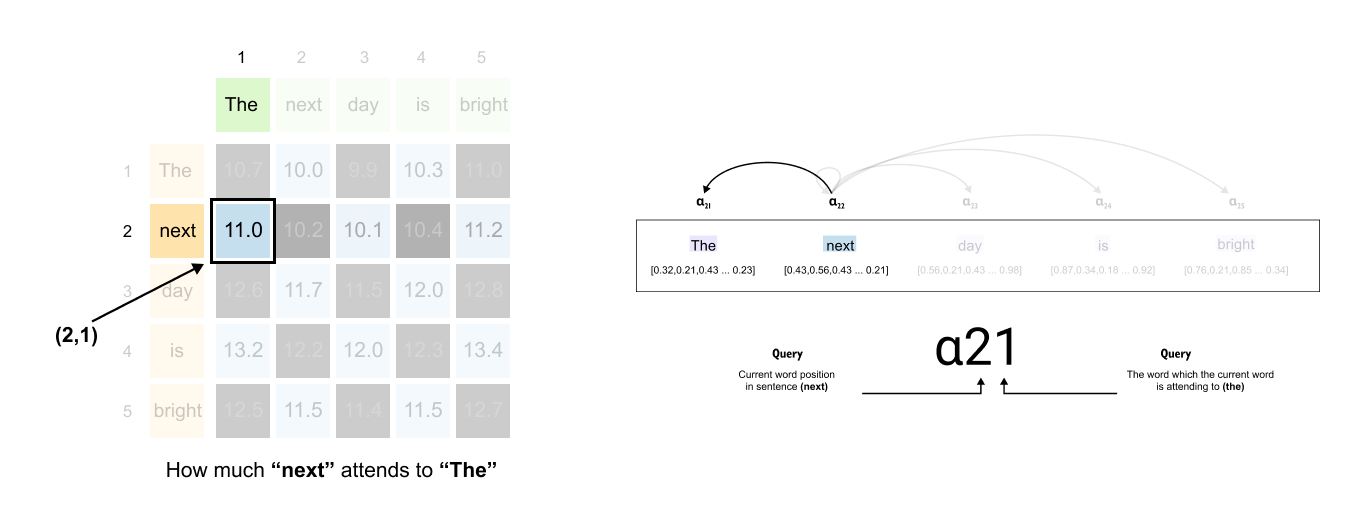

For example, the attention score α₂₁ means:

“Next” (X₂) is attending to “The” (X₁).

The first 2 represents “next” (position 2 in the sentence).

The second 1 represents “the” (position 1 in the sentence).

The goal of self-attention is to take these attention scores (α values) and use them to modify the original input embeddings, creating context vectors that contain richer information.

Input Embedding (X₂ - “next”): Just represents the word itself.

Context Vector (C₂ - “next”): Now contains information from all relevant words around it, based on attention scores.

Instead of just knowing “next” as an isolated word, the context vector of “next” now understands:

How much “next” relates to “day” (α₂₃)

How much “next” relates to “the” (α₂₁)

How much “next” relates to “is” (α₂₄)

This transformation from input embeddings to context vectors is what makes self-attention so powerful, it helps the model understand relationships between words, not just individual tokens.

Context Vector is an enriched embedding vector. It combines information from all other input elements

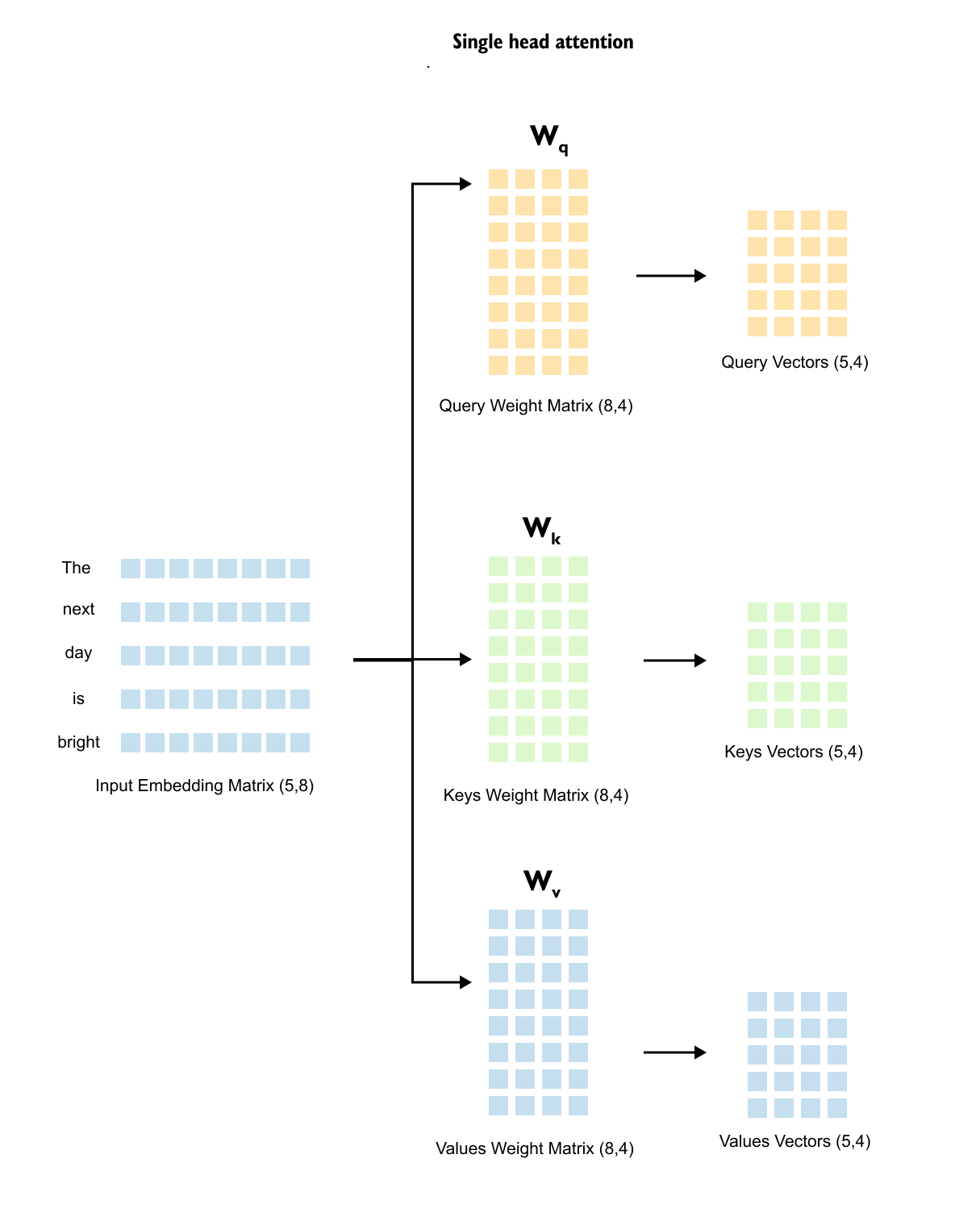

The Dimensions of Query, Key, and Value Matrices

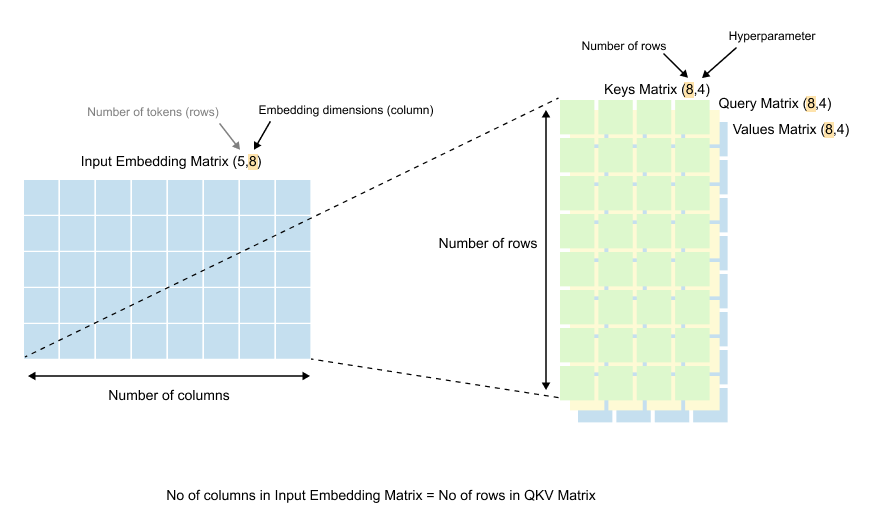

Now let’s talk about the actual shape and size of these weight matrices. Understanding their dimensions is crucial to grasping how self-attention works mathematically.

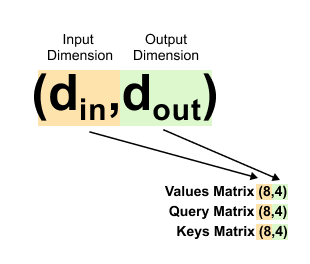

Figure 1.50: Dimensions of the weight matrices: Wq, Wk, and Wv each have shape (d_in, d_out), where din matches the embedding dimension and d_out is a design choice.

If we look at the dimensions of the Query, Key, and Value matrices (Wq, Wk, and Wv), we’ll notice something interesting.

The number of rows in each of these matrices equals the number of columns in our input embedding matrix. Remember, our input embedding matrix has dimensions (5, 8), where 8 is our embedding dimension. So our weight matrices will have 8 rows.

The number of columns in these weight matrices, however, can be anything we choose. This is a design decision.

Figure 1.51: The terminology d_in and d_out: din = 8 is the input embedding dimension, and d_out is the chosen output dimension for queries, keys, and values.

When coding language models like GPT-2 or GPT-3, we use specific terminology for these dimensions:

d_in (Input Dimension): The dimension of our input embeddings. In our example, this is 8.

d_out (Output Dimension): The dimension we want for our query, key, and value vectors. This is the number of columns in our weight matrices.

Here’s an important point: you can choose any value for d_out. In practice, it’s often set equal to d_in for simplicity. So if our input dimension is 8, we might set the output dimension to 8 as well. But we don’t have to. In our example, we’re using d_out = 4. Why? To demonstrate that the output dimension is flexible. You have the freedom to choose what works best for your model.

Listing 1.2: Extracting a Token Embedding and Setting Dimensions

x_2 = inputs[1] # embedding for “next”

d_in = inputs.shape[1] # input dimension

d_out = 4 # dimension for Q, K, V in this toy example

print(x_2)

print(d_in)

print(d_out)

Here you select the second row of the input matrix, which is the 8 dimensional embedding for the word “next”. The variable d_in confirms that the embedding dimension is 8, matching the theory. The variable d_out is set to 2, which means each query key and value vector will live in a 2 dimensional space in the following examples. In real models d_out is much larger, but using 2 keeps the printed tensors readable.

Output

tensor([0.4300, 0.5600, 0.4300, 0.5600, 0.6900, 0.2100, 0.5600, 0.2100])

8

4

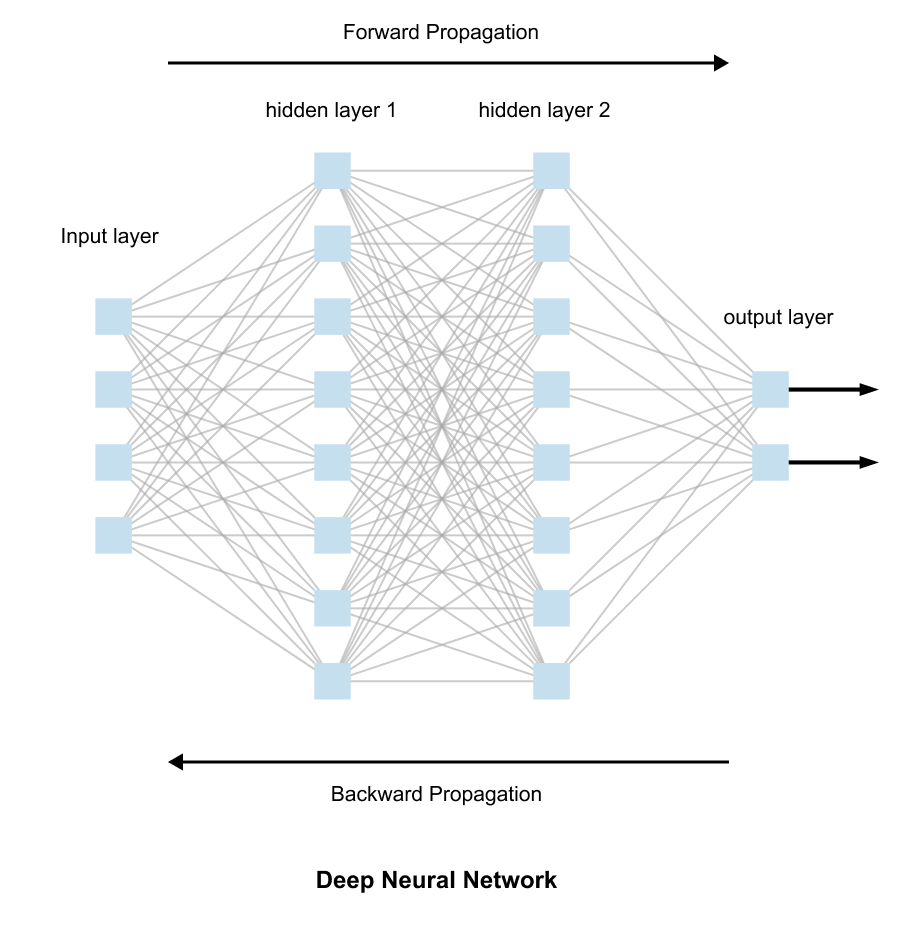

How These Matrices Learn

Figure 1.52: Weight matrices are initialized with random values and updated during training through backpropagation, learning to produce meaningful query, key, and value representations.

At the beginning, all the values in these weight matrices are initialized randomly. They start with no knowledge of language or attention patterns. But here’s where the magic of training comes in.

As we train the model using backpropagation, these random values gradually update themselves. The matrices learn which transformations help the model understand language better.

They learn how to create query vectors that ask the right questions, key vectors that identify relevant information, and value vectors that carry the right content.

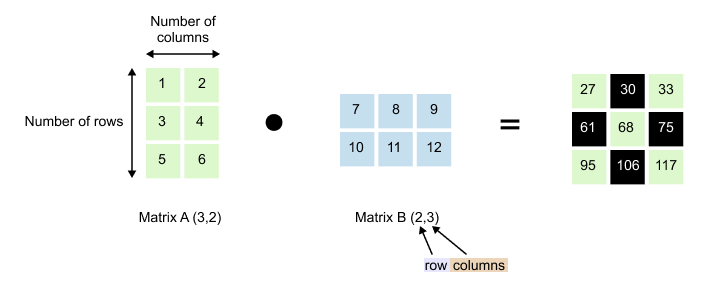

1.11 A Quick Note on Matrix Multiplication

Before we dive into multiplying our embedding matrices, let’s make sure we’re all on the same page about how matrix multiplication actually works. If you already know this, feel free to skip ahead. But if matrices feel a bit fuzzy, stick with me for a moment.

Figure 1.53: Matrix multiplication: Matrix A of shape (3, 2) multiplied by Matrix B of shape (2, 3) produces a result of shape (3, 3). The inner dimensions must match.

We have Matrix A with dimensions (3, 2) and Matrix B with dimensions (2, 3). Notice something important: the number of columns in Matrix A (which is 2) matches the number of rows in Matrix B (also 2). This isn’t a coincidence. For matrix multiplication to work, these inner dimensions must match.

When we multiply them, we get a result with dimensions (3, 3). The outer dimensions survive: 3 rows from Matrix A and 3 columns from Matrix B.

Figure 1.54: Element-wise computation in matrix multiplication: each output entry is the dot product of a row from the first matrix and a column from the second matrix.

To compute each element in the result, you take a row from the first matrix and pair it with a column from the second matrix. Multiply corresponding elements together, then sum them up.

Example: To find the element at position (1, 1):

Take row 1 from Matrix A: [1, 2]

Take column 1 from Matrix B: [7, 10]

Calculate: (1 × 7) + (2 × 10) = 7 + 20 = 27

Another example: For position (2, 1):

Take row 2 from Matrix A: [3, 4]

Take column 1 from Matrix B: [7, 10]

Calculate: (3 × 7) + (4 × 10) = 21 + 40 = 61

You repeat this pattern for every position. Row meets column, multiply and sum. That’s the entire process.

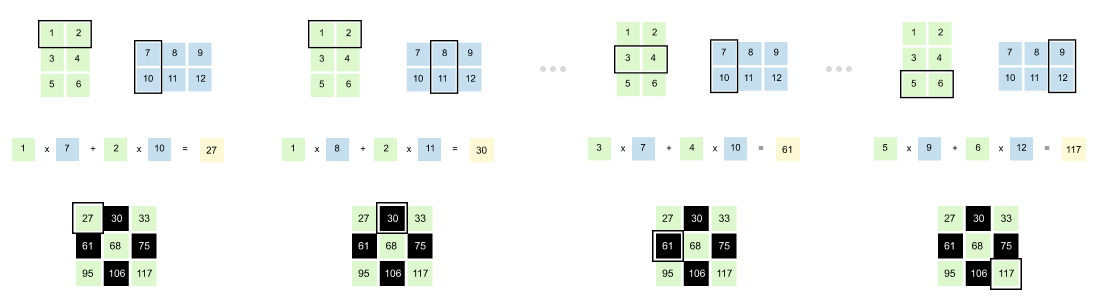

[Step 1] Creating Query, Key, and Value Vectors

The first step in converting our input embedding matrix into context embeddings is straightforward: matrix multiplication. Let’s walk through this process carefully, starting with how we create query vectors.

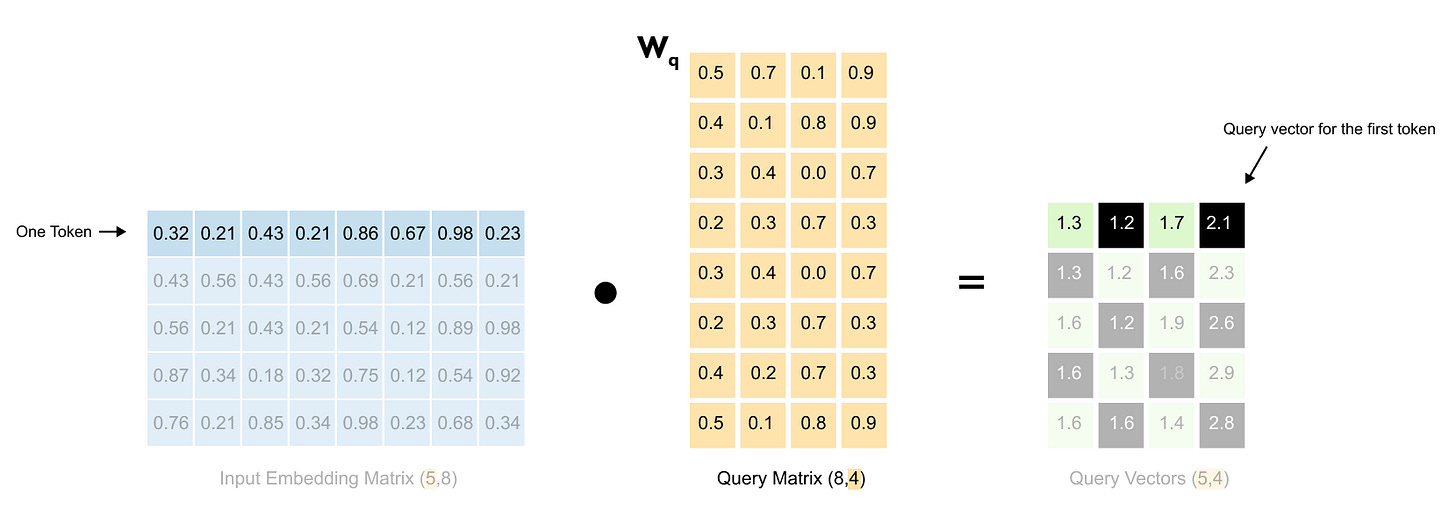

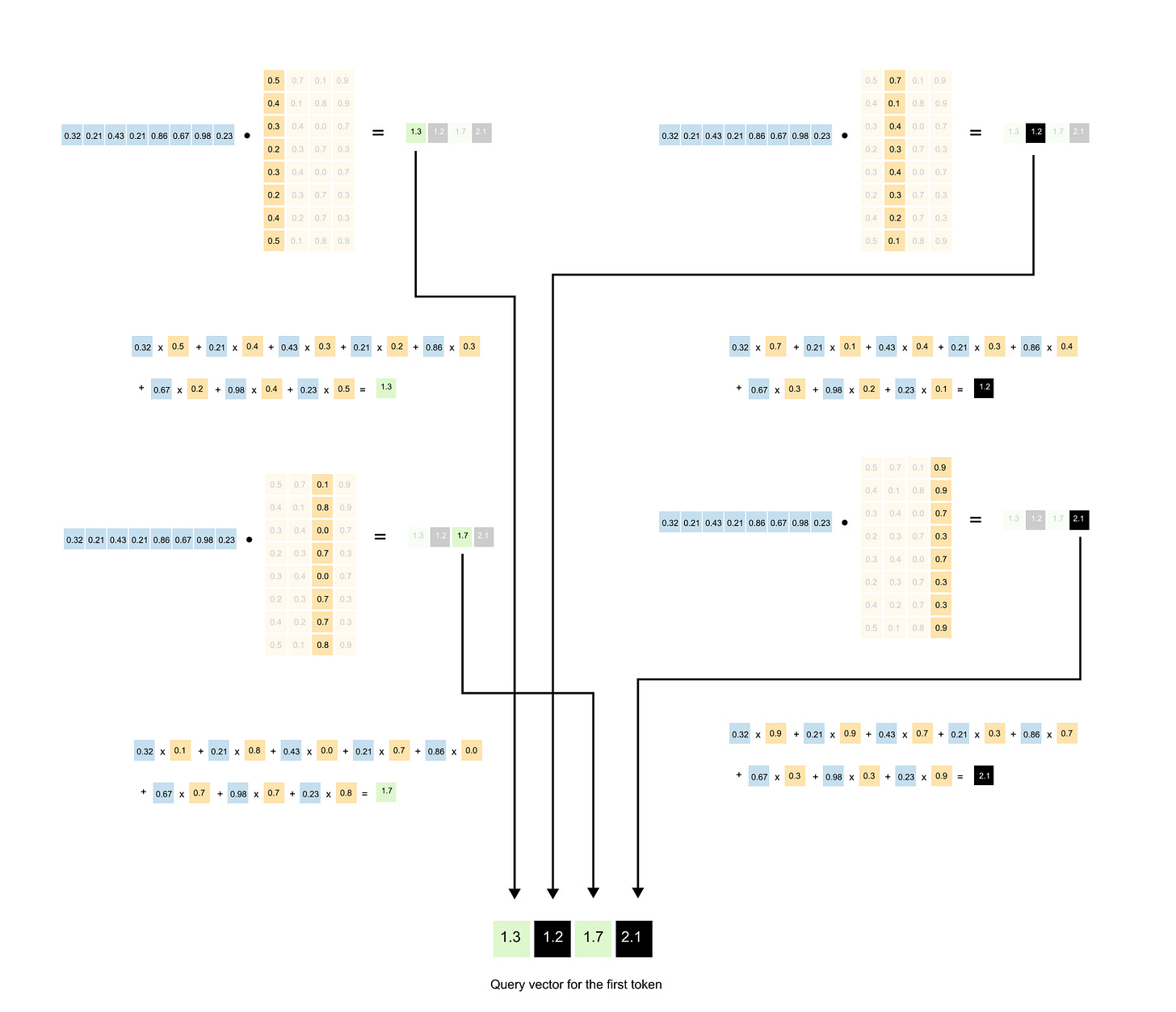

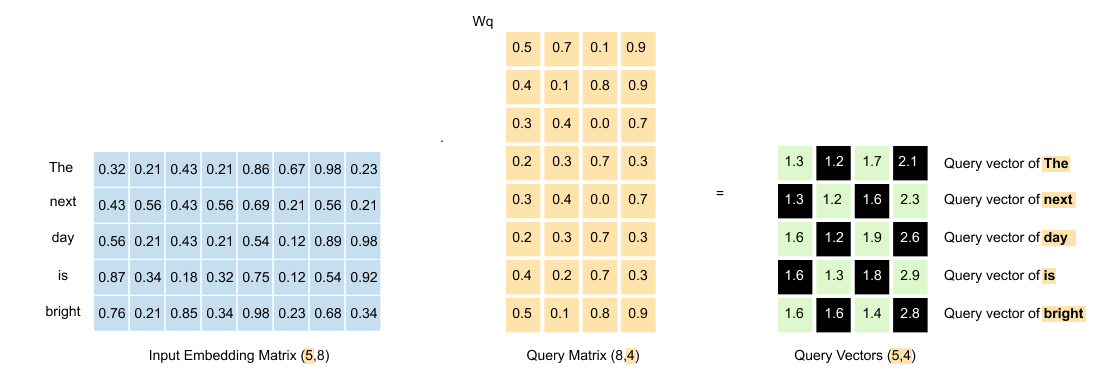

Figure 1.55 The input embedding matrix (5,8) is multiplied by the query weight matrix W_q (8,4) to produce the query matrix (5,4).

We take our input embedding matrix and multiply it by the query weight matrix (W_q). This transformation gives us our query vectors.

Figure 1.56: Detailed view of the matrix multiplication: each 8-dimensional word embedding is projected through the weight matrix to produce a 4-dimensional query vector

Each row of the input matrix represents one word with its 8-dimensional embedding.

Figure 1.57: Step-by-step computation showing how one row of the input matrix multiplied by W_q produces one row of the query matrix.

When we multiply this row by the weight matrix, we get a new row in the output, a 4-dimensional query vector for that word. This happens for all five words simultaneously.

Figure 1.58: The resulting query matrix: five words now have 4-dimensional query vectors, transformed from the original 8-dimensional embeddings.

The result? A query matrix where each of our five words now has its own query vector, transformed from 8 dimensions down to 4.

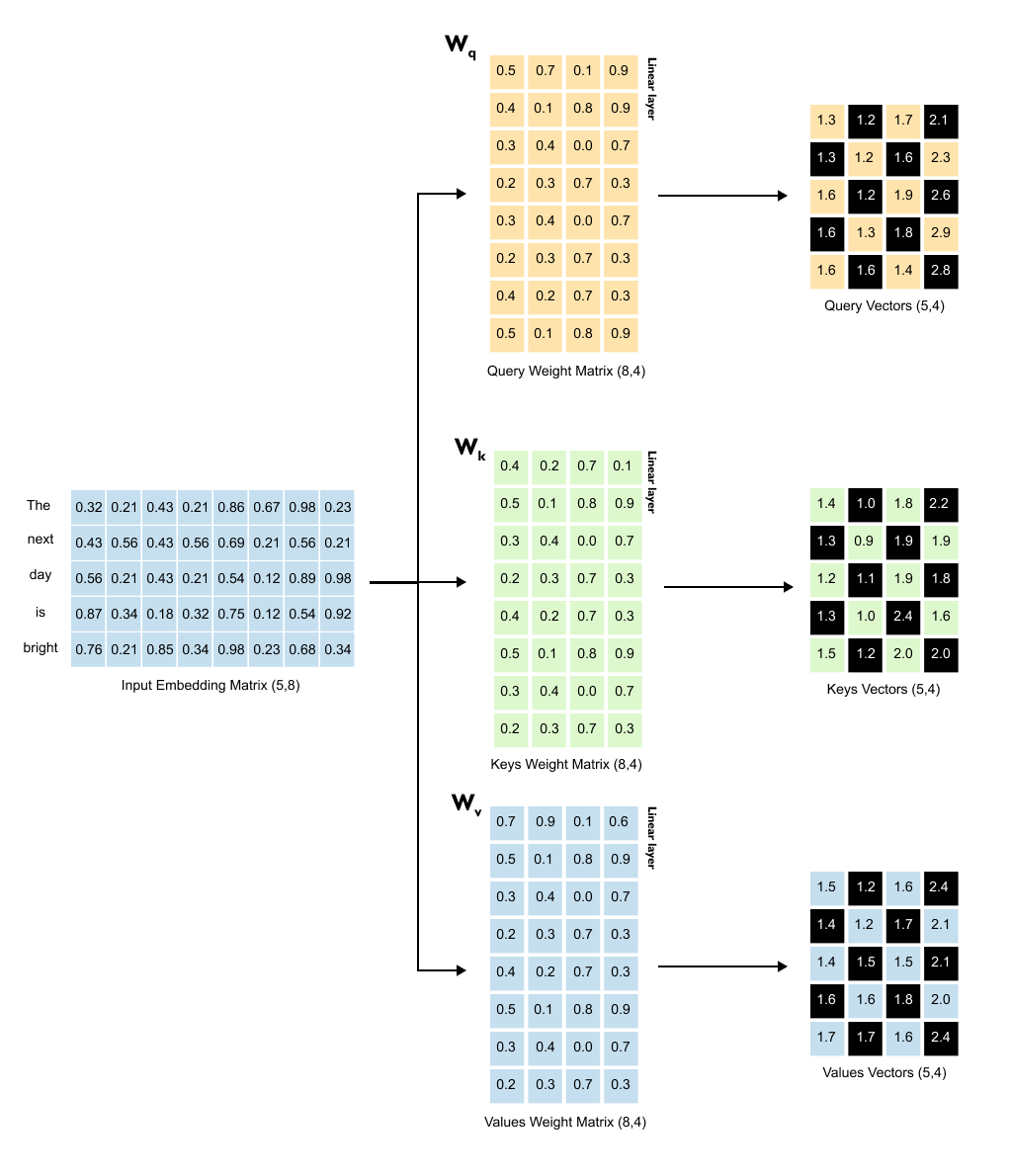

The Complete Picture

Figure 1.59: All three projections in parallel: the input embedding matrix is multiplied by W_q, W_k, and W_v simultaneously to produce Query, Key, and Value matrices, each of shape (5, 4)

Creating the query vectors is just the beginning. The same transformation process happens two more times, each with its own weight matrix, all operating in parallel.

For the key vectors, we multiply our input embedding matrix by the key weight matrix (W_K). Same dimensions, same process. Each word gets its own key vector.

For the value vectors, we multiply the input embedding matrix by the value weight matrix (W_V). Each word now has a value vector too.

Here’s something important to recognize: we’ve moved from an 8-dimensional space to a 4-dimensional space. More significantly, we’ve moved into a different kind of space altogether. We’re no longer dealing with input embeddings, those static representations of words. We’re now working with query, key, and value vectors. Each lives in its own transformed space, optimized for a specific purpose in the attention mechanism.

This might seem like an odd detour. Why transform our embeddings at all? Why not just work with them directly?

This trick, transforming data into different spaces is fundamental to deep learning, and it’s powerful for a simple reason: sometimes the patterns we need aren’t visible in the original data. Think about it this way. In computer vision, early systems used hand-crafted features like edges and corners. Then convolutional neural networks came along and learned to discover their own features automatically, finding patterns humans never thought to look for. That’s what’s happening here. We’re not stuck with the fixed relationships in our input embeddings. Instead, we let the model learn, through training, what transformations actually help it understand language.

Think of it like passing our input through three different lenses simultaneously. Each lens, each weight matrix transforms the same input embeddings in a different way, extracting different aspects of meaning. When all three transformations are complete, we have three new matrices sitting side by side, all sharing the same dimensions of (5, 4). These three matrices are now ready for the next step in the attention mechanism. The queries and keys will interact to figure out who should pay attention to whom. But that’s a story for the next section.

Listing 1.3 Initializing Query, Key, and Value Weight Matrices

torch.manual_seed(123)

W_query = torch.nn.Parameter(torch.randn(d_in, d_out), requires_grad=False)

W_key = torch.nn.Parameter(torch.randn(d_in, d_out), requires_grad=False)

W_value = torch.nn.Parameter(torch.randn(d_in, d_out), requires_grad=False)

print(”W_query:”)

print(W_query)

print(”\nW_key:”)

print(W_key)

print(”\nW_value:”)

print(W_value)

Output

W_query:

Parameter containing:

tensor([[ 0.2961, 0.5166, -0.0973, 0.2340],

[ 0.2517, 0.6886, 0.0451, -0.4128],

[ 0.0740, 0.8665, 0.3210, 0.0185],

[ 0.1366, 0.1025, -0.2314, 0.5642],

[ 0.1841, 0.7264, -0.1035, 0.3399],

[ 0.3153, 0.6871, 0.2478, -0.1520],

[ 0.0756, 0.1966, 0.5142, 0.0813],

[ 0.3164, 0.4017, -0.0879, 0.2904]])

W_key:

Parameter containing:

tensor([[ 0.1186, 0.8274, 0.1040, -0.3055],

[ 0.3821, 0.6605, -0.2103, 0.1428],

[ 0.8536, 0.5932, -0.1449, 0.3170],

[ 0.6367, 0.9826, 0.2553, -0.0872],

[ 0.2745, 0.6584, 0.0342, 0.5051],

[ 0.2775, 0.8573, -0.2984, 0.1907],

[ 0.8993, 0.0390, 0.1206, 0.2843],

[ 0.9268, 0.7388, -0.0721, 0.3419]])

W_value:

Parameter containing:

tensor([[ 0.7179, 0.7058, -0.1630, 0.3310],

[ 0.9156, 0.4340, 0.0982, -0.2753],

[ 0.0772, 0.3565, 0.2056, 0.1468],

[ 0.1479, 0.5331, -0.0925, 0.2391],

[ 0.4066, 0.2318, 0.0194, 0.1844],

[ 0.4545, 0.9737, -0.3086, -0.0417],

[ 0.4606, 0.5159, 0.1274, 0.0219],

[ 0.4220, 0.5786, -0.0853, 0.3640]])

This code creates the three trainable weight matrices that turn input embeddings into query, key and value vectors.

The variable d_in is the size of each input embedding, here 8. The variable d_out is the size we want for the query, key and value vectors, here 4.

The tensors W_query, W_key and W_value are wrapped in torch.nn.Parameter, which tells PyTorch that these tensors are learnable weights. During training, gradient descent will update these matrices so that they learn useful transformations.

The shapes printed at the end confirm that each weight matrix has shape 8, 4, matching the description in the theory where the number of rows equals d_in and the number of columns equals d_out.

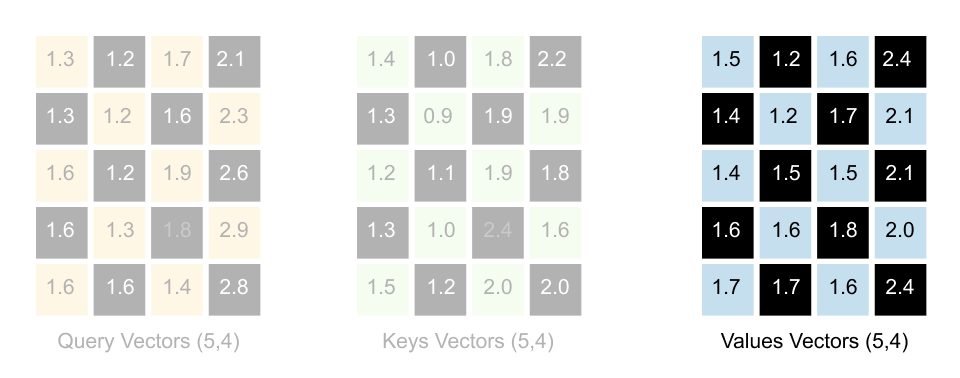

Listing 1.4: Computing Query, Key, and Value Vectors

queries = inputs @ W_query # shape: (5, 4)

keys = inputs @ W_key # shape: (5, 4)

values = inputs @ W_value # shape: (5, 4)

print(”queries.shape:”, queries.shape)

print(”keys.shape :”, keys.shape)

print(”values.shape :”, values.shape)

print(”\nqueries:”)

print(queries)

print(”\nkeys:”)

print(keys)

print(”\nvalues:”)

print(values)Output

queries.shape: torch.Size([5, 4])

keys.shape : torch.Size([5, 4])

values.shape : torch.Size([5, 4])

queries:

tensor([[ 0.8840, 2.0469, 0.3419, 0.5755],

[ 0.8723, 2.0443, 0.3241, 0.3917],

[ 0.9738, 1.9925, 0.3154, 0.7673],

[ 1.1051, 2.1175, 0.2772, 0.9102],

[ 0.9692, 2.5180, 0.2741, 0.8069]])

keys:

tensor([[ 2.2381, 2.7132, 0.0507, 0.8830],

[ 2.1564, 2.7927, -0.0064, 0.8684],

[ 2.6705, 2.7739, 0.0891, 1.0862],

[ 2.4087, 3.1074, 0.0683, 1.0651],

[ 2.6735, 3.1745, 0.0538, 1.2434]])

values:

tensor([[ 2.1213, 2.5079, -0.2830, 0.7693],

[ 2.0476, 2.1981, -0.2301, 0.4927],

[ 2.1971, 2.4733, -0.2806, 0.9121],

[ 2.4207, 2.5415, -0.3020, 0.9874],

[ 2.2625, 2.5902, -0.2714, 0.9625]])

Here we apply the three weight matrices to the full input matrix. Each row of inputs is an embedding for one word.

The matrix multiplication inputs @ W_query takes every word embedding and projects it into query space. The result is a query matrix with shape 5, 4, one query vector of length four for each of the five words. The same happens for keys and values.

This mirrors the explanation in the text that we now have three new matrices, each of size number of tokens, d_out. We are no longer working with raw input embeddings but with transformed representations tailored for searching, being searched and being blended.

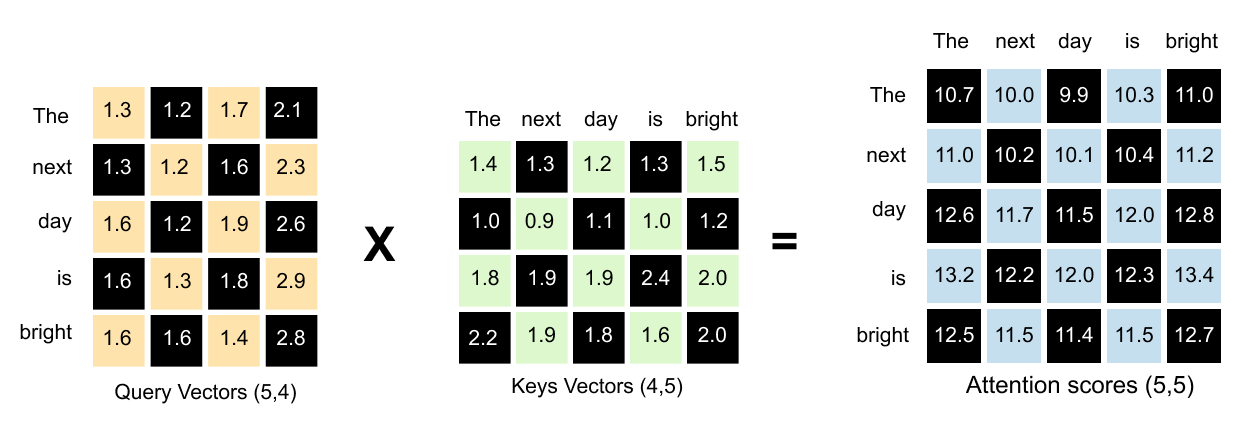

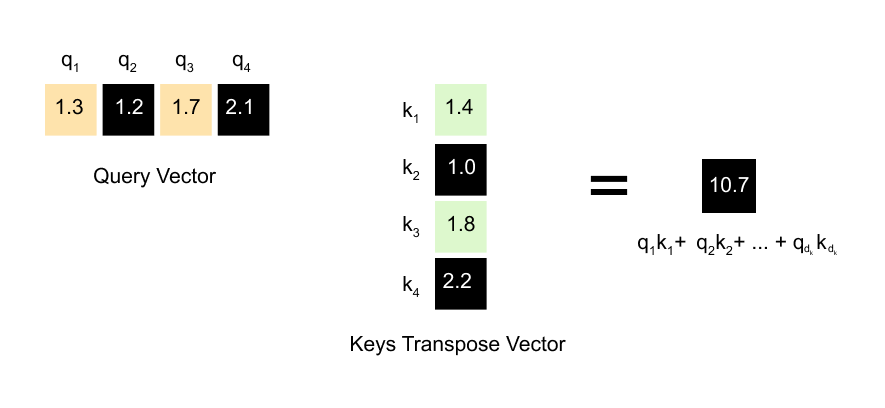

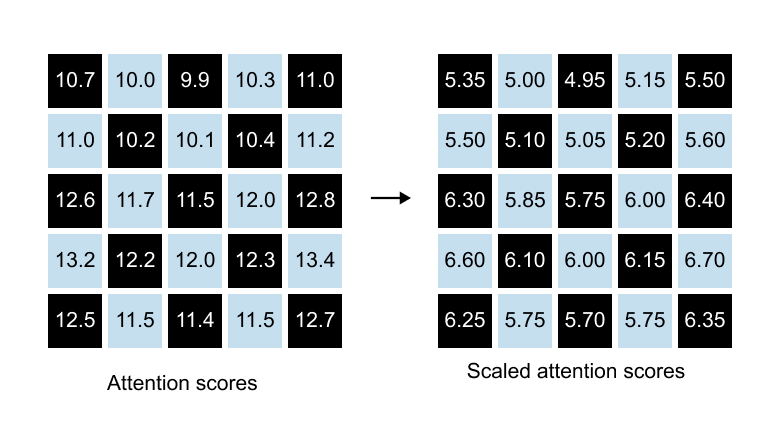

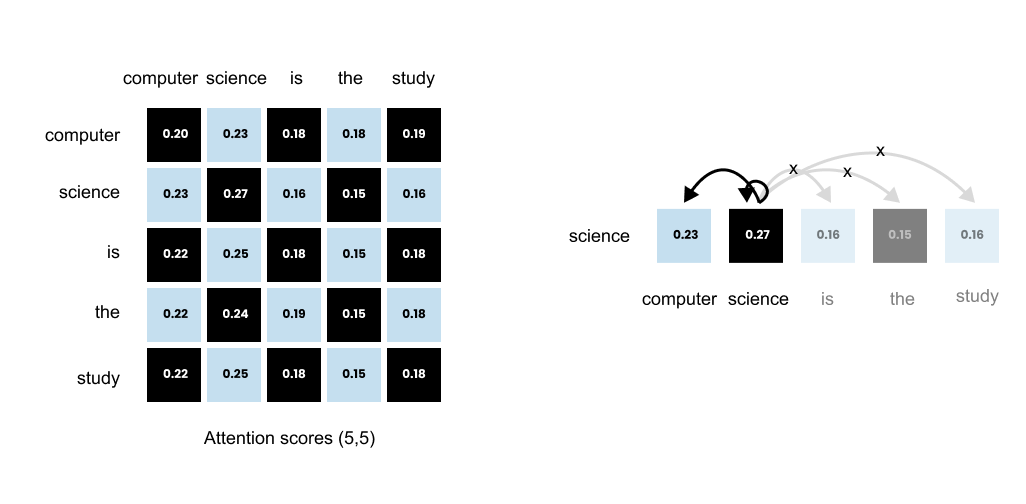

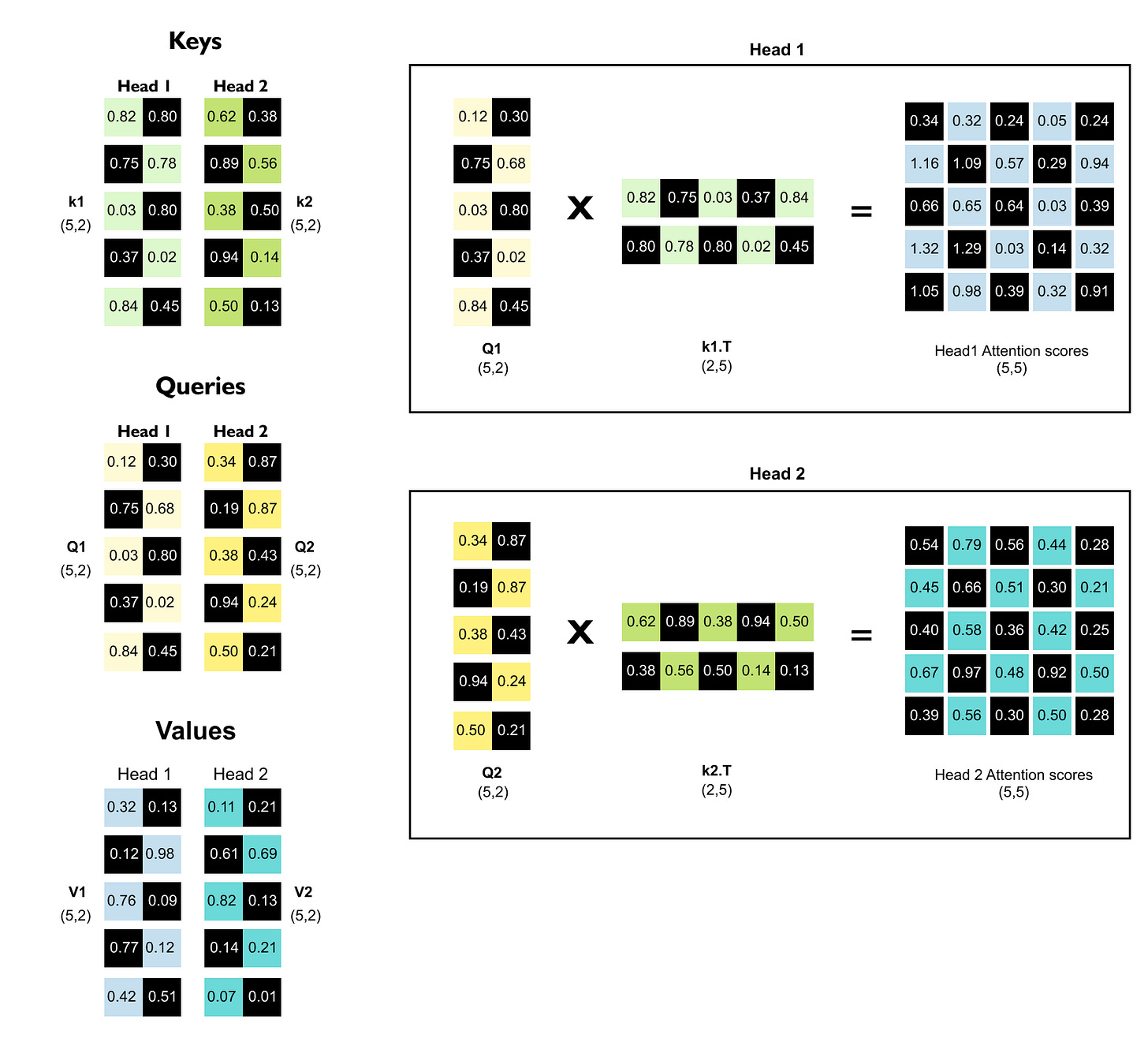

[Step 2] Computing Attention Scores

Now that we have our query, key, and value vectors, we’re ready for the heart of the attention mechanism: figuring out which words should pay attention to which other words. Remember, each word has a query vector and a key vector . When we compute the dot product between a query and a key, we get a number that represents how well they align. A high dot product means strong alignment, which translates to high attention. A low dot product means weak alignment, which means less attention.

Figure 1.60: The dot product between a query vector and a key vector produces a scalar attention score indicating how well they align.

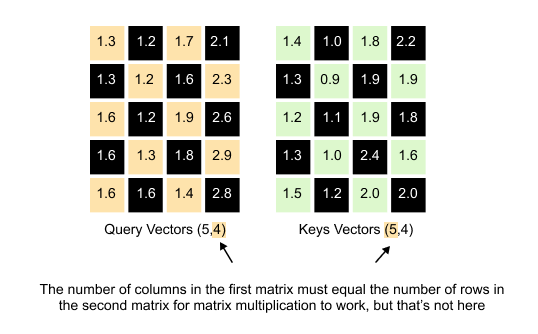

Here’s where we hit a small technical hurdle. We want to compute all these dot products at once using matrix multiplication. Our Query matrix has dimensions (5, 4) and our Keys matrix also has dimensions (5, 4). If we try to multiply them directly, Query × Keys, we run into a problem. For matrix multiplication to work, the number of columns in the first matrix must equal the number of rows in the second matrix. But Query has 4 columns and Keys has 5 rows. They don’t match. The multiplication simply won’t work. The solution of this problem is to transpose the Keys matrix.

A Quick Note on Matrix Transpose

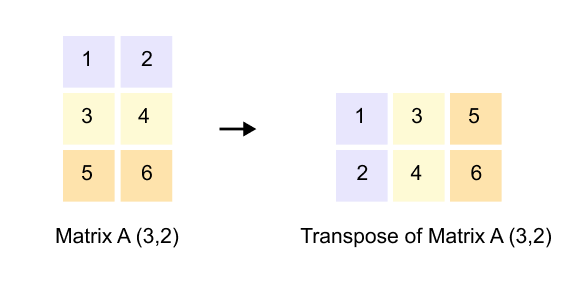

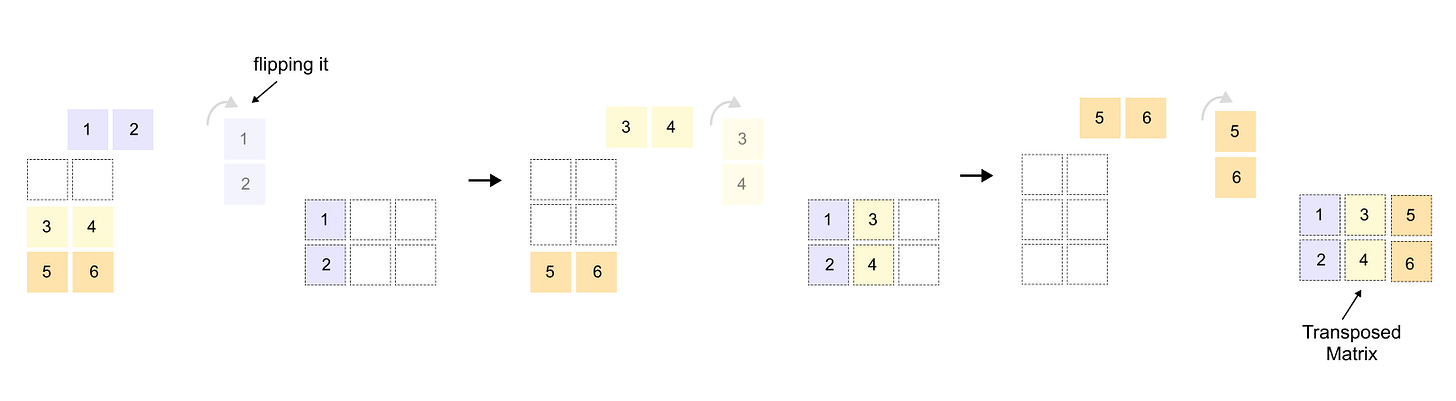

Figure 1.61: Matrix transpose: rows become columns and columns become rows, converting a (3, 2) matrix into a (2, 3) matrix.

If you’re already comfortable with matrix transpose, feel free to skip ahead to the next section. But if transpose feels unfamiliar or you want a quick refresher, stay with me for just a moment.

Figure 1.62: Transposing the Keys matrix from (5, 4) to (4, 5) so that it can be multiplied with the (5, 4) Query matrix.

When we transpose a matrix, we flip it along its diagonal. Rows become columns, and columns become rows. If you have a matrix with dimensions (3, 2), its transpose will have dimensions (2, 3). The first row of the original matrix becomes the first column of the transposed matrix. The second row becomes the second column, and so on. It’s like rotating the entire matrix 90 degrees and reflecting it. This simple operation is incredibly useful because it lets us align dimensions for matrix multiplication when they wouldn’t otherwise match.

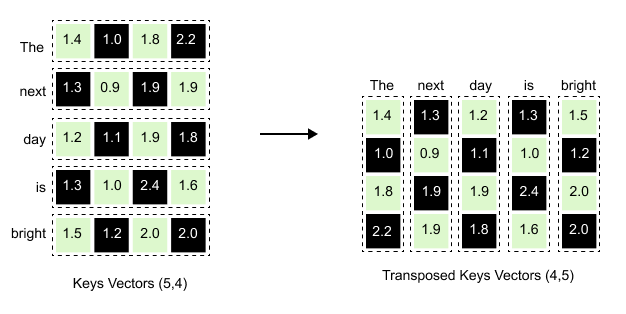

Figure 1.63: The Keys matrix transposed: each word’s key vector becomes a column, converting the (5, 4) matrix into (4, 5).

When we transpose the Keys matrix, each row becomes a column. Notice how the first row for “The” [1.4, 1.0, 1.8, 2.2] in the original Keys matrix becomes the first column [1.4, 1.0, 1.8, 2.2] reading downward in the transposed version. The same happens for every word, ”next” becomes the second column, “day” becomes the third column, and so on, transforming our (5, 4) matrix into a (4, 5) matrix ready for multiplication.

Figure 1.64: Multiplying Query (5, 4) by K_T (4, 5) produces the (5, 5) attention scores matrix, capturing every possible word-to-word relationship.

The result of the dot product of Query and Keys vectors is a (5, 5) attention scores matrix. This matrix captures every possible relationship between words.

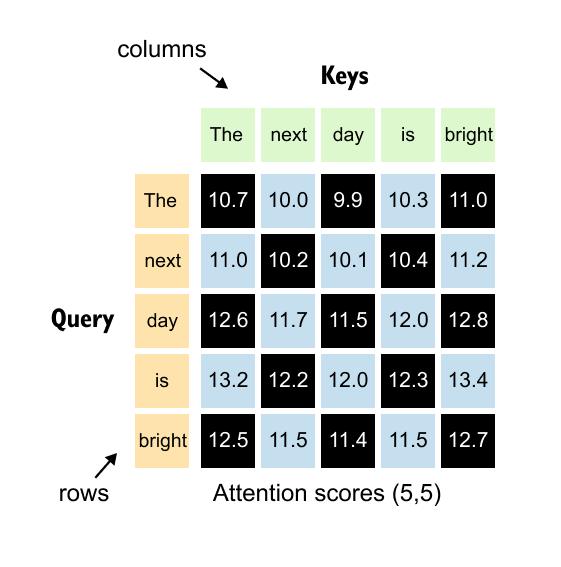

Interpreting the Attention Scores Matrix

Now that we have our attention scores matrix, let’s understand what it actually tells us.

Figure 1.65: The (5, 5) attention scores matrix: rows represent queries and columns represent keys. Entry (i, j) shows how much word i attends to word j.

Each number in this (5, 5) matrix represents how much one word should attend to another word. The key to reading this matrix is simple:

rows represent queries, and columns represent keys.

Let’s look at some concrete examples.

Figure 1.66: Reading the attention matrix: the entry at row 2, column 1 gives the attention score from “next” (query) to “The” (key).

Finding attention between “next” and “The”:

The word “next” is in position 2, so we look at row 2. The word “The” is in position 1, so we look at column 1. The value at position (2, 1) tells us how much “next” attends to “The.”

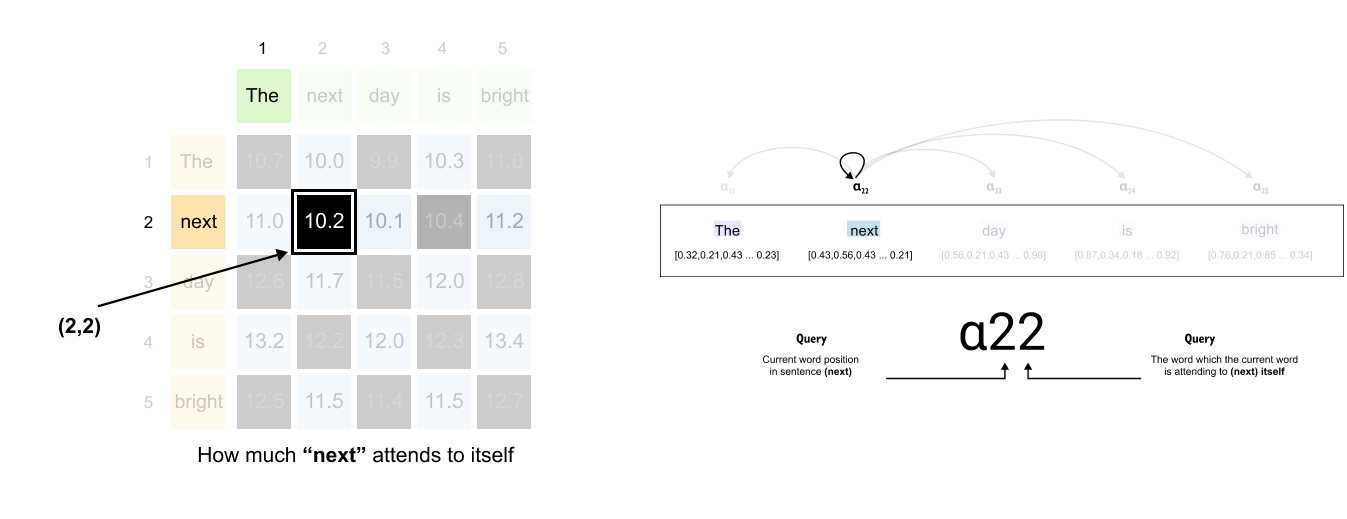

Figure 1.67: The entry at row 2, column 2 shows the self-attention score: how much “next” attends to itself.

Finding attention between “next” and itself:

Same word, but the pattern holds. Row 2 for “next” as the query, column 2 for “next” as the key. The value at position (2, 2) shows how much “next” attends to itself.

Here’s where it gets interesting. Each row tells a complete story about one word’s attention pattern.

Figure 1.68: Each row tells a complete story about one word’s attention pattern across all other words in the sentence.

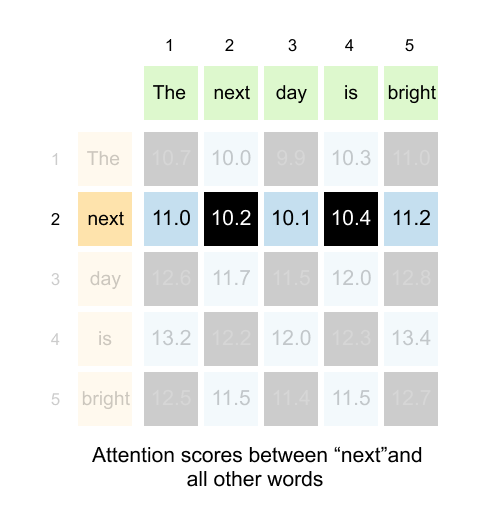

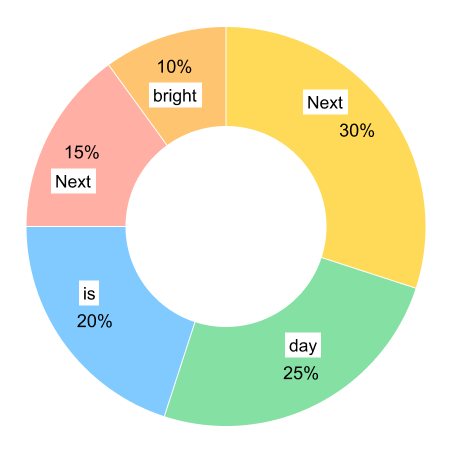

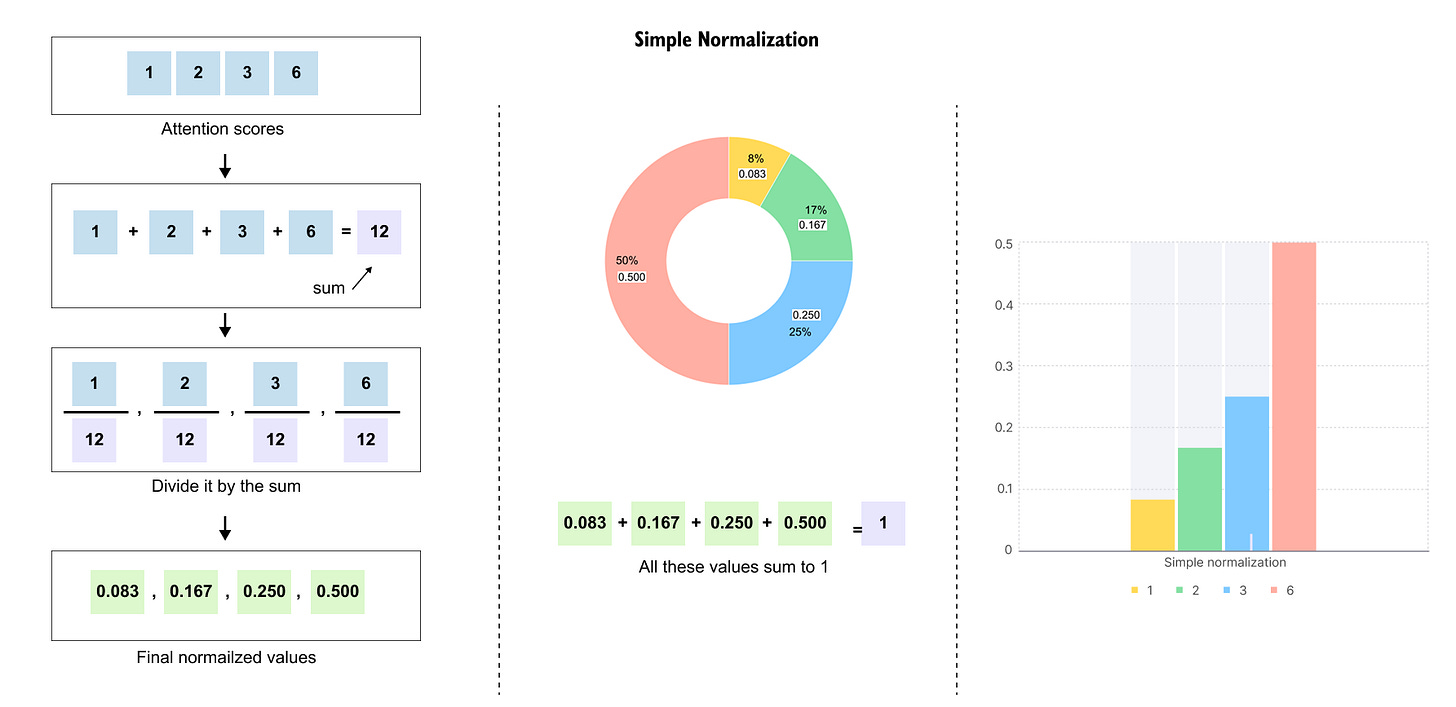

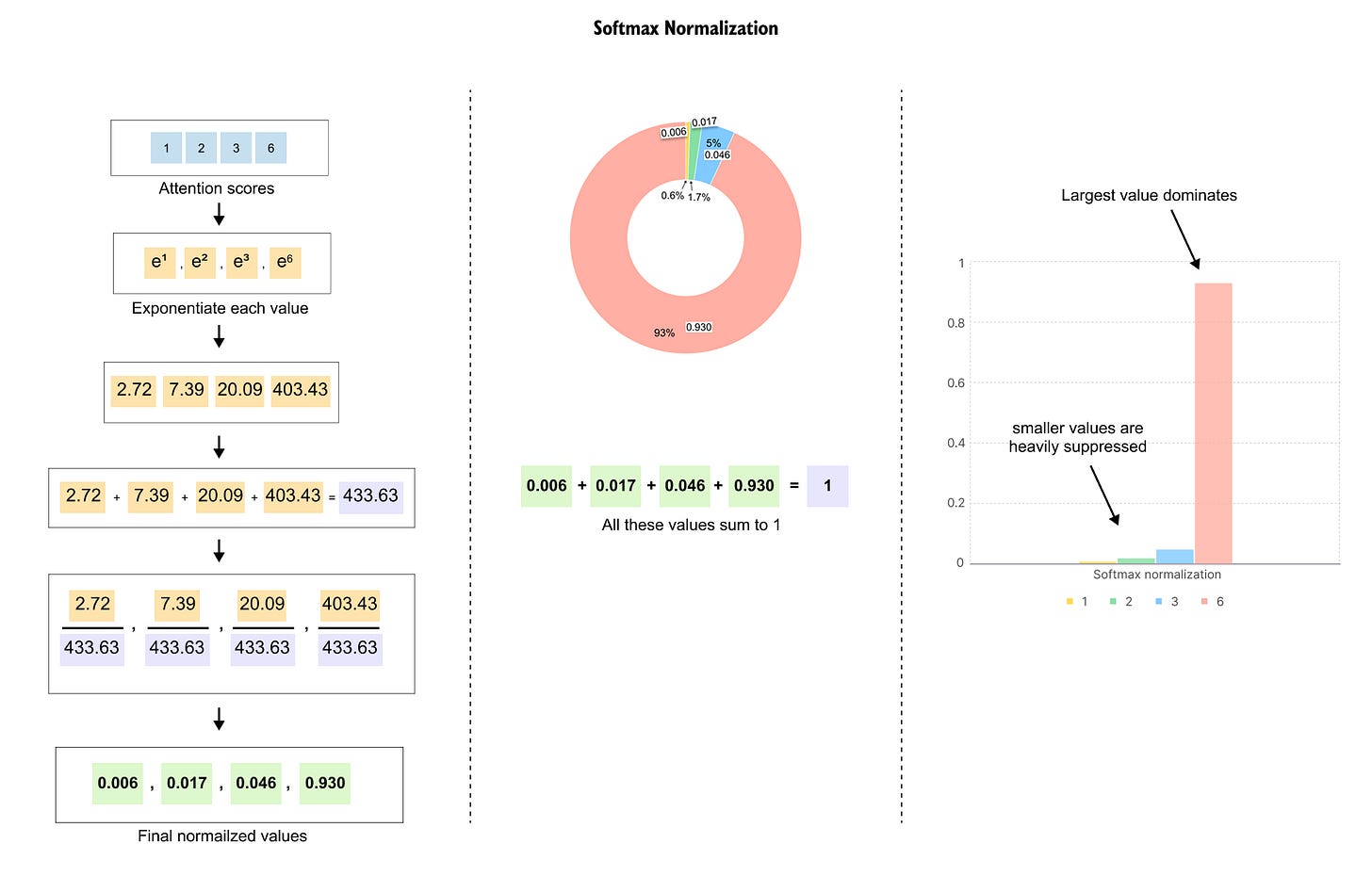

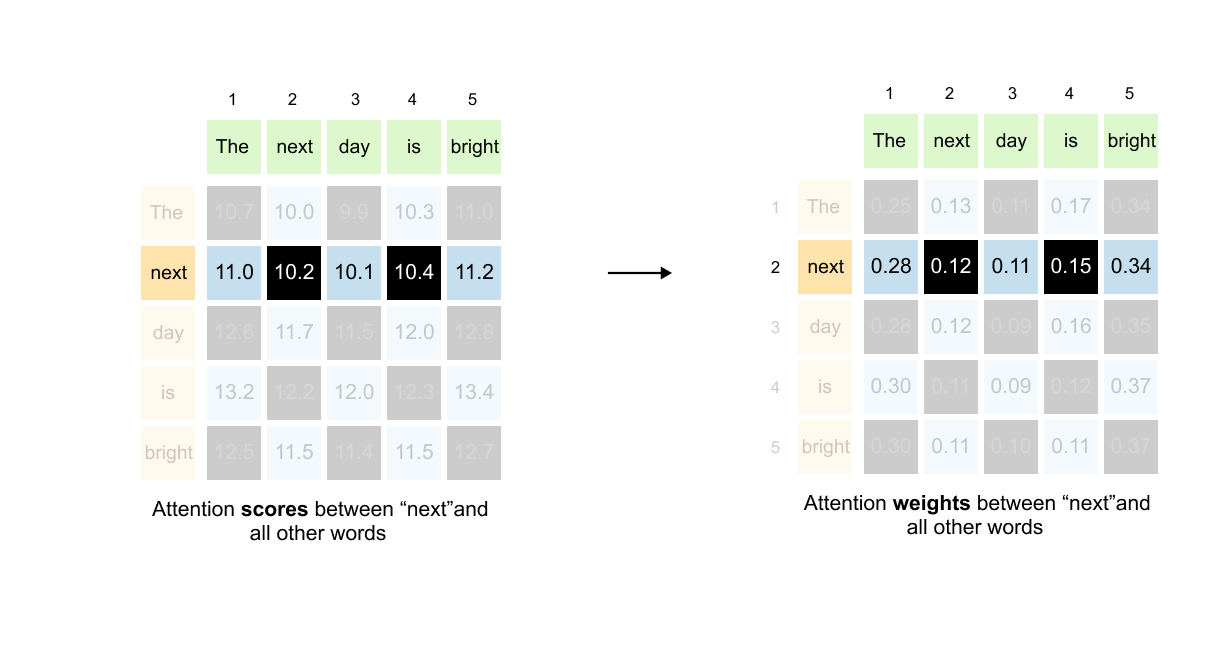

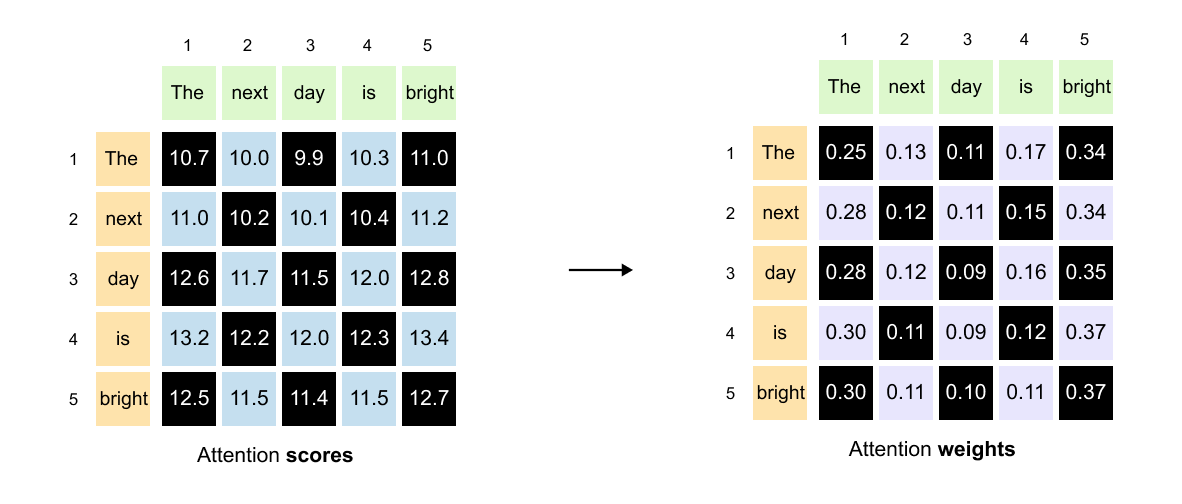

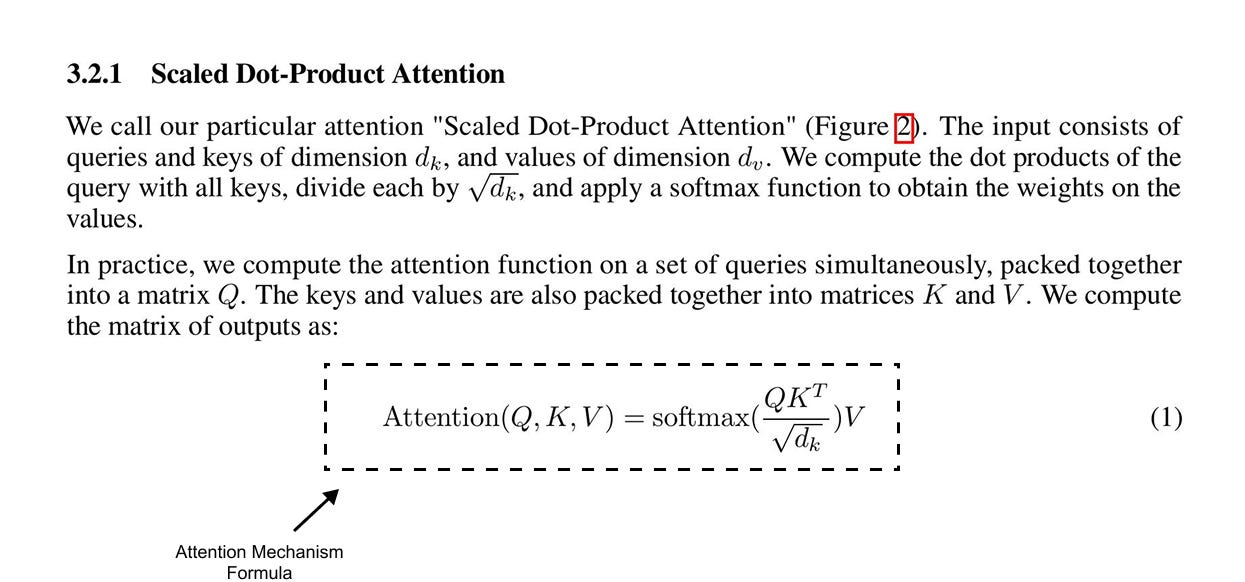

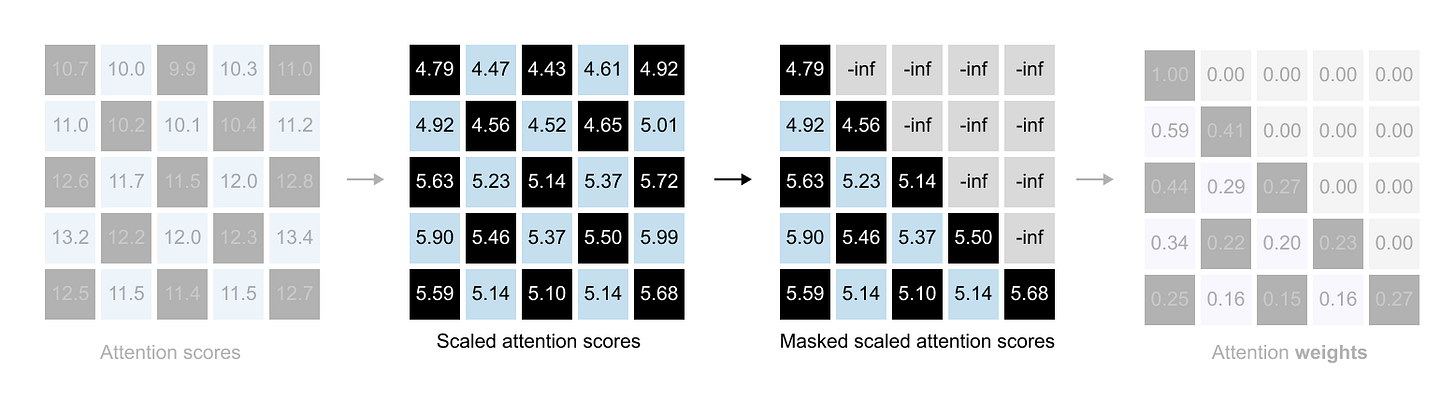

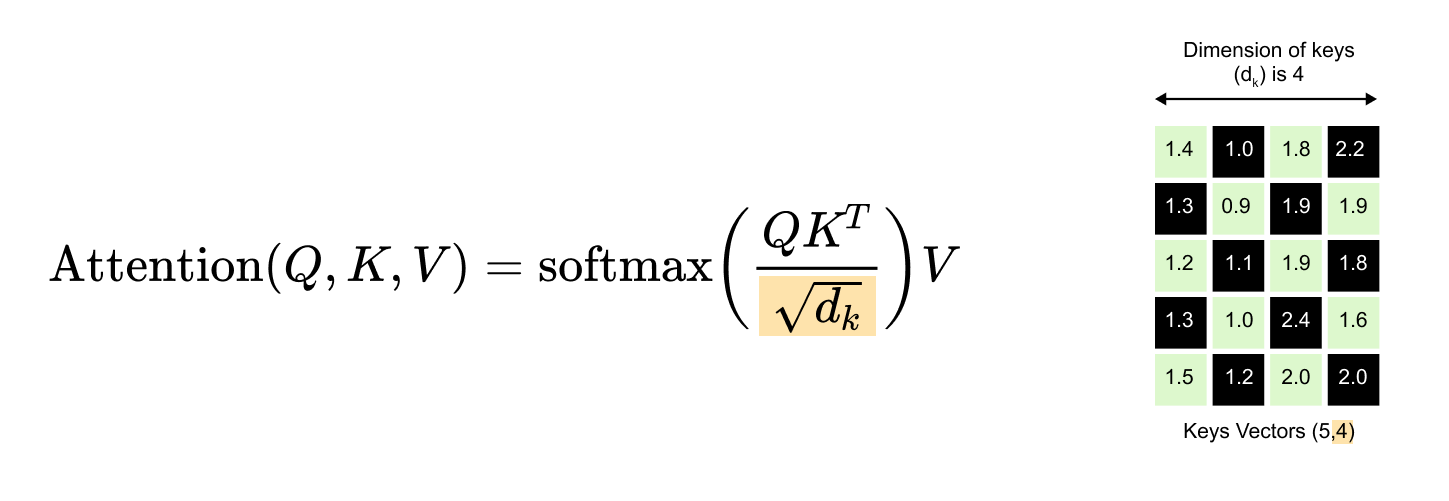

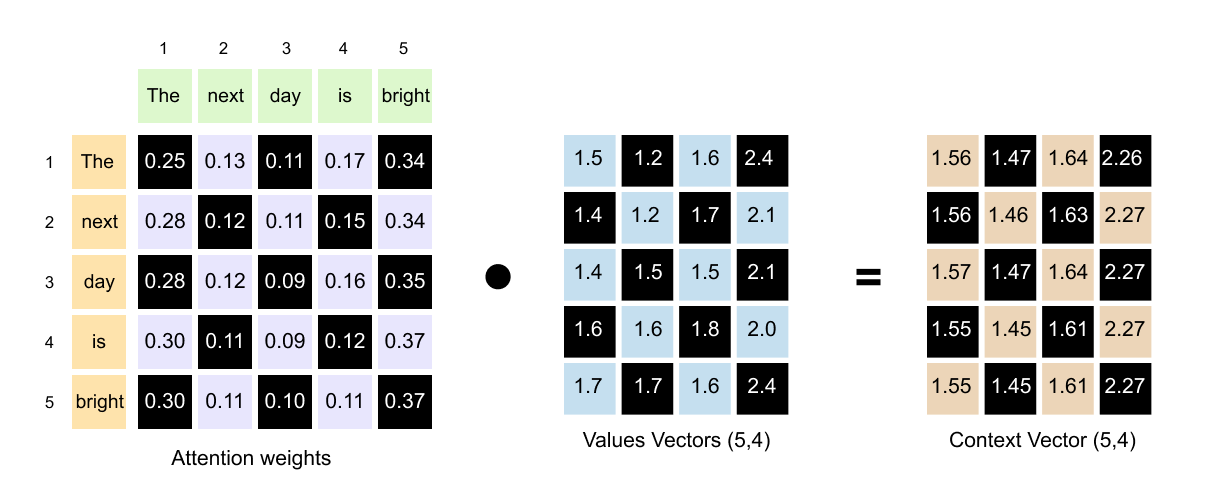

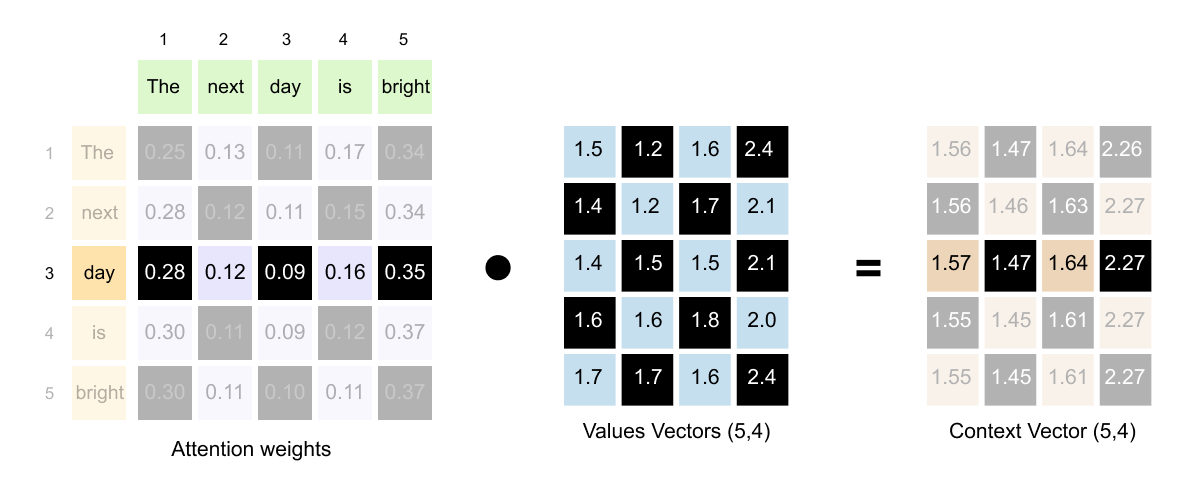

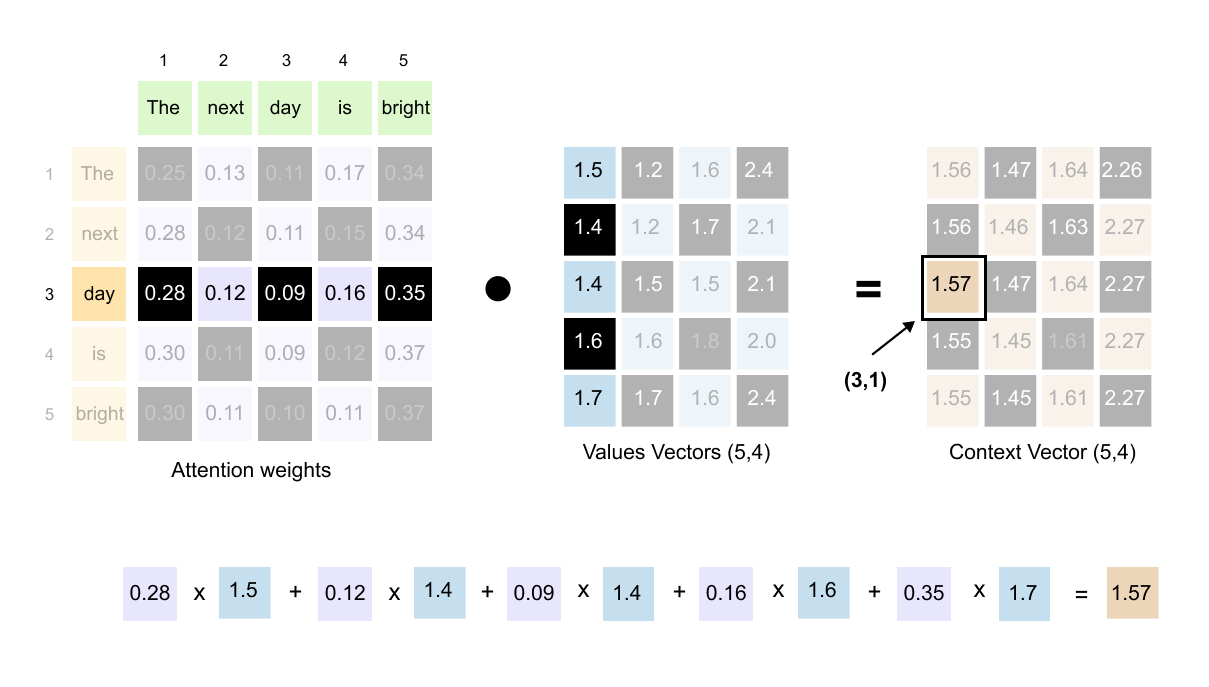

Take the second row, for example. This entire row represents the attention scores between “next” (the query) and all other words (the keys). As you move across the columns, you see how much “next” should attend to “The,” then to “next” itself, then to “day,” then to “is,” and finally to “bright.”