Diffusion Policy: Teaching Robots to Act by Denoising

How diffusion models solve the multimodal action problem in robot imitation learning

The Multimodal Action Problem

Let us start with a simple example. Imagine you are training a robot to push a T-shaped block into a target zone on a table.

You collect expert demonstrations by asking several humans to perform the task. Some humans push the block from the left side, steering it rightward into the target. Others push from the right side, steering it leftward. Both strategies work perfectly.

Now, you train a standard neural network on this data. The network takes the current image of the table as input and predicts a single action: which direction to push.

Here is the problem: the network sees that for the same observation (T-block sitting in the center), the correct answer is sometimes “push left” and sometimes “push right.” To minimize its total squared error across all demonstrations, the network does what any regression model trained with a mean-squared-error loss would do: it takes the average.

The average of “push left” and “push right” is “push straight ahead.”

And pushing straight ahead slams directly into the flat edge of the T-block. The robot fails.

This is called the multimodal action problem. In robotics, it shows up constantly. A robot picking up an object can reach from the left or the right. A robot navigating around an obstacle can go clockwise or counter-clockwise. Whenever multiple valid strategies exist for the same observation, a point-estimate policy collapses them into a single useless average.
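The averaging effect can be reproduced in a few lines. This is a minimal sketch, assuming a policy that outputs one scalar action for a fixed observation and is fit by mean squared error. The encoding of "push left" as -1 and "push right" as +1, and the helper names, are illustrative assumptions rather than anything from the paper.

```python
# Toy setup (assumed encoding): for one fixed observation, half of the
# demonstrations say -1.0 ("push left") and half say +1.0 ("push right").
demos = [-1.0] * 50 + [+1.0] * 50

def mse(prediction, targets):
    """Mean squared error of a single constant prediction."""
    return sum((prediction - t) ** 2 for t in targets) / len(targets)

def fit_constant(targets, lr=0.1, steps=200):
    """Fit one scalar output by gradient descent on the MSE."""
    a = 0.7  # arbitrary starting guess
    for _ in range(steps):
        grad = sum(2 * (a - t) for t in targets) / len(targets)
        a -= lr * grad
    return a

best = fit_constant(demos)
print(f"MSE-optimal action: {best:.3f}")
print(f"MSE at the average: {mse(best, demos):.3f}")
print(f"MSE at a real mode: {mse(1.0, demos):.3f}")
```

The fitted action lands between the two modes, and the loss there is lower than the loss at either valid action, which is why the regression objective itself pushes the policy toward the useless average.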

What we really want is a policy that captures the full distribution of possible actions — not just the mean. The policy should be able to sample from this distribution, and each sample should be a coherent, valid action sequence.

So, how do we build a policy that captures the full distribution of actions, not just the average?

From Image Generation to Action Generation

This brings us to an elegant idea: What if we borrowed the same technique that generates images from noise — diffusion models — and used it to generate robot actions from noise?

Let us quickly understand how diffusion models work. The core idea has two parts.

The Forward Process: Take a clean piece of data — say, an image of a cat — and gradually add Gaussian noise to it, step by step, until the image becomes pure random noise. This is a fixed process. We do not need to learn anything here. We just keep adding noise.

The Reverse Process: Train a neural network to undo this corruption. Given a noisy image, the network learns to predict what the slightly-less-noisy version looks like. If we chain many such denoising steps together — starting from pure noise — we can generate a clean image that looks like it came from the training distribution.

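The mathematical formulation for the forward process is straightforward. At each step k, we corrupt the clean data A⁰ by mixing it with Gaussian noise:

$$A^k = \sqrt{\bar{\alpha}_k}\, A^0 + \sqrt{1 - \bar{\alpha}_k}\, \varepsilon, \qquad \varepsilon \sim \mathcal{N}(0, I)$$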

Here, ᾱₖ is a noise schedule parameter that controls how much of the original signal remains at step k. When k is small, ᾱₖ is close to 1, so most of the original data is preserved. When k is large, ᾱₖ is close to 0, and we have almost pure noise.

Let us plug in some simple numbers to see how this works. Suppose our clean action is A⁰ₜ = 3.0 (a joint angle in radians), the noise schedule gives ᾱₖ = 0.5 at step k, and the sampled noise is ε = 0.8:

$$A^k_t = \sqrt{0.5} \times 3.0 + \sqrt{1 - 0.5} \times 0.8 \approx 2.121 + 0.566 = 2.687$$

Notice how the original action value of 3.0 has been pulled partway toward noise. If we continued this process with smaller and smaller ᾱₖ, the value would eventually become indistinguishable from a random Gaussian sample. This is exactly what we want.
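The same arithmetic can be checked in code. This is a small sketch of the forward step for a single scalar action, using the mixing rule that also appears in the add_noise function later in the article; the function name forward_noise is an assumption made for this example.

```python
import math

def forward_noise(clean, alpha_bar, eps):
    """Mix a clean value with noise: sqrt(alpha_bar) * clean + sqrt(1 - alpha_bar) * eps."""
    return math.sqrt(alpha_bar) * clean + math.sqrt(1.0 - alpha_bar) * eps

# Worked example from the text: clean action 3.0, alpha_bar 0.5, noise 0.8.
noisy = forward_noise(3.0, 0.5, 0.8)
print(f"noisy action at alpha_bar=0.5: {noisy:.3f}")

# As alpha_bar shrinks, the result drifts from the clean action toward the noise sample.
for alpha_bar in (0.99, 0.5, 0.1, 0.001):
    print(alpha_bar, round(forward_noise(3.0, alpha_bar, 0.8), 3))
```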

Now, here is the key insight from the Diffusion Policy paper by Chi et al. (2023): instead of denoising pixels to generate images, we can denoise action sequences to generate robot behaviors.

The input to our denoising process is random noise in the action space — imagine a completely random sequence of joint angles and velocities. The output, after many denoising steps, is a smooth, coherent sequence of robot actions that solves the task.

And the conditioning signal? The robot’s current observations — what the camera sees and what the joint encoders report.

The Diffusion Policy Formulation

Now let us formalize how Diffusion Policy works, step by step.

The first important design choice is how the policy interacts with time. The authors define three temporal horizons:

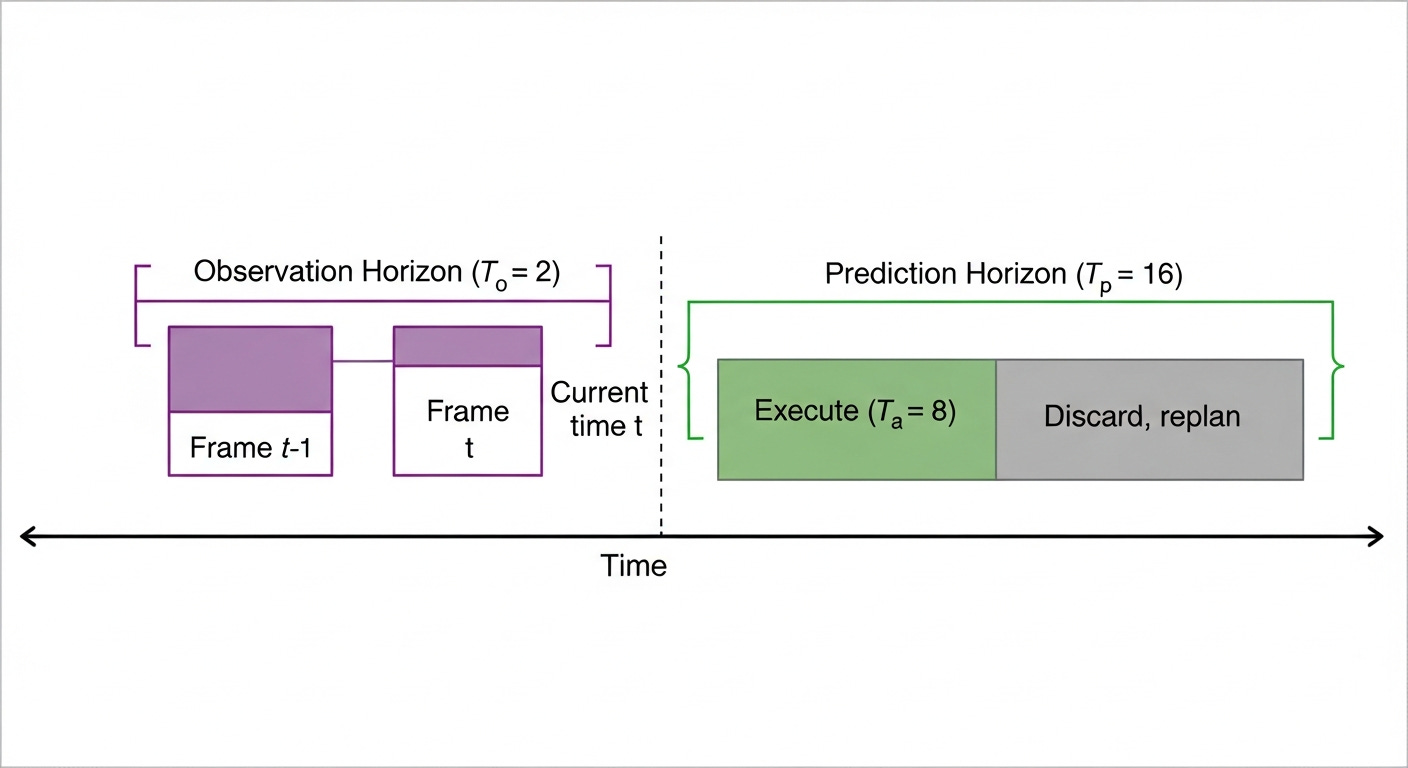

Observation Horizon (Tₒ): The policy receives the latest Tₒ steps of observation data. For example, with Tₒ = 2, the robot looks at the current camera frame and the previous one. This gives the network a sense of motion and velocity.

Prediction Horizon (Tₚ): The diffusion model predicts Tₚ future action steps all at once. Typically Tₚ = 16, meaning each run of the denoising loop outputs a sequence of 16 future actions together, rather than one action at a time.

Action Horizon (Tₐ): Of those Tₚ predicted actions, only Tₐ are actually executed on the robot before the policy replans. Typically Tₐ = 8, meaning we execute half the predicted sequence, then re-observe and generate a new plan.
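The three horizons can be pictured as simple slicing operations. The sketch below uses the values quoted above; the helper names and the string placeholders for frames and actions are assumptions for illustration, and the paper's exact indexing of which predicted step is executed first is not reproduced here.

```python
from collections import deque

T_O, T_P, T_A = 2, 16, 8  # observation, prediction, and action horizons

def latest_observations(history, t_o=T_O):
    """Return the most recent t_o observations, which form the policy input."""
    return list(history)[-t_o:]

def split_plan(plan, t_a=T_A):
    """Split a predicted plan into the executed prefix and the discarded rest."""
    assert len(plan) >= t_a, "cannot execute more steps than were predicted"
    return plan[:t_a], plan[t_a:]

# A rolling buffer keeps only the last T_O camera frames.
history = deque(maxlen=T_O)
for frame in ["frame_0", "frame_1", "frame_2"]:
    history.append(frame)

plan = [f"a_{i}" for i in range(T_P)]   # placeholder for 16 predicted actions
executed, discarded = split_plan(plan)

print(latest_observations(history))
print(executed)
```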

Now let us look at the training process. The training objective is beautifully simple: predict the noise that was added.

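During training, we take a clean action sequence A⁰ₜ from the demonstration data, add noise at a random level k, and ask the network to predict the noise. The loss function is:

$$\mathcal{L} = \mathbb{E}_{k,\, \varepsilon^k} \left[ \left\| \varepsilon^k - \varepsilon_\theta(O_t, A^k_t, k) \right\|^2 \right], \qquad A^k_t = \sqrt{\bar{\alpha}_k}\, A^0_t + \sqrt{1 - \bar{\alpha}_k}\, \varepsilon^k$$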

Here, εᵏ is the actual noise that was added, ε_θ is our noise-prediction network, Oₜ is the observation, and k is the noise level. The network learns to look at a noisy action sequence and predict what noise was added to it.

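Let us plug in some simple numbers. Suppose our action sequence has 3 dimensions (for a 3-DOF robot), and the true noise added was εᵏ = [0.3, -0.1, 0.5]. Our model predicts ε̂ = [0.28, -0.12, 0.48]. The MSE loss is:

$$\text{MSE} = \frac{(0.3 - 0.28)^2 + (-0.1 + 0.12)^2 + (0.5 - 0.48)^2}{3} = \frac{3 \times 0.0004}{3} = 0.0004$$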

This is a very small loss, which means our model is predicting the noise accurately. This is exactly what we want.
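To make the objective concrete, here is a minimal sketch of the loss computation in plain Python. The first part reproduces the three-dimensional example from the text; the second part shows the shape of one training-loss evaluation. The model signature model(obs, noisy, k), the zero-predicting stand-in network, and the three-entry alpha_bar list are hypothetical placeholders, not the paper's implementation.

```python
import math
import random

def mse(true_noise, predicted_noise):
    """Mean squared error between two equal-length lists."""
    return sum((t - p) ** 2 for t, p in zip(true_noise, predicted_noise)) / len(true_noise)

# Reproduce the worked numbers from the text.
loss = mse([0.3, -0.1, 0.5], [0.28, -0.12, 0.48])
print(f"loss = {loss:.4f}")

def training_loss(model, obs, clean_actions, alpha_bar, rng):
    """One noise-prediction loss evaluation for a flat list of action values."""
    k = rng.randrange(len(alpha_bar))                      # random noise level
    noise = [rng.gauss(0.0, 1.0) for _ in clean_actions]   # the noise to be predicted
    scale = math.sqrt(alpha_bar[k])
    sigma = math.sqrt(1.0 - alpha_bar[k])
    noisy = [scale * a + sigma * e for a, e in zip(clean_actions, noise)]
    return mse(noise, model(obs, noisy, k))

# Hypothetical stand-in network that always predicts zero noise.
def zero_model(obs, noisy, k):
    return [0.0] * len(noisy)

example = training_loss(zero_model, None, [0.1, 0.2, 0.3], [0.9, 0.5, 0.1], random.Random(0))
print(f"loss of the zero model on one sample: {example:.4f}")
```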

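At inference time, we start from pure Gaussian noise and iteratively denoise:

$$A^{k-1}_t = \alpha \left( A^k_t - \gamma\, \varepsilon_\theta(O_t, A^k_t, k) + \sigma z \right), \qquad z \sim \mathcal{N}(0, I)$$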

Let us walk through one denoising step with concrete numbers. Suppose Aᵏₜ = 1.5 (a noisy action value), our network predicts ε_θ = 0.8, and the denoising parameters are α = 0.99, γ = 0.5, and σ = 0.1. The random noise sample is z = 0.05:

$$A^{k-1}_t = 0.99 \times (1.5 - 0.5 \times 0.8 + 0.1 \times 0.05) = 0.99 \times 1.105 \approx 1.094$$

Notice how the action value moved from 1.5 toward a less noisy value of 1.094. The network’s noise prediction of 0.8 told us that a large part of the current value was noise, so we subtracted it out. After many such steps, we arrive at a clean action sequence.
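The single step can be written as a one-line function. This sketch assumes the update has the form α · (Aᵏ − γ · ε_θ + σ · z), which is the form that reproduces the 1.094 quoted above; the function name is made up for the example.

```python
def denoise_step(a_k, eps_pred, alpha, gamma, sigma, z):
    """One scalar denoising update: alpha * (a_k - gamma * eps_pred + sigma * z)."""
    return alpha * (a_k - gamma * eps_pred + sigma * z)

# Numbers from the worked example in the text.
a_next = denoise_step(a_k=1.5, eps_pred=0.8, alpha=0.99, gamma=0.5, sigma=0.1, z=0.05)
print(f"next action value: {a_next:.3f}")

# With z = 0 the step is deterministic, which isolates the effect of the noise prediction.
a_no_noise = denoise_step(a_k=1.5, eps_pred=0.8, alpha=0.99, gamma=0.5, sigma=0.1, z=0.0)
print(f"without fresh noise: {a_no_noise:.3f}")
```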

Two Architectures: CNN and Transformer

Now the question is: what does the noise prediction network ε_θ actually look like inside?

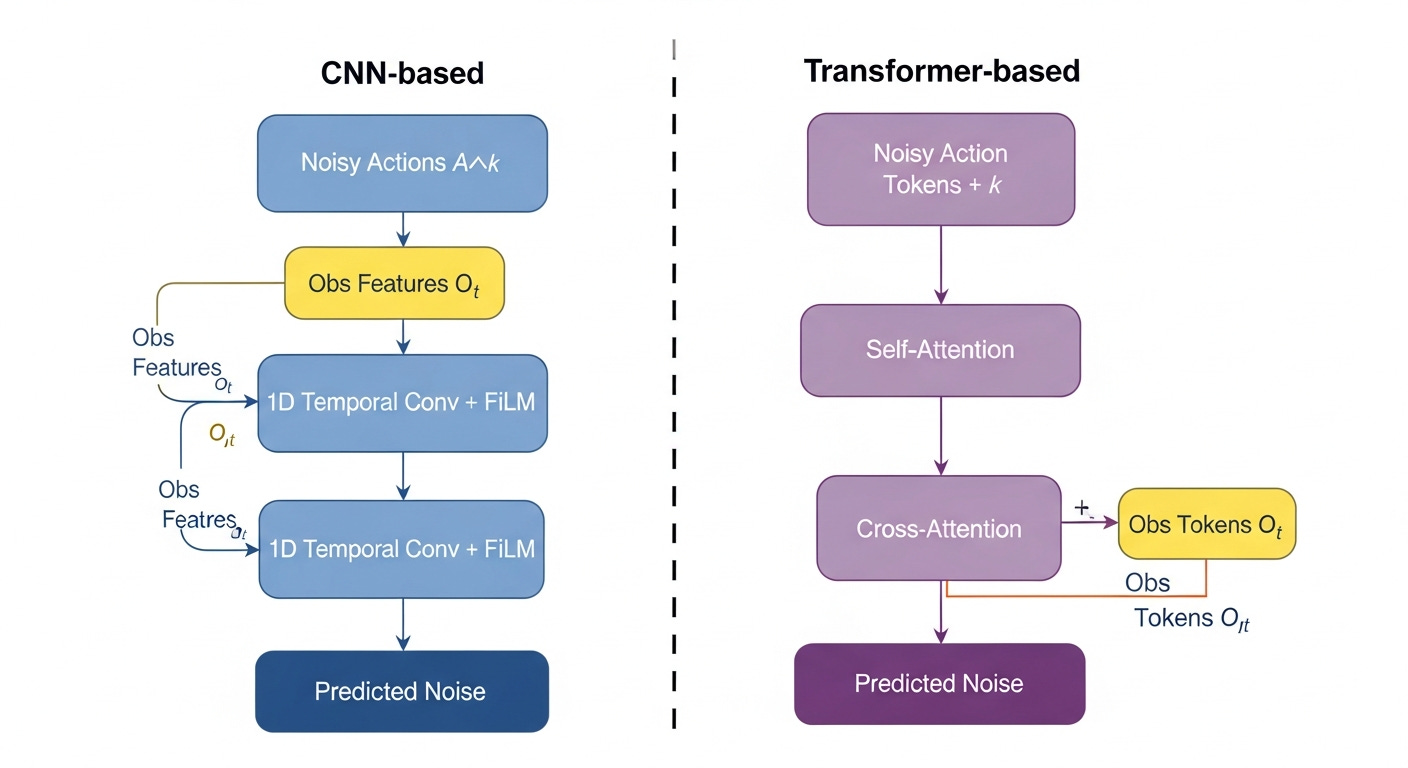

The Diffusion Policy paper proposes two architectures.

CNN-Based Diffusion Policy

The first architecture uses a 1D temporal convolutional network. Think of it as a series of convolution layers that slide along the time axis of the action sequence.

The clever part is how observations are injected. Rather than concatenating observation features to the input, the authors use FiLM conditioning (Feature-wise Linear Modulation). At every convolutional layer, the observation features scale and shift the hidden activations:

$$h' = \gamma(O_t) \odot h + \beta(O_t)$$

Here, h is the hidden activation from the convolution layer, γ(Oₜ) and β(Oₜ) are learned functions of the observation that produce per-channel scale and shift values, and ⊙ denotes element-wise multiplication.

Let us plug in some simple numbers. Suppose a convolutional layer produces a hidden value of h = 2.0 for one channel. The observation features generate γ = 1.5 and β = -0.3 for this channel:

$$h' = 1.5 \times 2.0 + (-0.3) = 2.7$$

The observation has modulated the hidden feature from 2.0 to 2.7. Different observations will produce different γ and β values, allowing the network to condition its denoising behavior on what the robot currently sees. This is exactly what we want.
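A per-channel version of this modulation is short enough to write out. This is a minimal sketch on plain lists; in a real network γ and β would come from learned layers applied to the observation features, which are replaced here by hard-coded example values.

```python
def film(hidden, gamma, beta):
    """Feature-wise linear modulation: scale and shift each channel independently."""
    return [g * h + b for h, g, b in zip(hidden, gamma, beta)]

# Single-channel example from the text: h = 2.0, gamma = 1.5, beta = -0.3.
single = film([2.0], [1.5], [-0.3])
print(single)

# Same hidden activations under two different (made-up) observations.
hidden = [2.0, -1.0, 0.5]
obs_a = film(hidden, gamma=[1.5, 1.0, 0.0], beta=[-0.3, 0.0, 0.2])
obs_b = film(hidden, gamma=[0.5, 2.0, 1.0], beta=[0.0, 1.0, 0.0])
print(obs_a, obs_b)
```

A γ of zero switches a channel off entirely and leaves only the shift, which is one way the observation can gate what the denoiser pays attention to.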

For the visual encoder, the authors use a ResNet-18 backbone with two modifications: they replace global average pooling with spatial softmax pooling (which preserves spatial information about where features are located), and they swap BatchNorm for GroupNorm (which is more stable when using exponential moving average of weights during training).
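To see why spatial softmax keeps location information, here is a small sketch for one feature channel. It turns the feature map into a probability distribution over pixels and returns the expected (x, y) coordinate. The normalization of coordinates to [-1, 1] and the function name are assumptions for this example; the paper's encoder details are not reproduced.

```python
import math

def spatial_softmax(feature_map):
    """Return the softmax-weighted expected (x, y) location of one channel.

    Coordinates are normalized so that the map spans [-1, 1] on both axes.
    """
    height, width = len(feature_map), len(feature_map[0])
    flat = [value for row in feature_map for value in row]
    peak = max(flat)                                  # subtract max for numerical stability
    weights = [math.exp(value - peak) for value in flat]
    total = sum(weights)
    expected_x = expected_y = 0.0
    for index, weight in enumerate(weights):
        row, col = divmod(index, width)
        x = -1.0 + 2.0 * col / (width - 1)
        y = -1.0 + 2.0 * row / (height - 1)
        expected_x += x * weight / total
        expected_y += y * weight / total
    return expected_x, expected_y

# A strong activation in the top-right corner of a 3x3 map.
corner_x, corner_y = spatial_softmax([[0.0, 0.0, 10.0],
                                      [0.0, 0.0, 0.0],
                                      [0.0, 0.0, 0.0]])
print(corner_x, corner_y)

# A flat map carries no location preference, so the expected point is the center.
flat_x, flat_y = spatial_softmax([[1.0, 1.0, 1.0]] * 3)
print(flat_x, flat_y)
```

Global average pooling would return the same number for the first map wherever the peak sat, while the expected coordinate moves with it.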

Transformer-Based Diffusion Policy

The second architecture uses Transformer decoder blocks, inspired by minGPT. The noisy action sequence is treated as a sequence of tokens, with the diffusion timestep k prepended as a special token. The observation features are provided as a separate sequence that the action tokens attend to via cross-attention.

The processing flow is: noisy action tokens pass through self-attention (with causal masking), then cross-attend to the observation tokens, then produce the noise prediction through a feed-forward layer.
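The causal masking step can be illustrated on its own. This sketch computes attention weights for a small score matrix so that action token i only attends to tokens at positions up to i. The scores are made-up numbers, and the learned query, key, and value projections of a real Transformer are left out.

```python
import math

def causal_attention_weights(scores):
    """Row-wise softmax where token i may only attend to tokens j <= i."""
    n = len(scores)
    weights = []
    for i in range(n):
        allowed = scores[i][: i + 1]                  # drop scores for future tokens
        peak = max(allowed)
        exps = [math.exp(s - peak) for s in allowed]
        total = sum(exps)
        # Future positions get exactly zero weight.
        weights.append([e / total for e in exps] + [0.0] * (n - i - 1))
    return weights

scores = [[0.2, 1.0, -0.5],
          [0.7, 0.1, 0.3],
          [0.0, 0.0, 0.0]]
weights = causal_attention_weights(scores)
for row in weights:
    print([round(w, 3) for w in row])
```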

Which one should you use? The CNN-based architecture is the default workhorse — it is faster to train, requires less hyperparameter tuning, and works well on most tasks. The Transformer-based architecture shines on tasks with high-frequency action changes, such as velocity control, where the temporal convolutions of the CNN tend to over-smooth the predictions.

Why Diffusion Policy Handles Multimodality

Let us come back to our original question: how does Diffusion Policy solve the multimodal action problem?

The key insight comes from an energy-based interpretation. The noise prediction network ε_θ implicitly learns the gradient of an energy function over the action space:

$$\nabla_a \log p(a \mid O_t) = -\nabla_a E_\theta(a, O_t) \approx -\varepsilon_\theta(a, O_t)$$

This equation tells us that the negated noise prediction points toward regions of high probability (low energy) in the action space. The beautiful thing is that this works without ever needing to compute a normalizing constant, which is a notoriously difficult problem for energy-based models.

Let us see this with a simple 1D example. Suppose for a given observation, there are two valid actions: a = -1 (go left) and a = +1 (go right). The energy landscape might look like a surface with two valleys — one at a = -1 and one at a = +1 — with a high ridge at a = 0.

If we start from a random noise sample, say aᴷ = 0.3, the gradient will point toward the nearest valley. After many denoising steps, the action will settle into the valley at a = +1.

If we start from aᴷ = -0.4, the gradient points the other way, and the action settles into the valley at a = -1.

This is the fundamental difference from a point-estimate policy. The point-estimate policy would output a = 0 — the average of the two modes — which is not a valid action. The diffusion policy starts from random noise and naturally “falls into” one of the valid modes.
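The two-valley picture can be simulated directly. The sketch below assumes a hand-picked double-well energy E(a) = (a² − 1)², which has valleys at a = -1 and a = +1 and a ridge at a = 0, and follows the negative gradient from the two starting points used above. Real diffusion sampling uses a learned gradient field and injects noise at each step; both are left out here to keep the example deterministic.

```python
def energy(a):
    """Assumed double-well energy with minima at a = -1 and a = +1."""
    return (a * a - 1.0) ** 2

def grad_energy(a):
    """Derivative of the energy: dE/da = 4a(a^2 - 1)."""
    return 4.0 * a * (a * a - 1.0)

def descend(a, step=0.05, n_steps=200):
    """Follow the negative energy gradient for a fixed number of steps."""
    for _ in range(n_steps):
        a -= step * grad_energy(a)
    return a

right = descend(0.3)    # starts slightly right of the ridge
left = descend(-0.4)    # starts slightly left of the ridge
print(f"start  0.3 -> {right:.4f}")
print(f"start -0.4 -> {left:.4f}")
print(f"energy at the average a=0: {energy(0.0)}, at a mode: {energy(1.0)}")
```

Which valley a sample ends in depends only on where the initial noise lands, so repeated sampling covers both modes while no individual sample sits on the ridge.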

And here is what makes this even more elegant: the model commits to a single mode within each rollout. Because the entire action sequence is denoised together, all 16 predicted timesteps land in the same mode. You will never see a trajectory that starts going left and then suddenly switches to going right mid-execution.

Receding Horizon Control: Smooth and Reactive

There is one more important piece of the puzzle: how does the robot actually execute these predicted actions in the real world?

The authors use a strategy called receding horizon control. The idea is simple but powerful:

1. The policy observes the current state and predicts Tₚ = 16 future action steps.
2. The robot executes only the first Tₐ = 8 steps.
3. After executing 8 steps, the robot re-observes the world and generates a fresh prediction of 16 steps.
4. Repeat.

Why not just predict one action at a time? Because single-step prediction leads to jerky, inconsistent motions. The robot might oscillate between modes at every timestep. By predicting a long sequence, the diffusion model ensures temporal consistency — all actions within one prediction are coherent and smooth.

Why not execute all 16 predicted steps? Because the world changes. If the robot gets bumped, or an object shifts unexpectedly, we want to react. By re-observing and replanning every 8 steps, the robot stays responsive to disturbances.

This creates a beautiful balance: long prediction horizon for consistency, short execution horizon for reactivity.
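The predict-long, execute-short loop can be sketched with a toy policy and environment. Everything here is a placeholder: the policy is a hypothetical stand-in that plans a straight line toward a goal instead of running a diffusion sampler, and the environment simply moves the scalar state to the commanded position.

```python
T_P, T_A = 16, 8  # prediction and action horizons from the text

def toy_policy(obs, goal=1.0):
    """Stand-in for the diffusion sampler: T_P position targets toward the goal."""
    return [obs + (goal - obs) * (i + 1) / T_P for i in range(T_P)]

def toy_env_step(obs, action):
    """Position-controlled toy environment: the state jumps to the target."""
    return action

def run_episode(policy, env_step, initial_obs, total_steps):
    """Receding horizon control: plan T_P steps, execute T_A, then replan."""
    obs, executed, replans = initial_obs, [], 0
    while len(executed) < total_steps:
        plan = policy(obs)              # fresh plan from the latest observation
        replans += 1
        for action in plan[:T_A]:       # only the first T_A actions are used
            obs = env_step(obs, action)
            executed.append(action)
            if len(executed) == total_steps:
                break
    return executed, replans

executed, replans = run_episode(toy_policy, toy_env_step, initial_obs=0.0, total_steps=24)
print(f"executed {len(executed)} actions with {replans} plans")
print(f"final position: {executed[-1]}")
```

Because each plan starts from the latest observation, a disturbance between plans would be picked up at the next replanning point, at most T_A steps later.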

The authors also found that using position control (predicting target joint positions) is much more robust than velocity control when dealing with computational latency. With position targets, the robot can still reach approximately the right pose even if the next action arrives a few milliseconds late. With velocity commands, even a small delay causes the robot to overshoot.

Practical Implementation

Enough theory — let us look at some practical implementation now.

We will implement the two core operations: (1) adding noise to a clean action sequence during training, and (2) the denoising loop during inference.

First, let us set up the noise schedule and the forward diffusion process:

```python
import torch
import torch.nn as nn

# --- Noise Schedule ---
def cosine_beta_schedule(T, s=0.008):
    """Cosine noise schedule (improved over linear)."""
    steps = torch.arange(T + 1, dtype=torch.float32)
    alpha_bar = torch.cos((steps / T + s) / (1 + s) * torch.pi / 2) ** 2
    alpha_bar = alpha_bar / alpha_bar[0]
    betas = 1 - alpha_bar[1:] / alpha_bar[:-1]
    return torch.clamp(betas, max=0.999)

# --- Forward Diffusion (Training) ---
def add_noise(clean_actions, k, alpha_bar):
    """Add noise to clean actions at diffusion step k."""
    noise = torch.randn_like(clean_actions)
    sqrt_alpha_bar = torch.sqrt(alpha_bar[k]).view(-1, 1, 1)
    sqrt_one_minus = torch.sqrt(1 - alpha_bar[k]).view(-1, 1, 1)
    noisy_actions = sqrt_alpha_bar * clean_actions + sqrt_one_minus * noise
    return noisy_actions, noise
```

Let us understand this code in detail. The cosine_beta_schedule function computes a noise schedule where the amount of noise added at each step follows a cosine curve — this gives a smoother transition than a linear schedule. The add_noise function takes a clean action sequence, a diffusion step k, and applies the forward diffusion equation we saw earlier. It returns both the noisy actions (which the network will see as input) and the true noise (which the network must learn to predict).
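One detail worth spelling out: cosine_beta_schedule returns per-step betas, while add_noise indexes a cumulative alpha_bar. A plausible bridge, assumed here rather than stated in the article, is the running product of (1 − β). The sketch below mirrors the schedule in plain Python so it runs without PyTorch; the function name cosine_alpha_bar is made up for the example.

```python
import math

def cosine_alpha_bar(T, s=0.008):
    """Pure-Python mirror of the cosine schedule, returning betas and alpha_bar."""
    f = [math.cos((t / T + s) / (1 + s) * math.pi / 2) ** 2 for t in range(T + 1)]
    # Per-step betas, clamped at 0.999 like the torch version.
    betas = [min(1 - f[t + 1] / f[t], 0.999) for t in range(T)]
    # Assumed bridge: alpha_bar[k] is the running product of (1 - beta).
    alpha_bar, running = [], 1.0
    for beta in betas:
        running *= 1 - beta
        alpha_bar.append(running)
    return betas, alpha_bar

betas, alpha_bar = cosine_alpha_bar(100)
print(f"alpha_bar at first step: {alpha_bar[0]:.5f}")
print(f"alpha_bar at mid step:   {alpha_bar[49]:.5f}")
print(f"alpha_bar at last step:  {alpha_bar[-1]:.2e}")
```

With the torch version, the equivalent bridge would be torch.cumprod(1 - betas, dim=0), which gives a tensor that add_noise can index with a batch of step indices.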

Now, let us implement the denoising loop used during inference:

```python
# --- Denoising Loop (Inference) ---
@torch.no_grad()
def denoise_actions(model, obs_features, alpha_bar, T=100):
    """Generate actions by iteratively denoising from pure noise.

    alpha_bar must hold T cumulative products: alpha_bar[k] = prod(alphas[:k + 1]).
    """
    # Recover per-step alphas and betas so that index k lines up with alpha_bar[k]
    alpha_bar_prev = torch.cat([alpha_bar.new_ones(1), alpha_bar[:-1]])
    alphas = alpha_bar / alpha_bar_prev
    betas = 1 - alphas

    # Start from pure Gaussian noise: shape (1, T_p, action_dim)
    action = torch.randn(1, 16, 6)  # 16 steps, 6-DOF robot

    for k in reversed(range(T)):
        # Predict the noise at this step
        predicted_noise = model(obs_features, action, k)

        # Remove predicted noise (simplified DDPM update)
        alpha_k = alphas[k]
        alpha_bar_k = alpha_bar[k]
        action = (1 / torch.sqrt(alpha_k)) * (
            action - (betas[k] / torch.sqrt(1 - alpha_bar_k)) * predicted_noise
        )

        # Add small noise (except at final step)
        if k > 0:
            action += torch.sqrt(betas[k]) * torch.randn_like(action)

    return action  # Clean action sequence: shape (1, 16, 6)
```

This is the heart of the Diffusion Policy inference. We start from pure Gaussian noise with shape (1, 16, 6): one batch, 16 timesteps, 6 degrees of freedom. Then we loop backward from step T − 1 down to step 0. At each step, the model predicts the noise component, we subtract it out (scaled appropriately), and add a small amount of fresh noise for stochasticity (except at the final step). After all T steps, we have a clean, coherent action sequence ready to send to the robot.

Notice how compact this is. The entire inference loop is just a few lines of code. The complexity lives inside the model — which is either the 1D CNN or the Transformer architecture we discussed earlier.
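To watch the loop produce multimodal samples without training anything, here is a toy one-dimensional version in plain Python. It assumes the clean actions are exactly -1 or +1 with equal probability, in which case the ideal noise prediction has a closed form, so an analytic oracle stands in for the learned network. The oracle, the scalar action, and all function names are assumptions for illustration only.

```python
import math
import random

def cosine_schedule(T, s=0.008):
    """Cosine betas and their cumulative products alpha_bar."""
    f = [math.cos((t / T + s) / (1 + s) * math.pi / 2) ** 2 for t in range(T + 1)]
    betas = [min(1 - f[t + 1] / f[t], 0.999) for t in range(T)]
    alpha_bar, running = [], 1.0
    for beta in betas:
        running *= 1 - beta
        alpha_bar.append(running)
    return betas, alpha_bar

def oracle_noise(x, ab):
    """Exact E[noise | x] when clean actions are -1 or +1 with equal probability."""
    expected_clean = math.tanh(math.sqrt(ab) * x / (1 - ab))
    return (x - math.sqrt(ab) * expected_clean) / math.sqrt(1 - ab)

def sample_action(betas, alpha_bar, rng):
    """Scalar DDPM sampling loop with the oracle in place of a network."""
    x = rng.gauss(0.0, 1.0)                       # start from pure noise
    for k in reversed(range(len(betas))):
        eps = oracle_noise(x, alpha_bar[k])
        x = (x - betas[k] / math.sqrt(1 - alpha_bar[k]) * eps) / math.sqrt(1 - betas[k])
        if k > 0:                                 # no fresh noise at the final step
            x += math.sqrt(betas[k]) * rng.gauss(0.0, 1.0)
    return x

rng = random.Random(0)
betas, alpha_bar = cosine_schedule(100)
samples = [sample_action(betas, alpha_bar, rng) for _ in range(200)]
left = sum(1 for x in samples if x < 0)
print(f"{left} samples near -1, {len(samples) - left} samples near +1")
```

Every sample ends at one of the two valid actions and none at the average of zero, which is the behavior the noise-prediction network is trained to approximate.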

Results: How Well Does It Work?

Now let us see how Diffusion Policy performs across different tasks.

The authors evaluated across 15 tasks from 4 different benchmarks, in both simulation and the real world. The results are remarkable.

Simulation Results (RoboMimic):

On standard manipulation tasks like lifting, picking, and placing, most methods do well. But the gap becomes dramatic on harder tasks:

ToolHang (hang a tool on a hook — requires precise multimodal grasping): Diffusion Policy CNN achieves 93% success. Implicit Behavioral Cloning (IBC) achieves 0%. Behavior Transformer (BET) achieves 52%. The task requires the robot to choose between multiple valid grasp orientations — exactly the multimodal scenario where point estimates fail.

Transport (bimanual manipulation — two arms must coordinate): Diffusion Policy achieves 96% success, outperforming all baselines.

Kitchen (multi-stage cooking tasks): Diffusion Policy achieves 96-99% on the hardest metric, compared to 44% for BET. That is more than double BET's success rate on this metric (the paper quotes the gain as a 213% improvement).

Real-World Results:

The Push-T task we discussed at the beginning? Diffusion Policy achieves 95% success with a coverage IoU of 0.80. LSTM-GMM achieves only 20% success. IBC achieves 0%. The human baseline is 100% success with 0.84 IoU — Diffusion Policy is remarkably close to human performance.

On more complex real-world tasks:

- Sauce pouring: 79% success with 0.74 IoU (human: 0.79 IoU)
- Sauce spreading: 100% success with 0.77 coverage (human: 0.79)
- Shirt folding (bimanual): 75% success from 284 demonstrations
- Mug flipping (7-DOF with complex 3D rotations): 90% success

Across all tasks, Diffusion Policy achieves an average improvement of 46.9% over the previous state-of-the-art. Not bad, right?

Conclusion

Let us summarize the three key ideas behind Diffusion Policy:

Represent robot policies as conditional denoising diffusion processes. Instead of predicting a single action, sample from the full action distribution by iteratively denoising random noise, conditioned on observations.

Predict action sequences, not single actions. By generating an entire trajectory at once, the policy produces temporally consistent, smooth motions that commit to one mode.

Use receding horizon control for execution. Predict long (Tₚ = 16), execute short (Tₐ = 8), replan often. This balances consistency with reactivity.

Diffusion Policy has become a foundational method in modern robot learning. Its ability to handle multimodal demonstrations, produce smooth trajectories, and work reliably in the real world has made it the default choice for many imitation learning systems that followed.

Original paper: Chi et al., “Diffusion Policy: Visuomotor Policy Learning via Action Diffusion” (2023)

Project page: https://diffusion-policy.cs.columbia.edu/

References:

Chi et al., “Diffusion Policy: Visuomotor Policy Learning via Action Diffusion” (2023)

Ho et al., “Denoising Diffusion Probabilistic Models” (2020)

Janner et al., “Planning with Diffusion for Flexible Behavior Synthesis” (2022)

Florence et al., “Implicit Behavioral Cloning” (2022)

Shafiullah et al., “Behavior Transformers: Cloning k Modes with One Stone” (2022)

Perez et al., “FiLM: Visual Reasoning with a General Conditioning Layer” (2018)
